Howdy, Alex here, let me catch you up on everything that happened in AI:

(btw, if you didn’t hear from me last week, it was a Substack glitch. It was a great episode with 3 interviews and our 3rd birthday, and I highly recommend checking it out here)

This week started on a relatively “chill” note, if you consider Anthropic enabling the 1M context window chill, and then escalated from there. We covered the new GPT 5.4 Mini & Nano variants from OpenAI, how MiniMax used autoresearch loops to improve MiniMax 2.7, Cursor shipping their own updated Composer 2 model, and how NVIDIA CEO Jensen Huang embraced OpenClaw, calling it “the most important OSS software in history” and saying that every company needs an OpenClaw strategy.

Also, OpenAI acquired Astral (makers of the ruff and uv tools) and Mistral released a “small” 119B unified model. Let’s dive in:

Big Companies LLMs

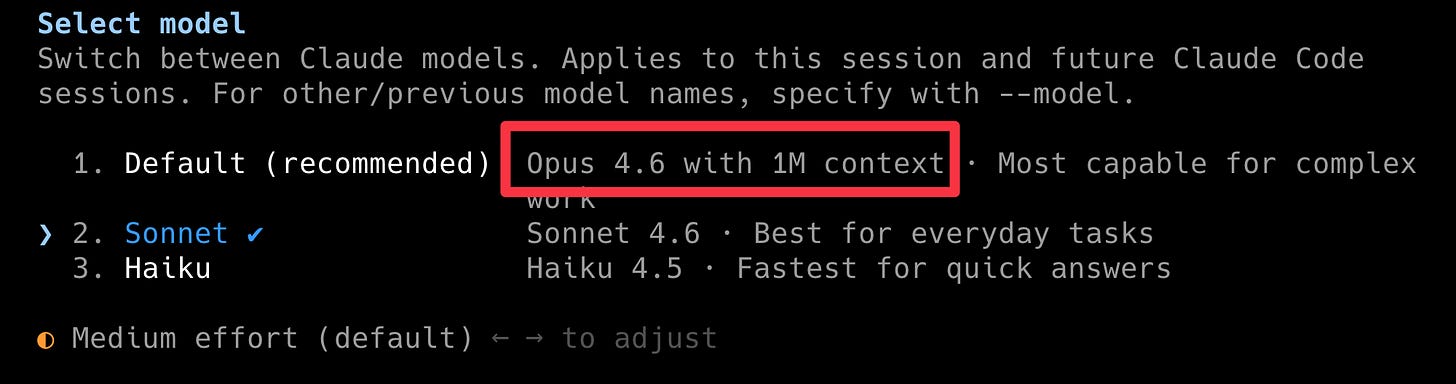

1M context is now default for Opus.

Anthropic enabled the 1M context window they shipped Claude with in beta, by default, to everyone.

Claude, Claude Code, hell, even OpenClaw if you’re able to get your Max account in there, are now using the 1M long-context version of Opus. This is huge because, while it’s not perfect, it’s absolutely great to have 1 long conversation and not worry about auto-compaction of your context.

As we just celebrated our 3rd anniversary, I remember that back then we were excited to see GPT-4 with 8K context. Love how fast we’re moving on this.

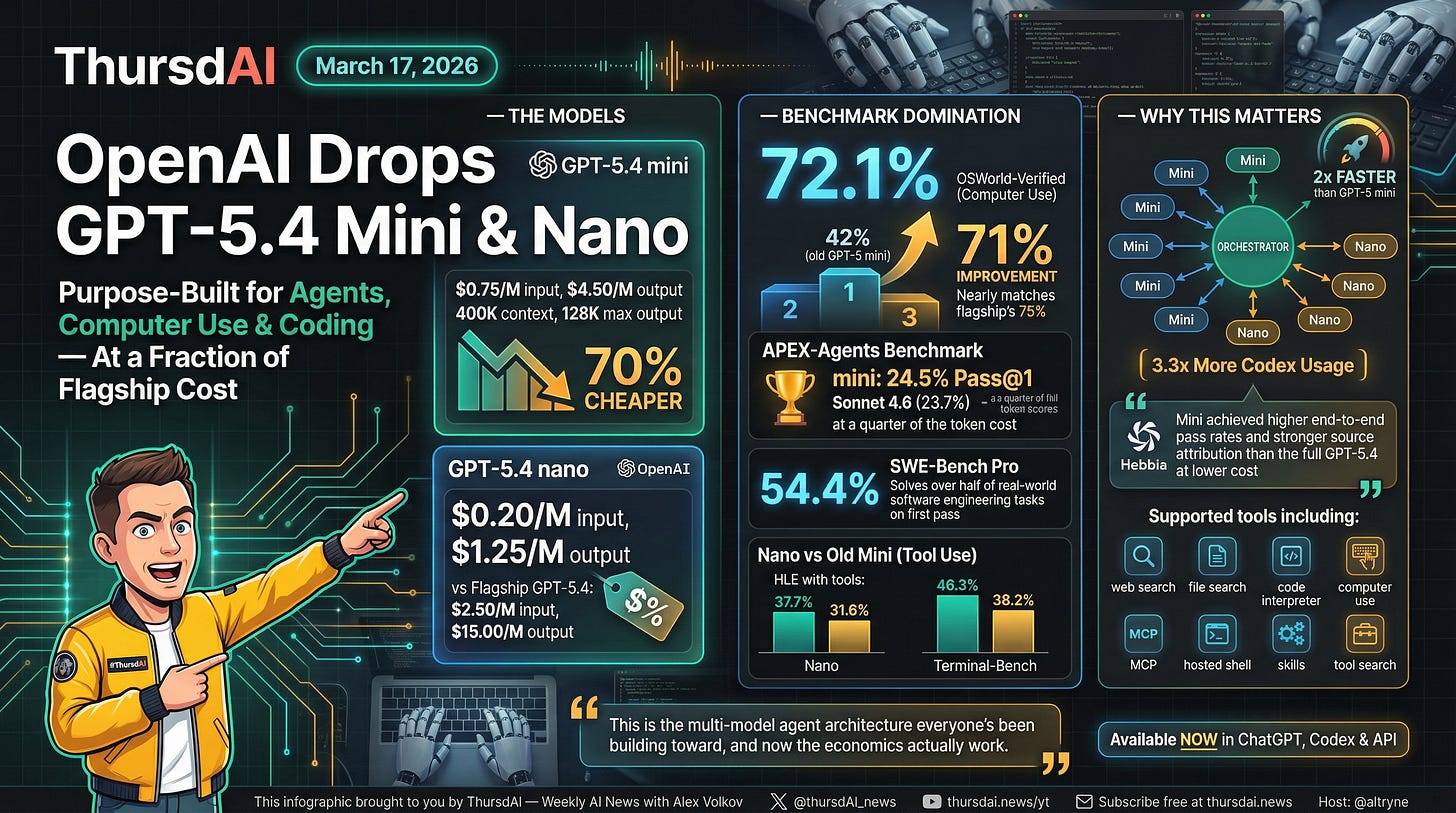

OpenAI drops GPT-5.4 mini and nano, optimized for coding, computer use, and subagents at a fraction of flagship cost

Last week on the show, Ryan said he burned through 1B (that’s 1 billion) tokens in a day! That is crazy, and there’s no way a person sitting in front of a chatbot can burn through this many tokens. This is only achieved via orchestration.

To support this use-case, OpenAI dropped 2 new smaller models that are cheaper and faster to run. GPT 5.4 Mini achieves a remarkable 72.1% on OSWorld Verified, which means it uses the computer very well and can browse and do tasks. It’s 2x faster than the previous mini, and at 75 cents per 1M tokens, this is the model you want to use in many of your subagents that don’t require deep engineering.

This is OpenAI’s... Sonnet equivalent, at 3x the speed and 70% of the cost of the flagship.

Nano is even crazier, 20 cents per 1M tokens, but it’s not as performant, so I wouldn’t use it for code. But for small tasks, absolutely.

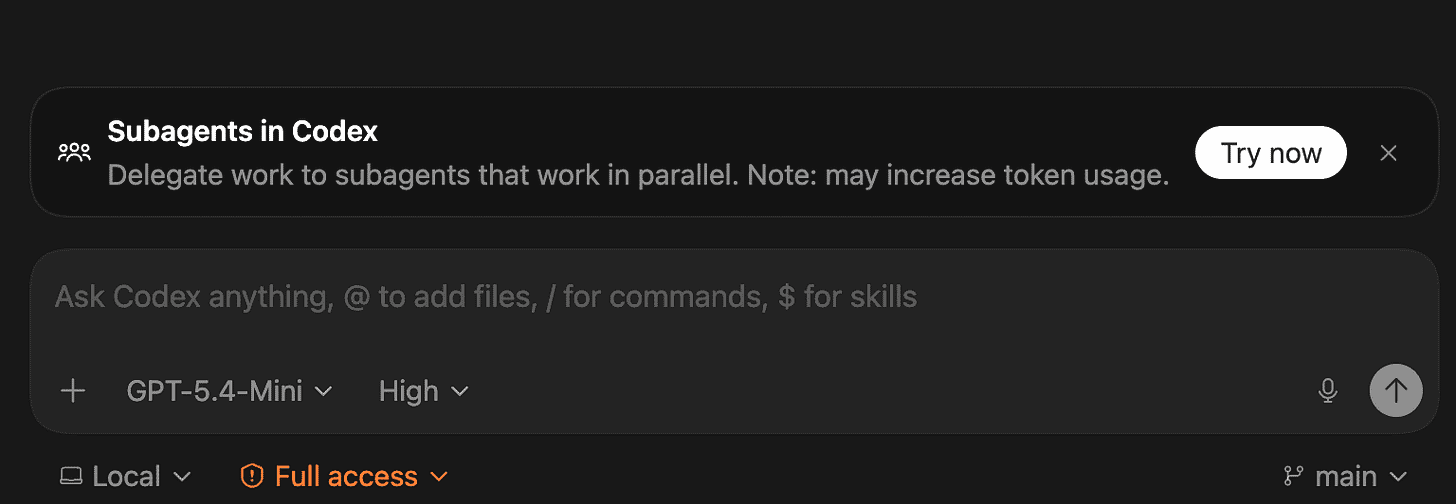

Here’s the thing that matters: these models are MEANT to be used with the new “subagents” feature that was also launched this week in Codex. All you need to do is... ask!

Just tell Codex “spin up a subagent to do... X” and it’ll do it.

OpenAI shifts focus to AI for engineering and enterprise, acquires Astral, makers of uv

Look, there’s no doubt that OpenAI is the absolute leader in AI. They brought us ChatGPT, with over 900M users using it weekly. But they see what every enterprise sees: developers are MUCH more productive (and slowly, so is everyone else) when they use tools that can code.

According to WSJ, OpenAI executives will reprioritize some of the side-quests they have (Sora?) to focus on productivity and business. Which essentially means, more Codex, more Codex native, more productivity tools.

With that focus, today they announced that OpenAI / Codex is acquiring Astral, the folks behind the widely popular uv Python package manager. This brings strong developer tools firepower to the Codex team, as the Astral folks are great at writing incredibly fast tools in Rust! Looking forward to seeing how these great folks improve Codex even more.

Jensen Declares Total OpenClaw Victory at GTC, Announces NemoClaw (GitHub)

This was kind of surreal. NVIDIA CEO Jensen Huang is famous for doing his stadium-size keynote without a teleprompter, and for the last 10 minutes or so he went all in on OpenClaw, calling it “the most important OSS software in history” and outlining how this is the new computer.

His point was that Peter Steinberger, with OpenClaw, showed the world a blueprint for the new computer: a personal agentic system with IO, files, computer use, and memory, powered by LLMs.

Jensen did outline that the 3 things that make OpenClaw great are also the things that enterprises cannot allow: write access to your files plus the ability to communicate externally is a bad combo. So they have launched NemoClaw.

They’ve got a bunch of security researchers working with the OpenClaw team to integrate their new OpenShell sandboxing effort, network guardrails and policy engine integration.

I reminded folks on the pod that the early internet was very insecure; there was a time when folks were afraid of using their credit cards online. OpenClaw seems to be speedrunning that arc from “insecure but super useful” to “secure because it’s super useful”, and it’s great to see a company as huge as NVIDIA embrace it.

Not to mention that given that agents can run 24/7, this means way more inference and way more chips sold for NVIDIA so makes sense for them, but still great to see!

Manus “my computer” and other companies replicating “OpenClaw” success

This week it became clear: after last week’s Perplexity “computer”, Manus (now part of Meta) has also announced a local extension of their cloud agents. Those two are only the first announcements; it’s clear now that every company dissected OpenClaw’s moment and will be trying to give its users what they want: an agentic, always-on AI assistant with access to the user’s files, documents etc.

Claude Code added “channels” support with Telegram and Discord connectors today, which is also one big missing piece of the puzzle for them. Everything is converging on this. Even OpenAI is rumored to be consolidating Codex (which is seeing huge success) with OpenAI and the Atlas browser into 1 “mega” app that would do these things and act as an agent.

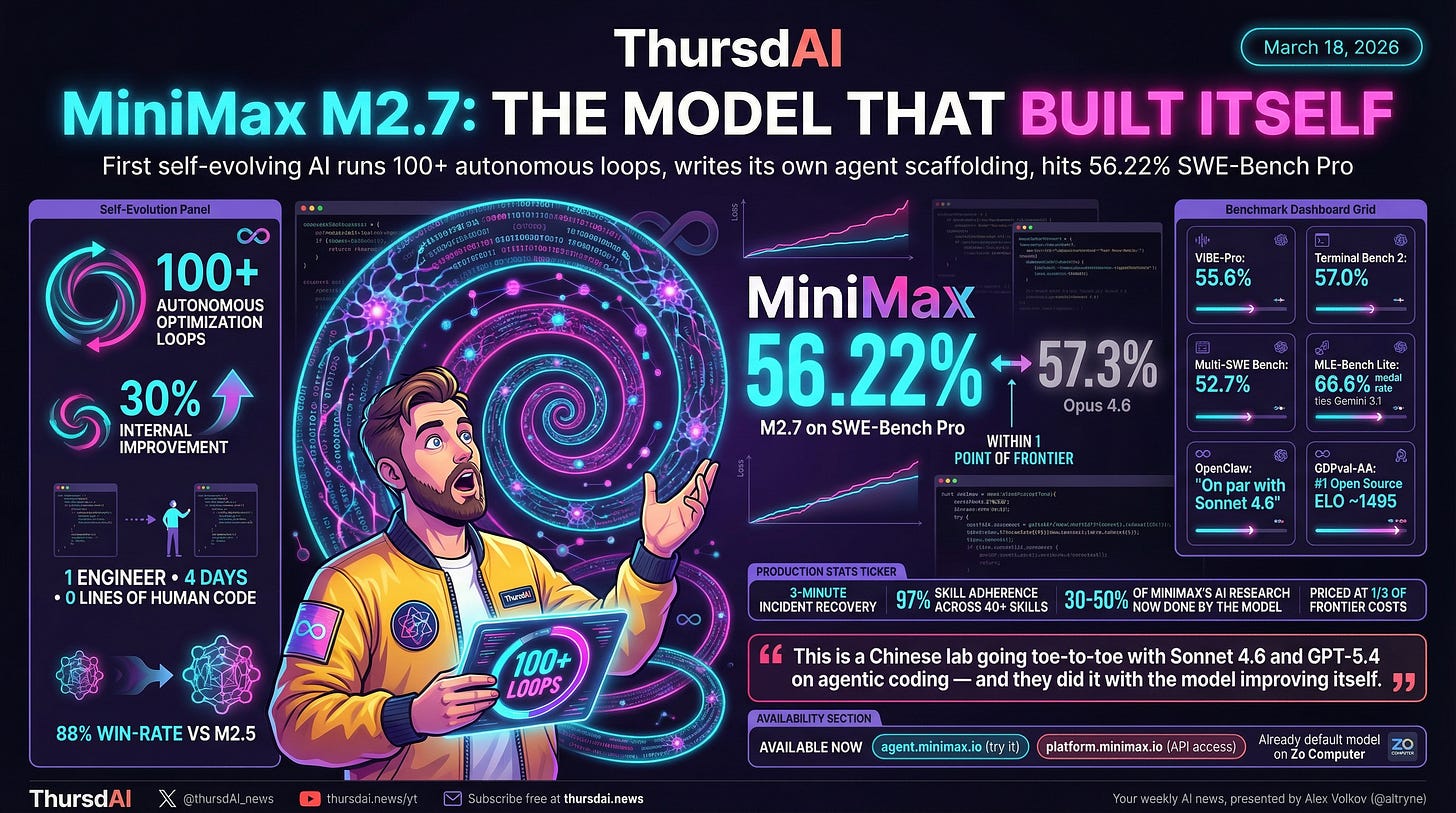

MiniMax M2.7: The Model That Built Itself

This one blew me away, it’s not quite open source (yet?) but the MiniMax folks are coming out with a 2.7 version just after their MiniMax 2.5 was featured on our show and .. they are claiming that this model trained itself.

Similarly to Andrej Karpathy’s auto-researcher, the MiniMax folks ran 100+ autonomous optimization loops to get this model to 56.22% on the hard SWE-Bench Pro benchmark (close to Opus’s 57.3%!), and it gets an 88% win rate vs the very excellent MiniMax 2.5.

They used the previous model to build the agent harness and scaffolding, with 1 engineer babysitting these agents and writing 0 lines of human code. As we said before, every company will be doing this, as we’re staring the singularity in the face!

We’ve evaluated this model as well (Wolfram has been busy this week!) and it’s doing really well on WolfBench with a 52% average and a 64% top score. It’s very close to 5.3 codex on our Terminal Bench benchmark!

We hope that this model will be open source at some point soon as well!

Cursor drops Composer 2 - nearly matching Opus 4.6, fast version (Blog)

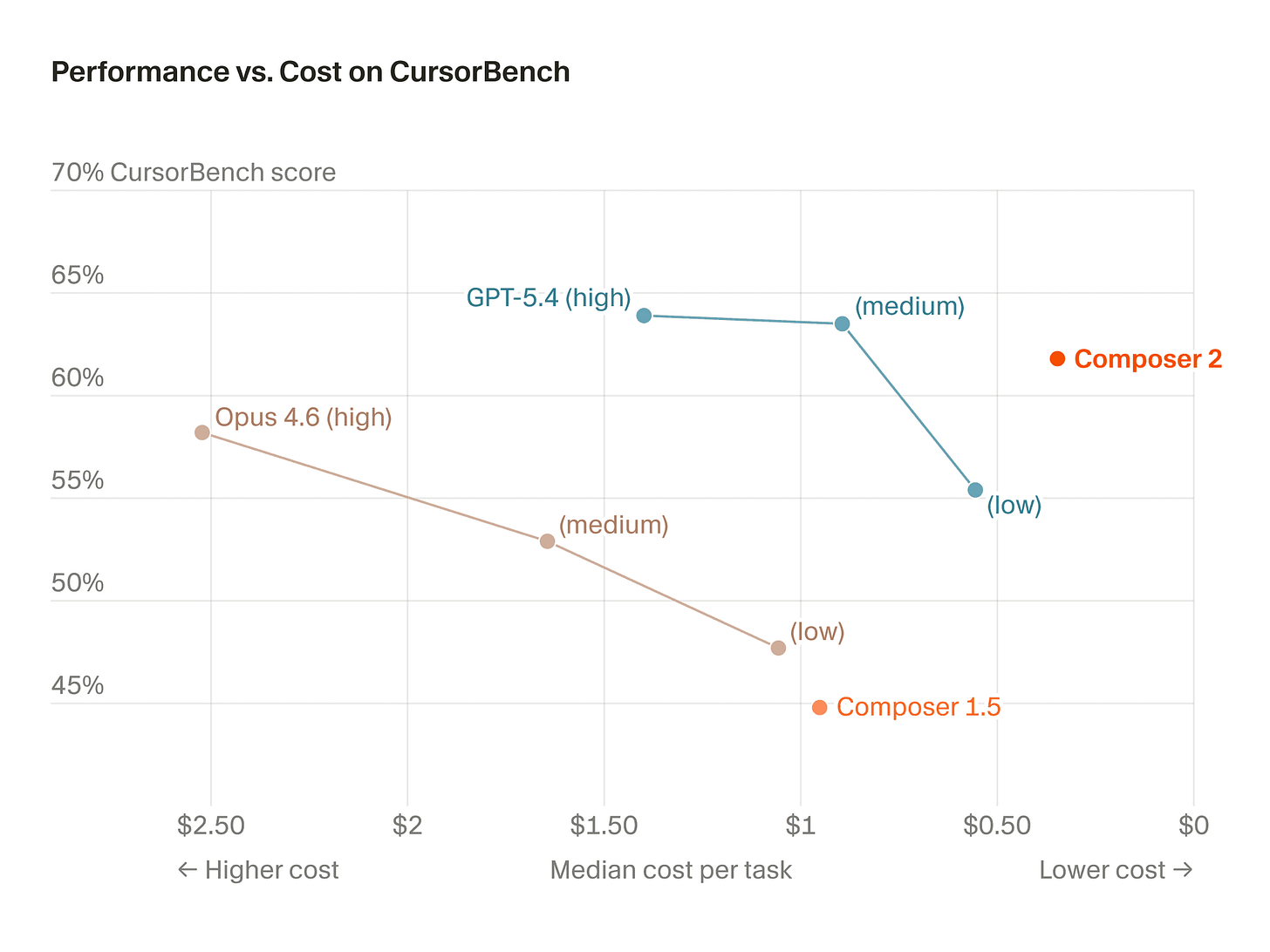

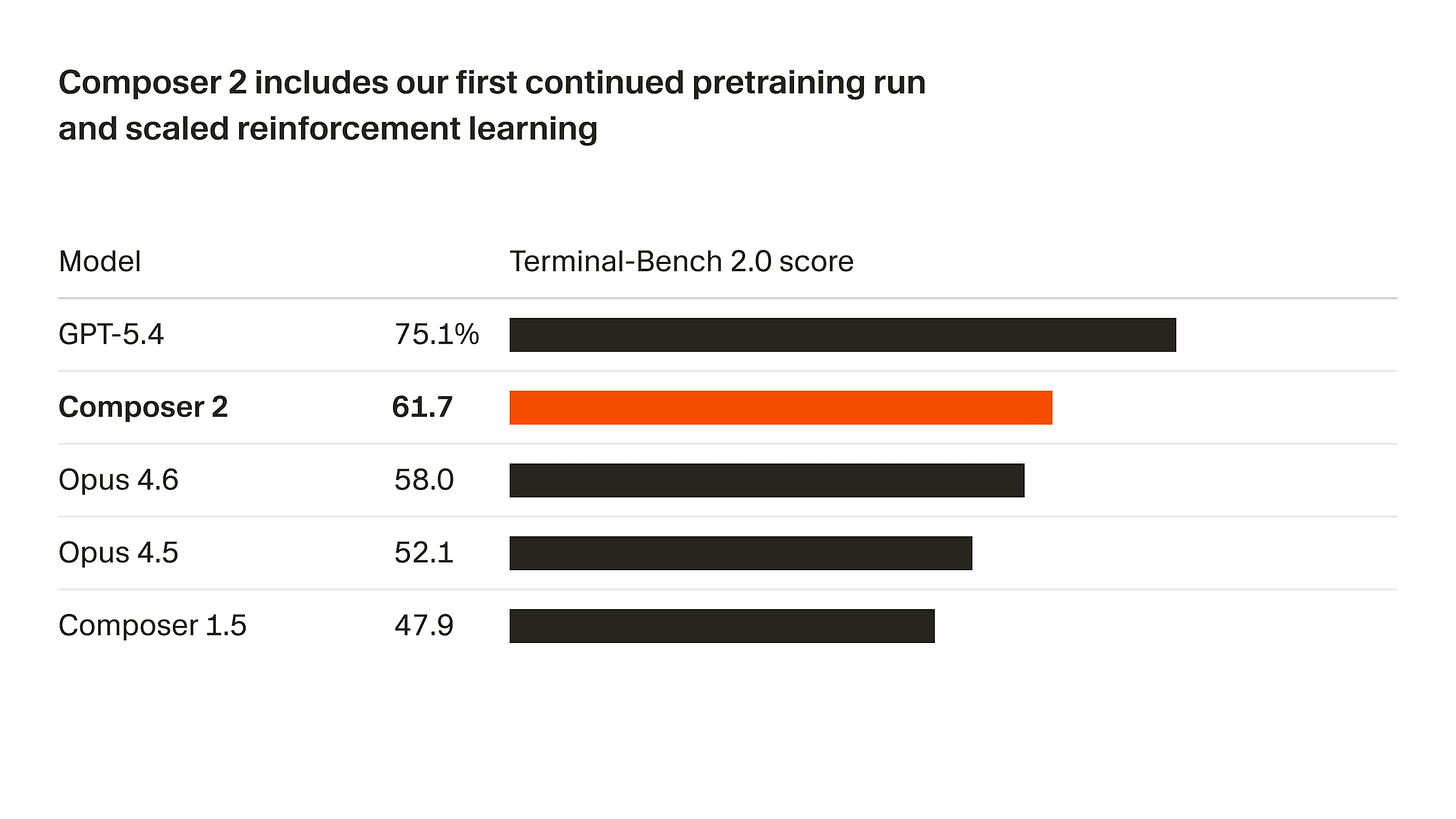

Cursor decided to add to our show’s breaking news record of Thursday releases with a brand new in-house trained Composer 2. This time they released more benchmarks than only their internal “composer bench”, and this model looks great! (We are pretty sure it’s a finetune of a Chinese OSS model, but we don’t know which.)

Getting 61% on Terminal Bench and beating Opus 4.6 is quite a significant achievement. Coupled with the incredible pricing they are offering, $0.50 per 1M input tokens and $2.50 per 1M output tokens, Cursor is really aiming for the productivity folks and showing that they are more than just an IDE.
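To make that pricing concrete, here is a back-of-the-envelope sketch in Python. The two rates are the ones quoted above; the session size (20M tokens read, 2M tokens written) is a made-up example, and real bills may differ (caching, plan limits and so on are not modeled).

```python
# Composer 2 prices as quoted in this post, in USD per 1M tokens.
INPUT_USD_PER_M = 0.50
OUTPUT_USD_PER_M = 2.50


def session_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one session at the quoted rates."""
    input_cost = (input_tokens / 1_000_000) * INPUT_USD_PER_M
    output_cost = (output_tokens / 1_000_000) * OUTPUT_USD_PER_M
    return input_cost + output_cost


# Hypothetical long agent session: 20M tokens in, 2M tokens out.
cost = session_cost(20_000_000, 2_000_000)
print(f"${cost:.2f}")  # $15.00
```

Agent loops re-read a lot of context, so input tokens tend to dominate volume, which is why the low input price matters for this kind of workload.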

Early users are reporting noticeably cleaner code than both Opus and Composer 1.5 — better adherence to clean code principles, smarter multi-file implementations, and strong performance on long-horizon agentic tasks like full API migrations and legacy codebase refactoring. They also shipped a new interface called Glass (in alpha) that’s built for monitoring these long-running agent loops.

Open Source: Mistral is Back, Baby

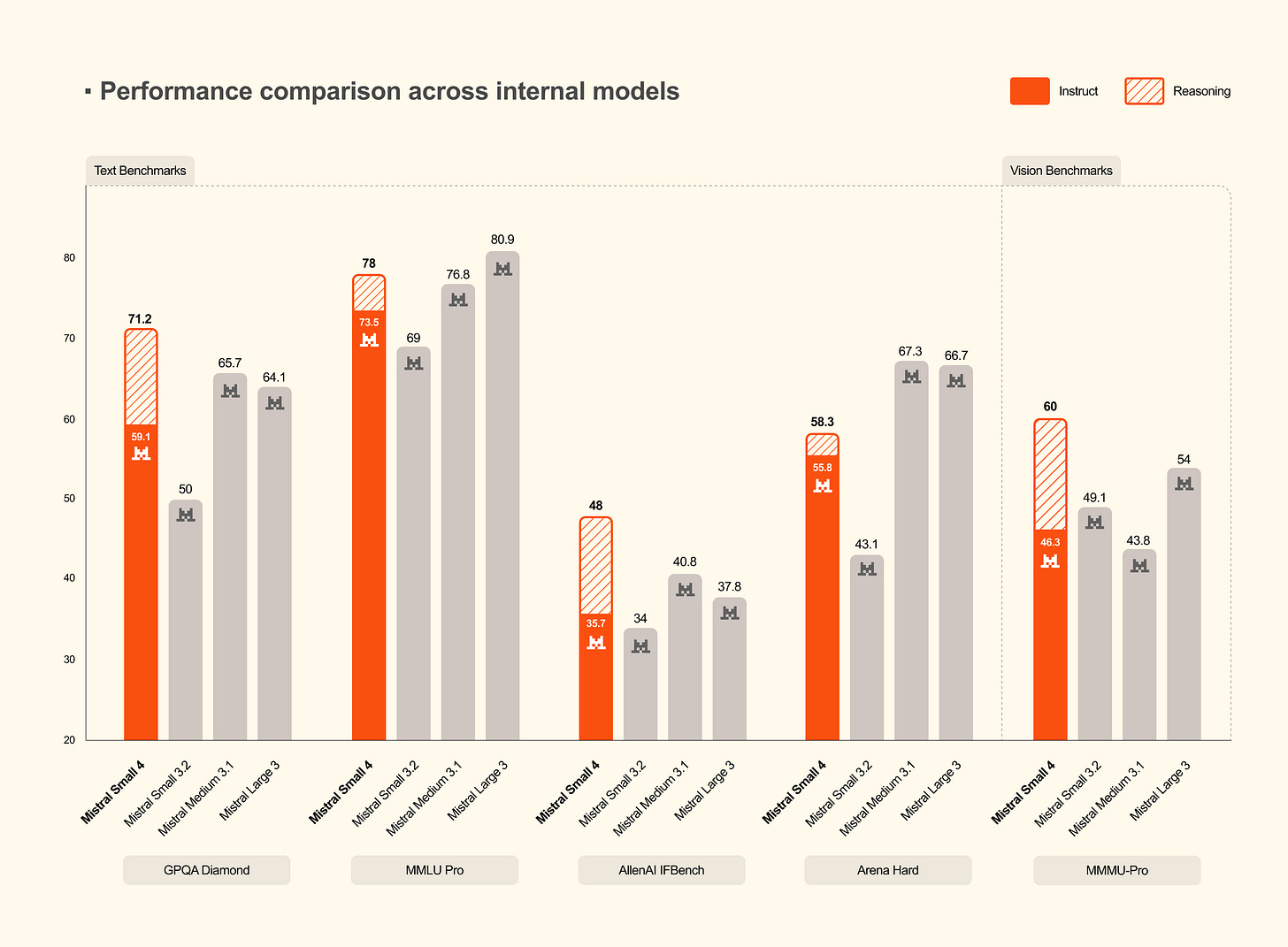

Mistral Small 4: 119B MoE with 128 experts + Apache 2.0 (X, Blog, HF)

It’s been a while since Mistral dropped something properly open source, and this week they kicked off what looks like their fourth generation with Mistral Small 4. The name is a little funny given the actual size — 119 billion total parameters, 128 experts in the mixture — but with only 6 billion active per token. So you get the knowledge footprint of a massive model but the compute profile of a small one. Very MoE-brained.
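A quick worked example of what “119B total, 6B active” means in practice. The parameter counts come from the announcement as described above; the bits-per-weight values are nominal assumptions (real quant formats such as IQ4 carry some overhead), and the estimate covers weights only, not KV cache or activations.

```python
TOTAL_PARAMS = 119e9   # total parameters across all experts
ACTIVE_PARAMS = 6e9    # parameters used per token


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active per token: {active_fraction:.1%}")           # 5.0%
print(f"16-bit weights: {weight_gb(TOTAL_PARAMS, 16)} GB")  # 238.0 GB
print(f"4-bit weights:  {weight_gb(TOTAL_PARAMS, 4)} GB")   # 59.5 GB
```

So compute per token looks like a roughly 6B model, but you still need memory for all 119B parameters, which is why the 4-bit quants are what people reach for locally.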

The bigger story here is what’s unified inside: this is Magistral (reasoning), Pixtral (multimodal), and Devstral (coding) all rolled into one weights file. Previously you had to choose which Mistral “side quest” model you wanted. Now there’s a reasoning_effort parameter where you dial from none for fast cheap responses all the way up to high for step-by-step thinking, no model switch required.
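Here is a rough sketch of what switching effort per request could look like. Only the `reasoning_effort` name and the `none` and `high` values come from the announcement; the model id is a placeholder, and putting the field at the top level of a chat-style request body is my assumption, so check Mistral’s API docs before relying on it.

```python
import json

# Placeholder id, NOT a confirmed model name.
MODEL_ID = "mistral-small-4-placeholder"


def build_request(prompt: str, needs_deep_reasoning: bool) -> dict:
    """Build a chat-style request body, picking the effort per task.

    The body layout is an assumption for illustration only.
    """
    return {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": "high" if needs_deep_reasoning else "none",
    }


quick = build_request("Summarize this changelog in one line.", False)
deep = build_request("Find the race condition in this scheduler.", True)
print(json.dumps(quick, indent=2))
print(deep["reasoning_effort"])  # high
```

The point is that the routing decision moves from “which model do I call” to a single field on the same call.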

How does it perform? We ran it through WolfBench and it landed toward the lower end of Wolfram’s current leaderboard — around 17% on the agentic tasks, roughly on par with Nemotron at the same scale. It’s not competing with Opus or GPT-5.4, and we weren’t really expecting it to. What we’re excited about is that it does multimodal, reasoning, and coding in one Apache-licensed package, and people are already running IQ4 quants locally. Shout out to Mistral for the return to open source — it’s been a minute, and the community noticed.

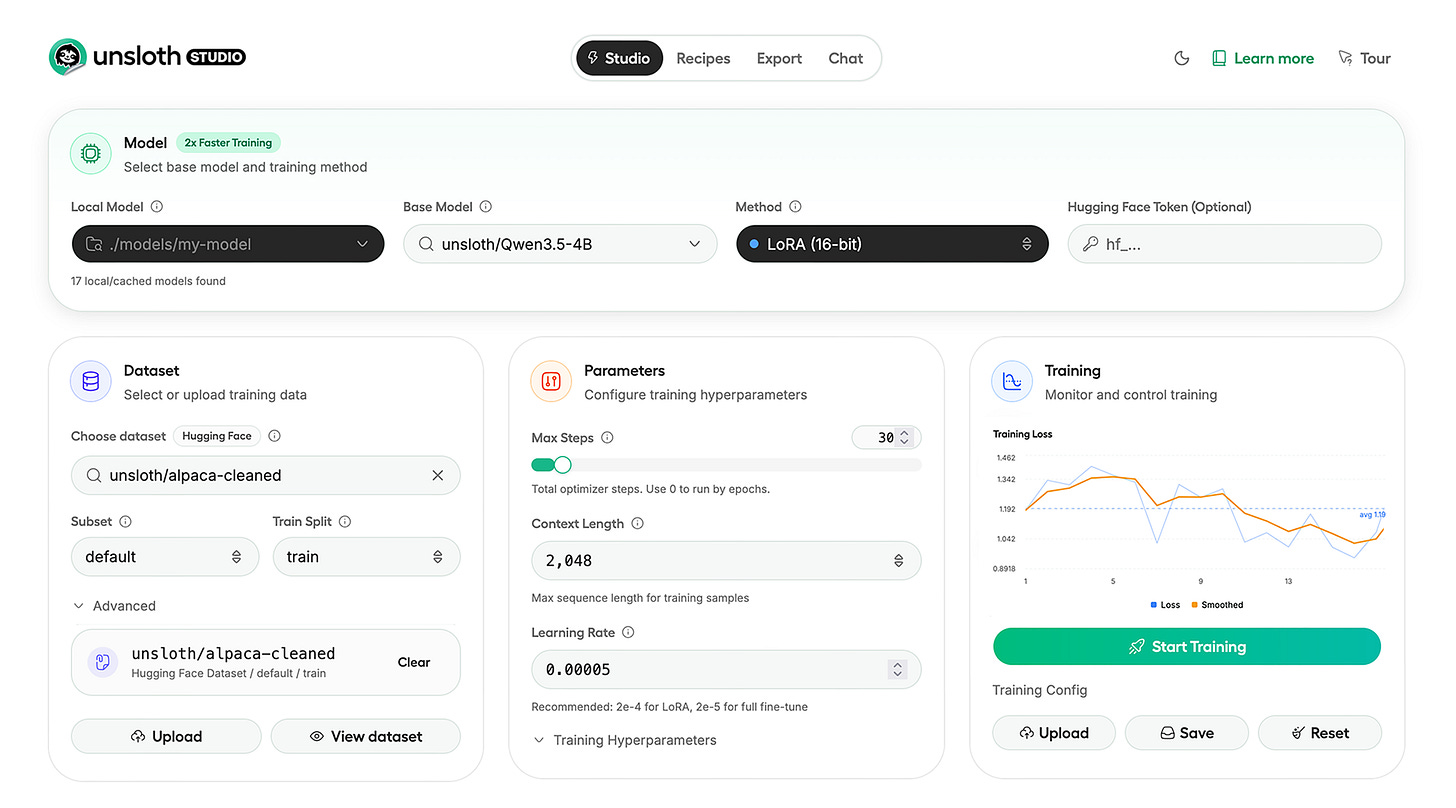

Unsloth Studio: Fine-Tuning Gets a UI (Blog)

Something I think people are sleeping on this week is Unsloth Studio, the open-source web UI that the Unsloth team just launched for local LLM training and inference. Unsloth has been quantizing and compressing models better than basically anyone for a while now — 2x training speed, 70% less VRAM, zero accuracy loss — but that was all code-first. Studio is the no-code interface layer on top of all of that.

The numbers: supports 500+ models across text, vision, audio, and embeddings. It runs 100% offline with no telemetry. Julien Chaumond, the CTO of Hugging Face, confirmed it trains successfully on a Colab Pro A100. There’s even a free Colab notebook for models up to 22B parameters. For folks who want to fine-tune models overnight without spinning up cloud infra or wrestling with Docker, this is a genuine leap forward. Nisten compared it to what LM Studio did for local inference — making something that used to require deep expertise suddenly accessible to anyone. I think that comparison is spot on, and I want to get Daniel and the Unsloth team on the show to dig into this properly.

This Week’s Buzz: W&B iOS App & The Overthinking Paradox

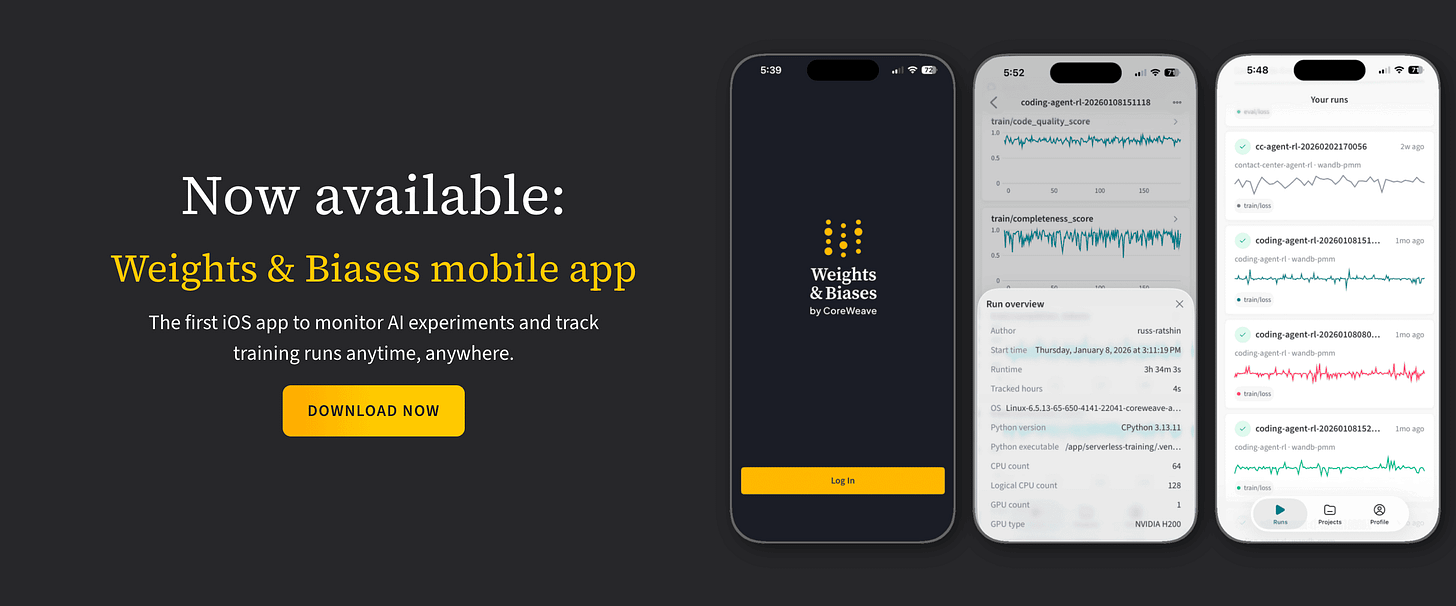

The iOS App is Finally Here (app store)

Okay, I’m going to do a quick applause. 👏

The most requested feature in Weights & Biases history is now live: the W&B iOS mobile app. If you’ve ever kicked off a training run overnight and woken up to find it crashed at hour two without knowing about it until morning, you understand exactly why people have been begging for this. Live metrics, loss curves, KL divergence — all right on your phone. And native push notifications for alerts! The second your run fails or a custom metric crosses a threshold, you get a notification on your phone.

Please give us feedback through the app, the iOS team is actively building on top of this. Get it on the App Store and let us know what you need.

WolfBench insight: More Thinking ≠ Better Agents

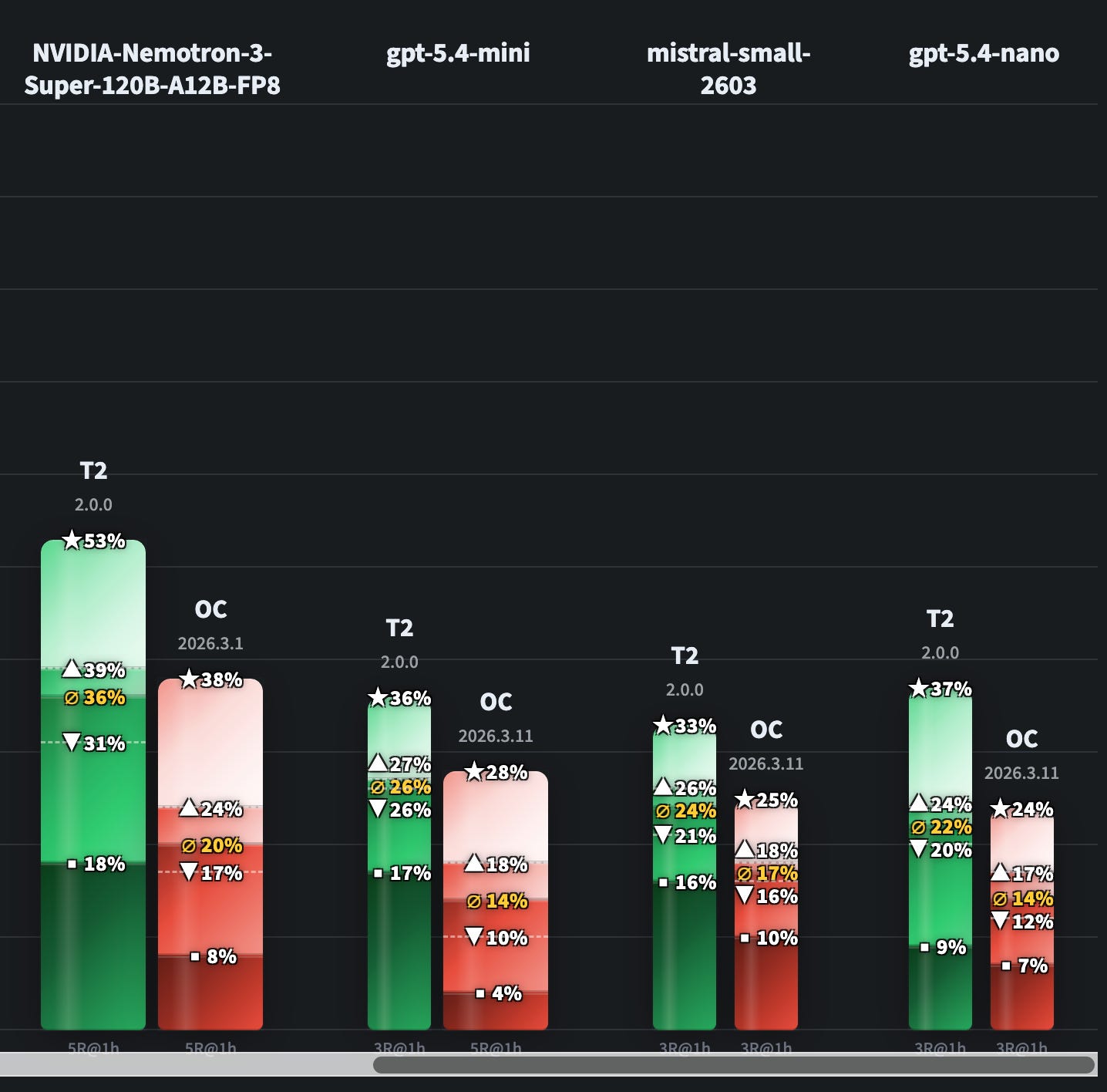

This is one of the more counterintuitive findings we’ve surfaced from the W&B + Wolf Bench collaboration, and Wolfram laid it out really clearly.

He tested Opus 4.6 and GPT-5.4 across different thinking/reasoning effort levels inside the Terminal Bench 2.0 agentic benchmark framework — using both the default Terminus 2 harness and the OpenClaw agent framework. For GPT-5.4, the pattern was exactly what you’d expect: higher reasoning effort gets better results. At extra-high, it hit 71% with 85% ceiling on tasks it could solve.

For Opus 4.6, though? Turning it up to the maximum thinking level made it significantly worse. From 71% on standard settings all the way down to 59% on max reasoning. It lost tasks it had been reliably solving before. Wolfram dug into the traces in Weave and found out why: the model was overthinking. In an agentic benchmark where you have a one-hour time limit per task, spending ten minutes reasoning about what terminal command to try — and then getting an error — and then spending another ten minutes reasoning about it — is catastrophically inefficient.
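The time-budget math is simple enough to sketch. The one-hour limit and the ten minutes of reasoning per step are the figures from above; the 30-second comparison and the zero default for command run time are illustrative assumptions, not numbers from the traces.

```python
def max_attempts(budget_min: float, think_min: float, run_min: float = 0.0) -> int:
    """How many think-then-act cycles fit inside the task time budget."""
    return int(budget_min // (think_min + run_min))


# One-hour task limit, ten minutes of reasoning before every command.
print(max_attempts(60, 10))   # 6

# Same limit with a hypothetical 30 seconds of reasoning per command.
print(max_attempts(60, 0.5))  # 120
```

Six tries per task leaves almost no room to recover from a failed command, while a faster loop gets many more chances to read the error and adjust.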

We’ll keep you up to date with more alpha from our bench efforts! Stay tuned and check out wolfbench.ai

Voice & Audio

xAI relaunched the Grok Text-to-Speech API (try it)

It’s actually a pretty full-featured release right away. Multiple voices, expressive controls, WebSocket streaming, multilingual support, and the whole platform feel suggests xAI is very much trying to build a serious multimodal API stack, not just throw out a toy demo.

The inline control tags are the fun part. You can embed pauses, laughter, whispers, breathing cues, all that. Those controls matter a lot for agents because the difference between “reads text out loud” and “feels usable in a voice interaction” usually lives in those details. As you can see in the video.. it’s.. not perfect.. yet? but pretty fun!

But the thing I personally had the most fun with this week was Fish Audio. We didn’t get to cover it properly last week, and when I played with it more this week, I came away really impressed. It’s fast, expressive, open source, and the voice control vibe is genuinely cool.

My favorite moment was not even a benchmark thing. I used Fish Audio with an agent setup to make a character voice inspired by Project Hail Mary, then had my kid talk to it. And the result was weirdly magical. If you remember the audiobook of Project Hail Mary, Fish Audio was able to get the voice juuuust right, and Opus via OpenClaw obliged with a great skill to talk like Rocky. I won’t post this for obvious copyright reasons, but I showed it at the end of the live show.

Parting thoughts: I was hoping for a quieter week as I was sick, but it didn’t materialize. I should stop hoping for quiet weeks, I think. After all, this is how the singularity starts: faster and faster developments, models that train themselves, and every company becoming an agentic company.

We’ll keep you posted on the most important breakthroughs, cover breaking news and bring interesting folks to the show as guests.

Thank you for reading, see you next week 👋

ThursdAI - Mar 19, 2026 - TL;DR

TL;DR of all topics covered:

Hosts and Guests

Alex Volkov - AI Evangelist @ Weights & Biases (@altryne)

Co Hosts - @WolframRvnwlf @yampeleg @nisten @ldjconfirmed @ryancarson

Big CO LLMs + APIs

Anthropic makes Opus 4.6 with 1M context the default in Claude Code - at the same price (X)

OpenAI drops GPT-5.4 mini and nano, optimized for coding, computer use, and subagents at a fraction of flagship cost (X, Announcement, Announcement)

Xiaomi - Omni modal and language only 1T parameters - MiMo (X)

Google AI Studio gets a full-stack vibe coding overhaul with Antigravity agent, Firebase integration, and multiplayer support (X, Blog, Announcement)

MiniMax M2.7: the first self-evolving model that helped build itself, hitting 56.22% on SWE-Bench Pro (X, X, Announcement)

Cursor launches Composer 2, their first proprietary frontier coding model beating Opus 4.6 at a fraction of the cost (X, Blog)

Open Source LLMs

Mamba-3 drops with three SSM-centric innovations: trapezoidal discretization, complex-valued states, and MIMO formulation for inference-first linear models (X, Arxiv, GitHub)

H Company releases Holotron-12B, an open-source hybrid SSM model for computer-use agents that hits 8.9k tokens/sec and jumps WebVoyager from 35.1% to 80.5% (X, X, HF, Blog)

Hugging Face’s Spring 2026 State of Open Source report reveals 11M users, 2M models, and China dominating 41% of downloads as open source becomes a geopolitical chess board (X, Blog, X, X)

Unsloth launches open-source Studio web UI for local LLM training and inference with 2x speed and 70% less VRAM (X, Announcement, GitHub)

Astral (Ruff, uv, ty) joins OpenAI’s Codex team (Announcement, Blog, Charlie Marsh)

Mistral Small 4: 119B MoE with 128 experts, only 6B active per token, unifying reasoning, multimodal, and coding under Apache 2.0 (X, Blog, HF)

Tools & Agentic Engineering

NVIDIA GTC: Jensen Huang declares “Every company needs an OpenClaw strategy,” announces NemoClaw enterprise platform (X, TechCrunch, NemoClaw)

OpenAI ships subagents for Codex, enabling parallel specialized agents with custom TOML configs (X, Announcement, GitHub)

Manus (now Meta) launches ‘My Computer’ desktop app, bringing its AI agent from the cloud onto your local machine for macOS and Windows (X, Blog)

This Week’s Buzz

Weights & Biases launches iOS mobile app for monitoring AI training runs with crash alerts and live metrics (X, Announcement)

GPT 5.4 went from worst to best on WolfBenchAI after an OpenClaw config fix exposed a max_new_tokens bottleneck (X, X, X)

Voice & Audio

xAI launches Grok Text-to-Speech API with 5 voices, expressive controls, and WebSocket streaming (X, Announcement)

AI Art & Diffusion & 3D

NVIDIA DLSS 5 is making waves with a new generative AI filter (Blog)