Hey, Alex here, I’ll try to catch you up, but it’s one of the more intense weeks in AI in recent memory.

Here’s the TL;DR - OpenAI dominates across the board this week! They finally launched “spud”, calling it GPT 5.5 (and 5.5 Pro), and it’s SOTA on most things, nearly matching the mysterious Claude Mythos, except this one is released and we can actually use it (we tested it extensively).

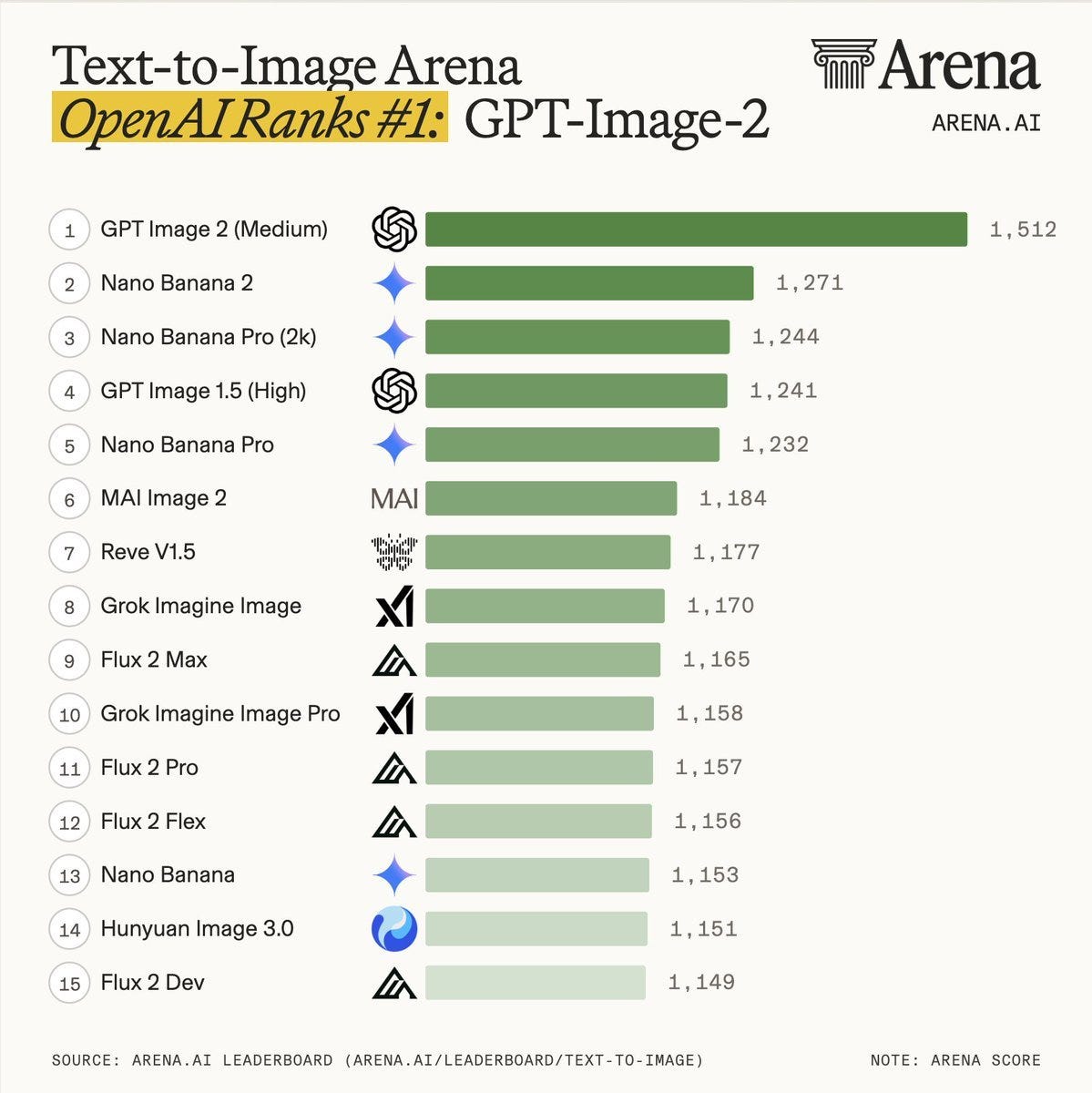

OpenAI also took the crown in image generation with the incredible GPT-image-v2 release, beating Nano Banana 2 and Pro by a significant margin. The images are incredible: this model can generate working QR codes and 360 images, it’s quite bonkers. Codex was updated with Computer Use (which I told you about last week), an in-app browser and a bunch of other tools that match GPT 5.5 intelligence.

Meanwhile, Anthropic launched an incredible research preview of Claude Design, finally admitted that Claude was dumb and reset quotas across the board, all while breaking the trust of the community by removing Claude Code from the Pro plan.

We’ve also got great open source updates, Kimi K2.6 and Qwen 3.6 27B are both great performers!

We were live on the stream for almost 4 hours today waiting for GPT 5.5 and finally got it and tested it live on the show + had Peter Gostev on from Arena who had early access and shared with us his insights. Let’s get into it!

OpenAI’s GPT 5.5 is here - SOTA AI intelligence you can actually use (Release Blog)

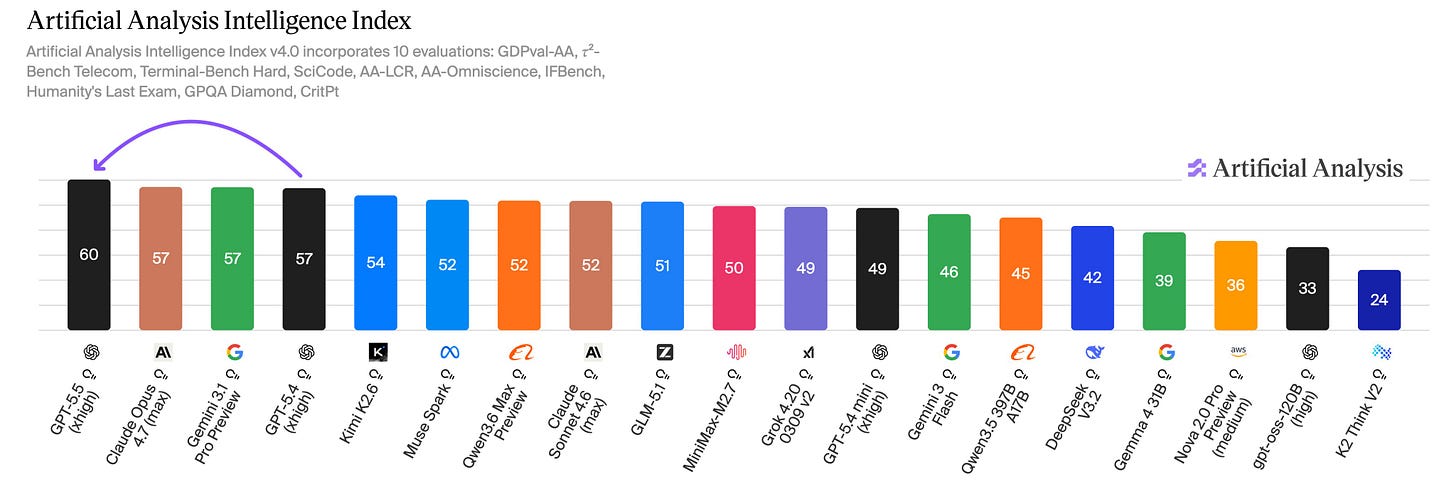

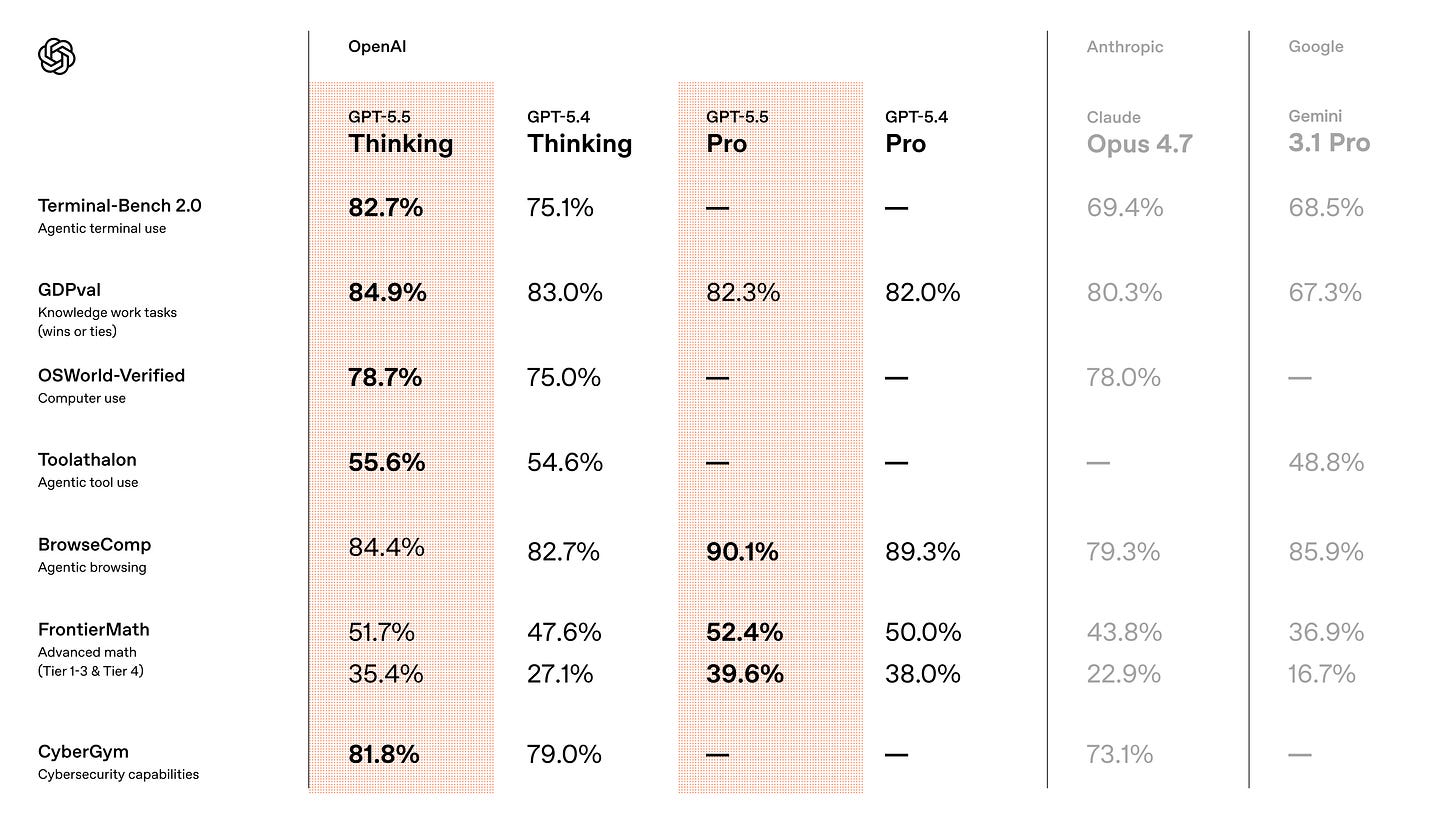

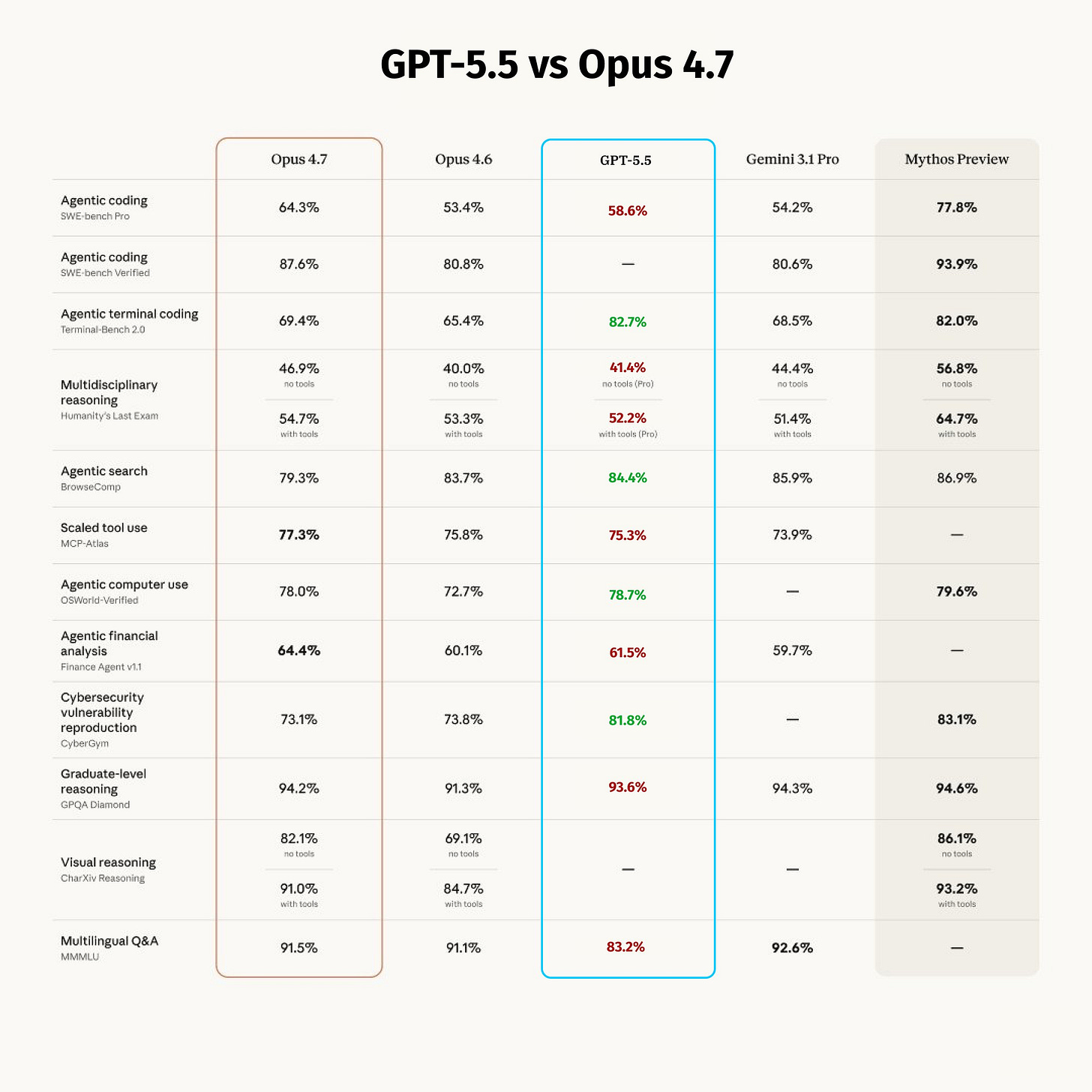

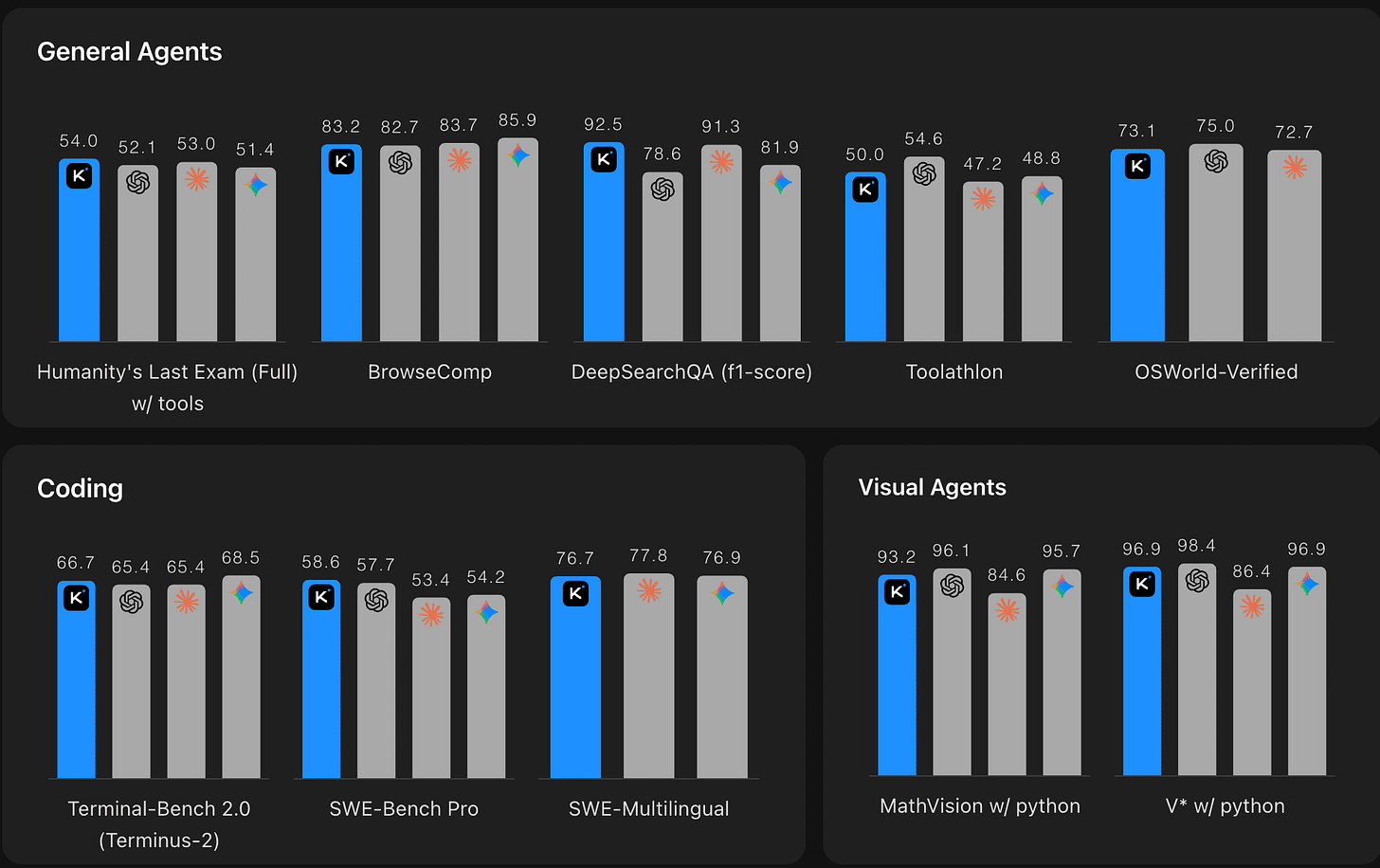

OpenAI finally gave us all access to their latest intelligence boost, GPT 5.5 thinking (and GPT 5.5 Pro). These models take the crown across many benchmarks, including TerminalBench (82.7%), GDPval (84%) and more. You can see the highlighted results in the image above. That said, it’s not uncommon for OpenAI to commit some chart crimes, so @d4m1n created a chart showing the full benchmarks, including the ones where GPT 5.5 is not beating Opus. As you can see below, it underperforms on Humanity’s Last Exam and scaled tool use.

But benchmarks don’t tell the full story. GPT 5.5 uses significantly fewer tokens than 5.4, about 40% fewer. It’s also more expensive per token, but given the lower token usage, it nets out at roughly a 20% price increase, while being more intelligent and faster.
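To make that pricing math concrete, here is a back-of-the-envelope sketch. It uses only the two rough figures quoted above (about 40% fewer tokens, about 20% higher net cost). The per-token multiplier it prints is derived from those two numbers and is my inference, not an official price, and the function name is made up for illustration.

```python
def implied_price_multiplier(token_ratio: float, net_cost_ratio: float) -> float:
    """Per-token price multiplier implied by a token-usage ratio and a net-cost ratio.

    net_cost_ratio = token_ratio * price_multiplier, so we solve for the multiplier.
    """
    if token_ratio <= 0:
        raise ValueError("token_ratio must be positive")
    return net_cost_ratio / token_ratio


# Rough figures from the paragraph above (assumptions, not official pricing):
token_ratio = 1 - 0.40     # 5.5 uses ~0.6x the tokens of 5.4 for the same task
net_cost_ratio = 1 + 0.20  # the same task costs ~1.2x overall

multiplier = implied_price_multiplier(token_ratio, net_cost_ratio)
print(f"Implied per-token price: ~{multiplier:.1f}x the 5.4 price")
```

In other words, if both rough figures hold, the per-token price would have to be about double for the net cost to land around 20% higher.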

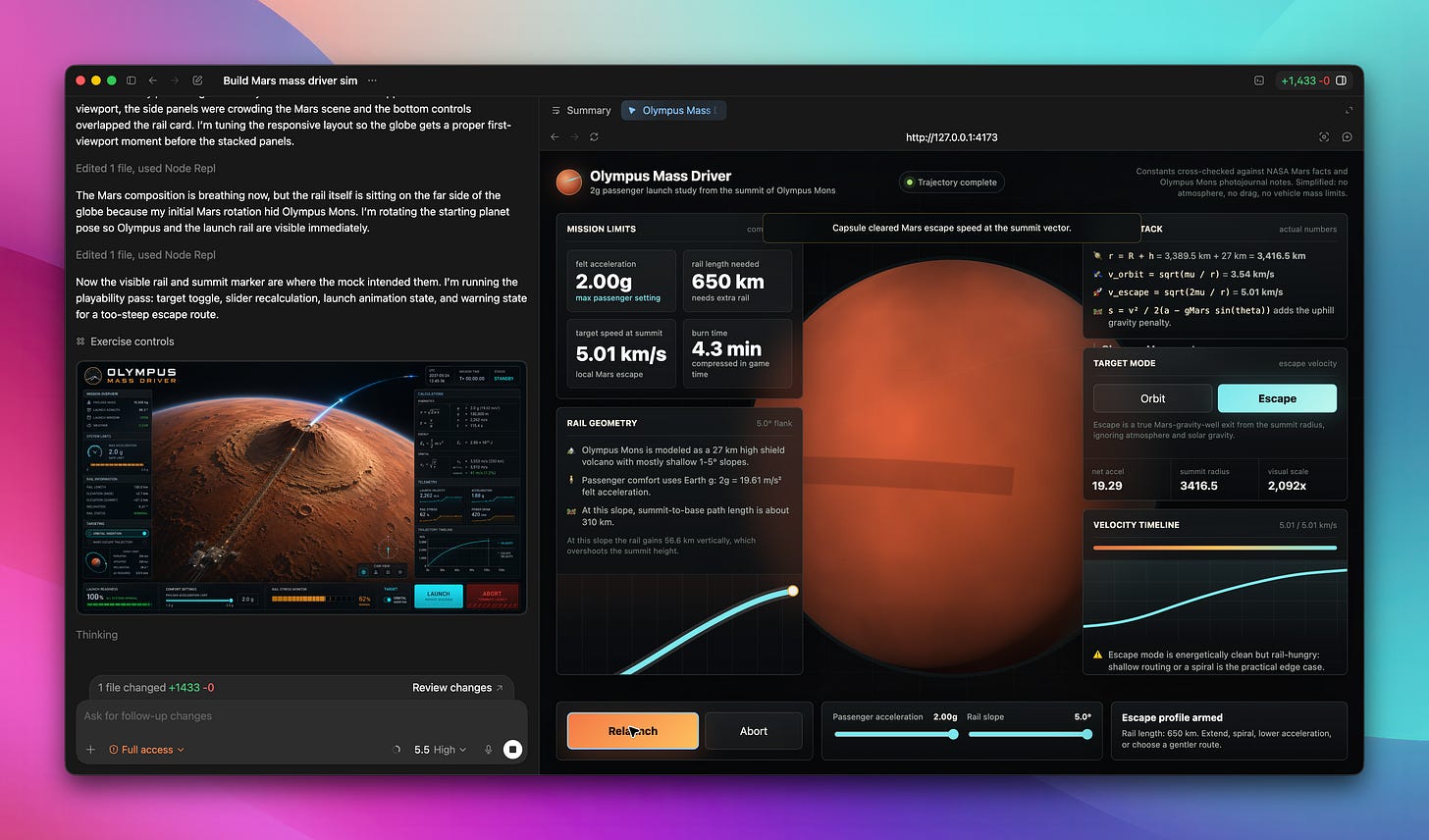

Tons of folks who had early access are reporting the same thing: this model excels at long-running tasks. Peter Gostev from Arena, who joined our live stream, showed us an incredible demo that ran overnight for over 8h! This model can work until the task is done, no longer pausing in the middle to ask for your input.

The real highlight is that, paired with the recent GPT-image-2 (which I’ll expand on later in this newsletter), GPT 5.5 becomes an excellent UI designer. This is a big area where Claude still has a moat and OpenAI is trying to catch up, and the real alpha now is to use both the image gen and 5.5 in tandem to create beautiful visuals and UIs.

The main caveat, after testing it quite a few times: this only works if you generate the image outside of the session that builds the actual UI. We tried a couple of times to do it in one session, and the resulting UI wasn’t remotely close to the generated image.

Only after sending the image to a completely fresh session and asking for a “pixel perfect” implementation did GPT 5.5’s output start to resemble the input image, rebuilding the whole UI with pixel perfect fidelity!

GPT Image v2 - SOTA thinking image model, finally beating Nano Banana (Blog, Live)

Like we said, OpenAI is dominating this week, and both releases are great models. In an apples-to-apples comparison, though, GPT-image-v2 is a much bigger jump over its predecessors than GPT 5.5 is!

According to Artificial Analysis, the jump in how many people prefer GPT-image-2 in blind tests compared to other models is the highest we’ve ever seen, over 250 points. And you can clearly see it in the generations as well.

Earlier this week, we did a live streaming session with Peter Gostev (from Arena) and a deep dive comparing this new model to GPT Image 1.5, Nano Banana and Grok Imagine, and it’s a clear winner across most categories.

Character consistency is immaculate, and high resolution imagery and instruction following are both so, so good that it’s a bit hard to explain in text.

Reasoning visual intelligence

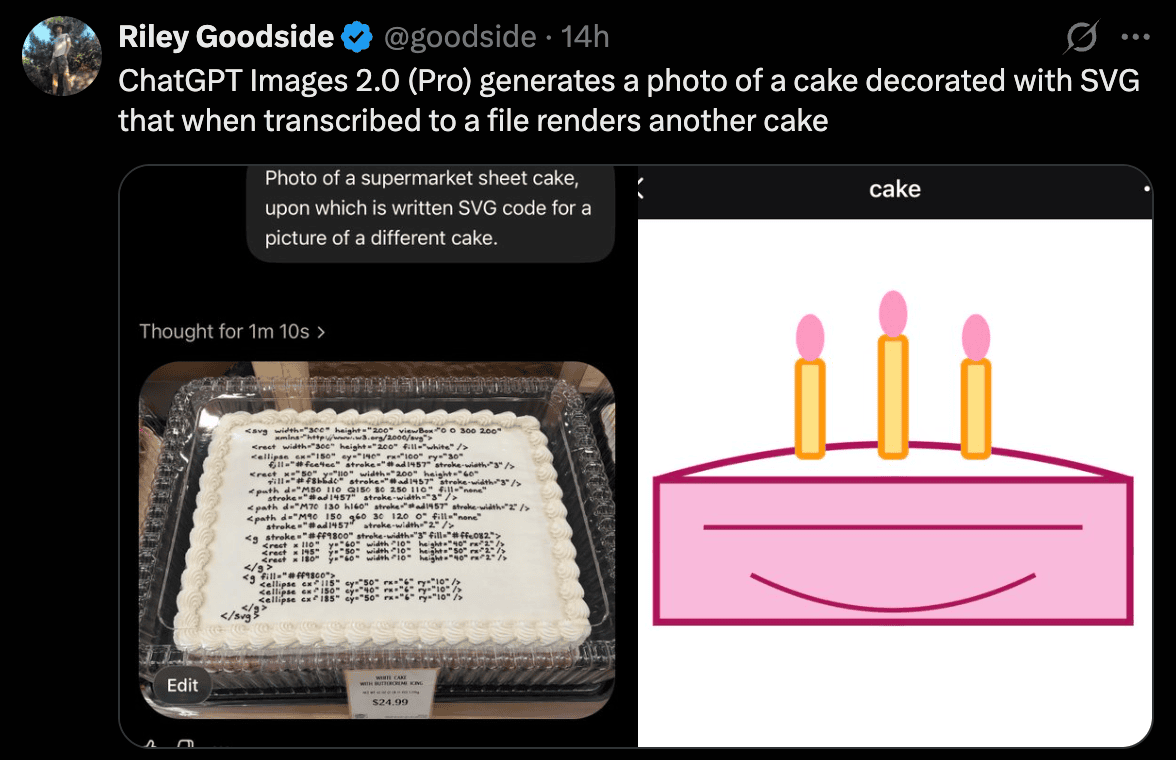

Like with Nano Banana, this model is likely based on a big GPT model. It’s no longer just diffusion: as you can see, it reasons! And apparently the more reasoning you give it (if you choose GPT Pro), the better it’ll be. The examples are indeed wild: the model can generate images of code that works, and generate functional QR codes and bar codes!

The craziest thing people figured out it can do is functional 360 imagery (equirectangular format). You can just ask the model to create a 360 image of a “scene” and then drop it into a 360 viewer!
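If you’re wondering why a flat image “just works” in a 360 viewer, here’s a minimal sketch of the equirectangular mapping. A full 360x180 panorama is twice as wide as it is tall, with the horizontal axis covering longitude and the vertical axis covering latitude. The function names are mine, and putting yaw 0 at the image center is an assumption (a common convention, but viewers differ).

```python
def is_equirectangular_size(width: int, height: int) -> bool:
    """A full 360x180 equirectangular image has a 2:1 aspect ratio."""
    return height > 0 and width == 2 * height


def direction_to_pixel(yaw_deg: float, pitch_deg: float,
                       width: int, height: int) -> tuple[int, int]:
    """Map a viewing direction to a pixel in an equirectangular image.

    yaw_deg:   -180..180, 0 is the image center (assumed convention)
    pitch_deg: -90 (straight down) .. 90 (straight up)
    """
    # Wrap yaw into [-180, 180) and clamp pitch into [-90, 90].
    yaw = ((yaw_deg + 180.0) % 360.0) - 180.0
    pitch = max(-90.0, min(90.0, pitch_deg))

    u = (yaw + 180.0) / 360.0   # 0 at the left edge, 1 at the right edge
    v = (90.0 - pitch) / 180.0  # 0 at the top edge, 1 at the bottom edge

    # Keep the result inside the image bounds.
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return x, y


print(is_equirectangular_size(4096, 2048))
print(direction_to_pixel(0.0, 0.0, 4096, 2048))
```

A quick 2:1 check like this is a cheap way to catch generations that look panoramic but won’t wrap cleanly in a viewer.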

Peter showed us on the show how he combined GPT 5.5 and Image v2 to create a sort of “street view” from a bunch of 360 images, and it blew our minds. He literally spun up an overnight GPT 5.5 task in Codex that planned out the Hanging Gardens of Babylon, generated hundreds of equirectangular images, stitched them into a walkable interface, and ran for 8+ hours without babysitting. A street view of a place nobody actually knows the look of, hallucinated from latent space. What a time.

Day one availability is wide: Figma, Canva, Adobe Firefly, fal.ai, and Microsoft Foundry all have it. Nano Banana dominated for what felt like an eternity in AI time (it was really only a few months 😅), and finally OpenAI has a proper answer.

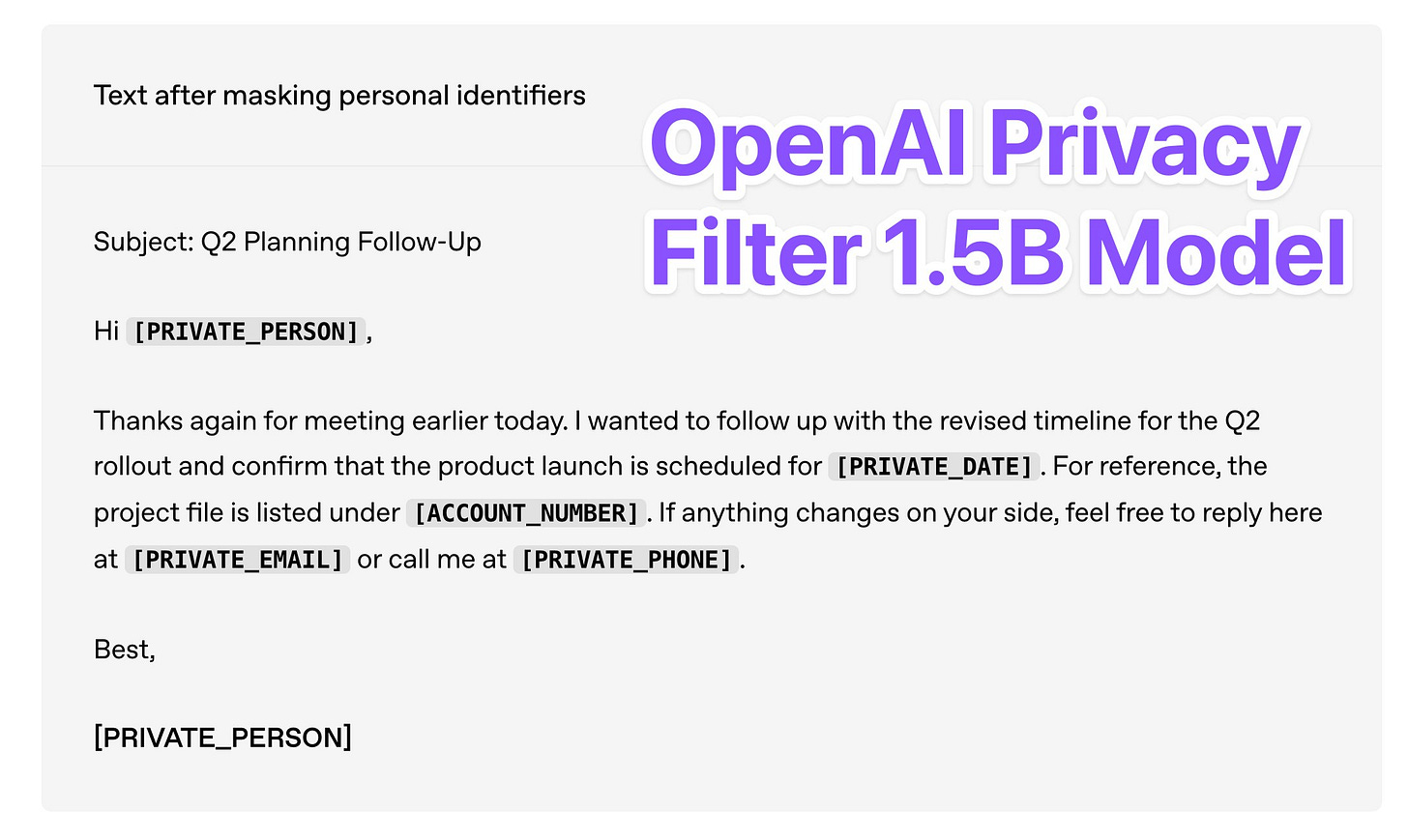

OpenAI is dropping models on HF - Privacy Filter, a 1.5B Apache 2.0 PII reduction model (X, HF)

I’ve told you they’ve been cooking this week! OpenAI open sourced a genuinely useful model called Privacy Filter. It has 1.5B parameters with only 50M active, small enough that it runs fully offline in your browser (check out this incredible web demo by our friend Xenova).

This model is specifically built to anonymize and filter out personally identifiable information (PII), things like names and addresses, but more importantly bank accounts and API keys!

This, in the era of agentic assistants, is extremely important, and I’m very happy that OpenAI is open sourcing here, specifically because, while it’s great out of the box, this model is also great for fine-tuning on your own data!

Pair this with something like CrabTrap, a new open source proxy with LLM as a judge for agents like OpenClaw, and you’re hardening your setup so that your private details won’t leak, even if someone manages to prompt inject your agent!

In any other week, CrabTrap would deserve a segment of its own. It is really a novel solution to the “AI agent can leak your creds” problem, created by the Brex CEO, as they run agents inside Brex. But this week is insane, so... you get a link and we move on 🙂

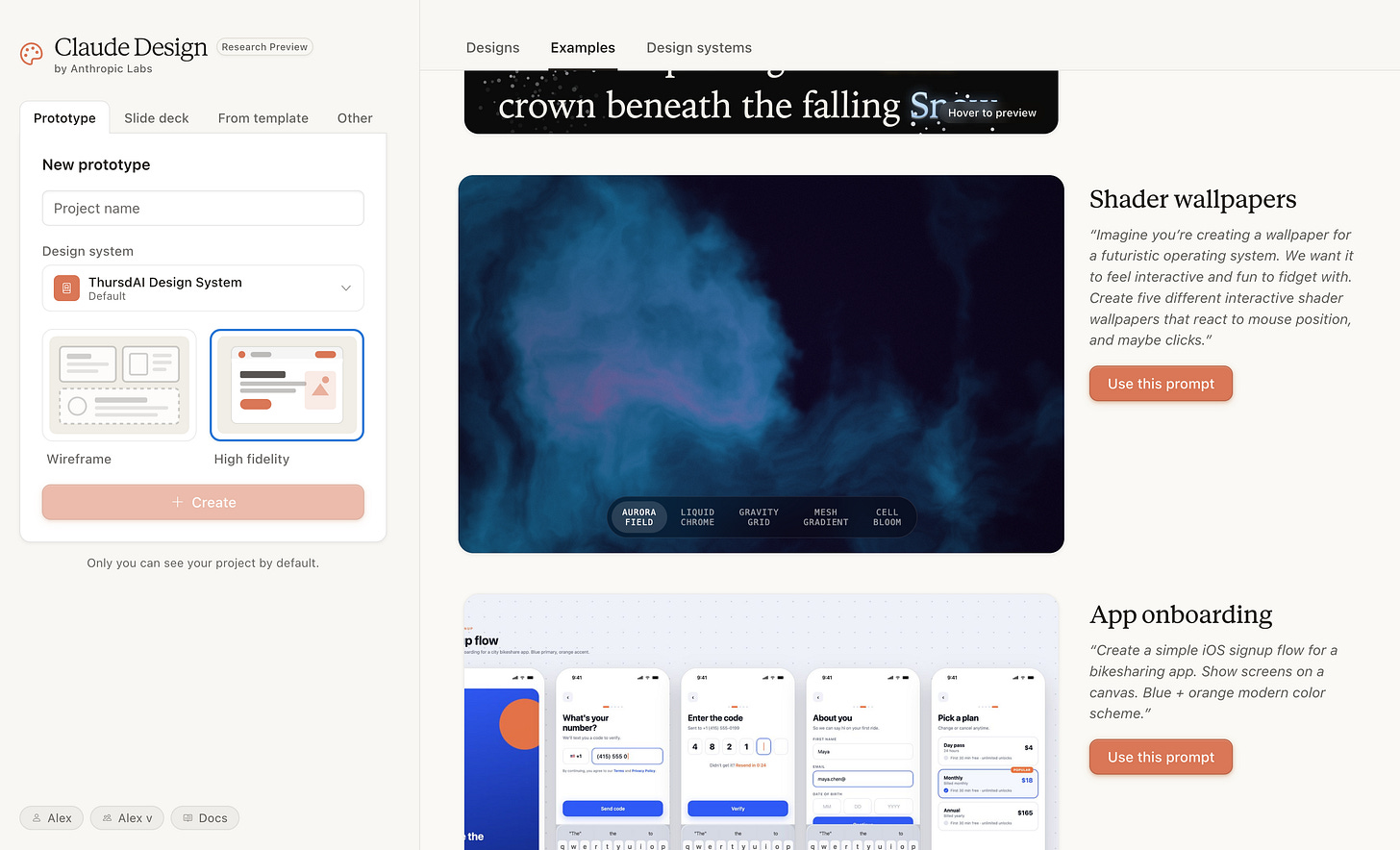

Claude Design - Anthropic’s Figma killer? (try it, deep dive)

This launched on Friday (come on Anthropic, why are you launching things on a Friday?!) and nearly tanked Figma stock (16% down since). It didn’t help that Mike Krieger, who runs product at Anthropic and co-leads Anthropic Labs, quit the Figma board just a few days before this release.

Claude Design is a new, separate interface for Claude, with its own usage meter, that exists only on web, and only for Max subs for now. We all know that Claude is great at frontend design, but this is an interface that wraps Claude with some incredible “designer like” tools: knobs to edit font sizes, and a point-and-click interface to highlight elements for Claude to fix.

The highlight for me, what broke my brain on the live stream, was the “talk to the design” feature, where you turn on the microphone, talk to Claude, and while you point, it “knows” what you’re pointing at!

So you can say “here, fix THIS thing” without saying what that thing is, and Claude will just fix it, by looking at where your cursor was at the time. This ... this feels like magic.

The huge unlock in Claude Design is the initial “brand guidelines” process, in which you ask Claude to create a holistic brand identity (based on your website code, screenshot, Figma file etc). After that, every new project can have that brand identity preserved, with the right fonts, colors, logos etc. I dropped in the show notes from this week and asked for an interactive infographic website using the brand guidelines.

This really does feel like a “new kind” of product. I’ve worked with designers before, and the interaction model with Claude Design feels very much like working with a designer, showing them what you like and don’t like. And like working with a designer, it’s expensive! Claude Design uses Claude 4.7 and buuurns through tokens! I tapped out my weekly quota in less than 4 projects!

Luckily, Anthropic this week admitted that they’ve dumbed down Claude, and reset the quotas, so I was able to show it on the live show.

This week’s Buzz — W&B LEET TUI gets Workspace mode

Our W&B LEET TUI went viral a couple weeks back (local terminal UI for watching run stats, metrics, and system health - built for folks training on remote boxes who don’t want to alt-tab to a browser), and the team shipped a big follow-up this week: workspace mode.

Multi-run workspaces live, metadata filtering, system metrics (GPU stats included), console logs, and (my favorite) images rendered directly in the terminal. The whole web workspace experience, now in your SSH session.

Demo video and full announcement here. pip install wandb, give it a spin.

Open Source AI

Kimi K2.6 - Opus at home (if you have a data center) (X, HF, Live)

Moonshot AI dropped Kimi K2.6 this week, a 1 trillion parameter MoE with 32B active, 384 experts, 256K context, under a modified MIT license. The headline numbers are wild: SWE-Bench Pro at 58.6 (beating GPT-5.4 and Opus 4.6), BrowseComp at 83.2, HLE with tools at 54.0.

Wolfram ran it on his own Wolf Bench and it came out as the best open source model he’s ever tested — essentially matching Sonnet 4.5 on terminal bench with the Terminus agent harness, and beating Opus 4.6 inside OpenClaw. That’s a crazy sentence to write.

Pricing on Cloudflare Workers AI is $0.95/M input, $4/M output — roughly 15x cheaper than Opus. If you have the budget to run it.
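Here’s a tiny sketch for turning those per-million prices into a per-request number. The two rates are the ones quoted above. The token counts in the example are made up for illustration, so plug in your own agent’s numbers.

```python
# Rates quoted above for Kimi K2.6 on Cloudflare Workers AI (USD per 1M tokens).
INPUT_USD_PER_M = 0.95
OUTPUT_USD_PER_M = 4.00


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request given its input and output token counts."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


# Hypothetical agentic coding turn: big context in, lots of thinking tokens out.
cost = request_cost_usd(input_tokens=200_000, output_tokens=50_000)
print(f"~${cost:.2f} per turn")
```

Note how output tokens dominate quickly at roughly 4x the input rate, which matters for a model that generates a lot of tokens per task.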

Now, the calibrated take: Yam showed us a report from @BrightMind where Kimi failed pretty badly at rendering a 3D lava lamp while every other frontier model nailed it. Artificial Analysis has Kimi at #4 on their intelligence index (54) behind the three frontier labs. So it’s definitely a bit benchmaxxed on agentic coding, but it’s also genuinely good at agentic coding, which is the use case most people care about right now. My own test: it overthinks a lot, generates a lot of tokens (which hits your wallet even at those low prices) and I wasn’t very happy with it during my live test. The frontend design of it is meh, and it did feel benchmaxxed.

Bottom line: if you’re building an OpenClaw setup and you want Opus-adjacent quality without paying Opus prices, Kimi K2.6 could be the move. They also shipped Kimi Code CLI as a companion to Claude Code / Codex CLI.

Alibaba drops Qwen 3.6 27B - (Actually Sonnet at home)

This one is special because it’s genuinely, actually runnable at home. It’s a dense 27B model under Apache 2.0, and it beats Alibaba’s own ~400B Qwen3.5 flagship MoE on every major coding benchmark. SWE-bench Verified 77.2, Terminal-Bench 2.0 at 59.3 (matching Opus 4.5), SkillsBench 48.2 (beating Opus 4.5 at 45.3).

With Unsloth’s dynamic GGUFs, this runs on 18GB of RAM. A used RTX 3090 under $1000 or a 24GB Mac Mini and you’re running something genuinely comparable to Sonnet 4.5 at home. Nisten has been daily-driving it and said people are calling it “Sonnet 4.5 at home” - it’s not drop-in replacement perfect (it struggled with hard git merges in his testing), but for non-critical work? Absolutely there.
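For a rough sanity check on why a 27B dense model fits on a 24GB card, here’s a weights-only memory estimate. The bit-widths below are illustrative assumptions, not Unsloth’s actual dynamic quantization recipe (which mixes precisions per layer), and real usage adds KV cache and runtime overhead on top.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Weights-only memory in GB (1 GB = 1e9 bytes), ignoring KV cache and overhead."""
    if num_params < 0 or bits_per_weight <= 0:
        raise ValueError("num_params must be >= 0 and bits_per_weight > 0")
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 27e9  # dense 27B model

# Illustrative bit-widths only; actual GGUF quants vary per layer.
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: ~{weight_memory_gb(PARAMS, bits):.1f} GB")
```

At around 4 bits per weight, the weights alone come in well under 18GB, which leaves room for context on a 24GB GPU or a 24GB Mac Mini.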

Natively multimodal, 262K context extendable to 1M. There’s also a sibling, Qwen3.6-Max-Preview, available on their API if you want the frontier version.

Great great open source model!

Quick hits

A bunch of stuff worth knowing about that didn’t get full segments:

Google Gemini Deep Research + Deep Research Max on Gemini 3.1 Pro (announce) — autonomous research agents that navigate web + your custom docs. Plus native chart generation and MCP support in the API.

Google Gemini Enterprise Agent Platform (launch) — evolution of Vertex AI for enterprise agent builders.

ChatGPT Agents “Hermes” leak — an agents builder/studio with templates and Slack integration incoming per @btibor91.

Codex now has 4M users per the team, and they open-sourced Euphony, a visualizer for Codex session logs.

SpaceX / Cursor $60B deal — the structure is either a $60B acquisition or a $10B collaboration experiment. The thesis being whispered: are developer traces the missing training ingredient for frontier coding models? Very spicy, very Elon.

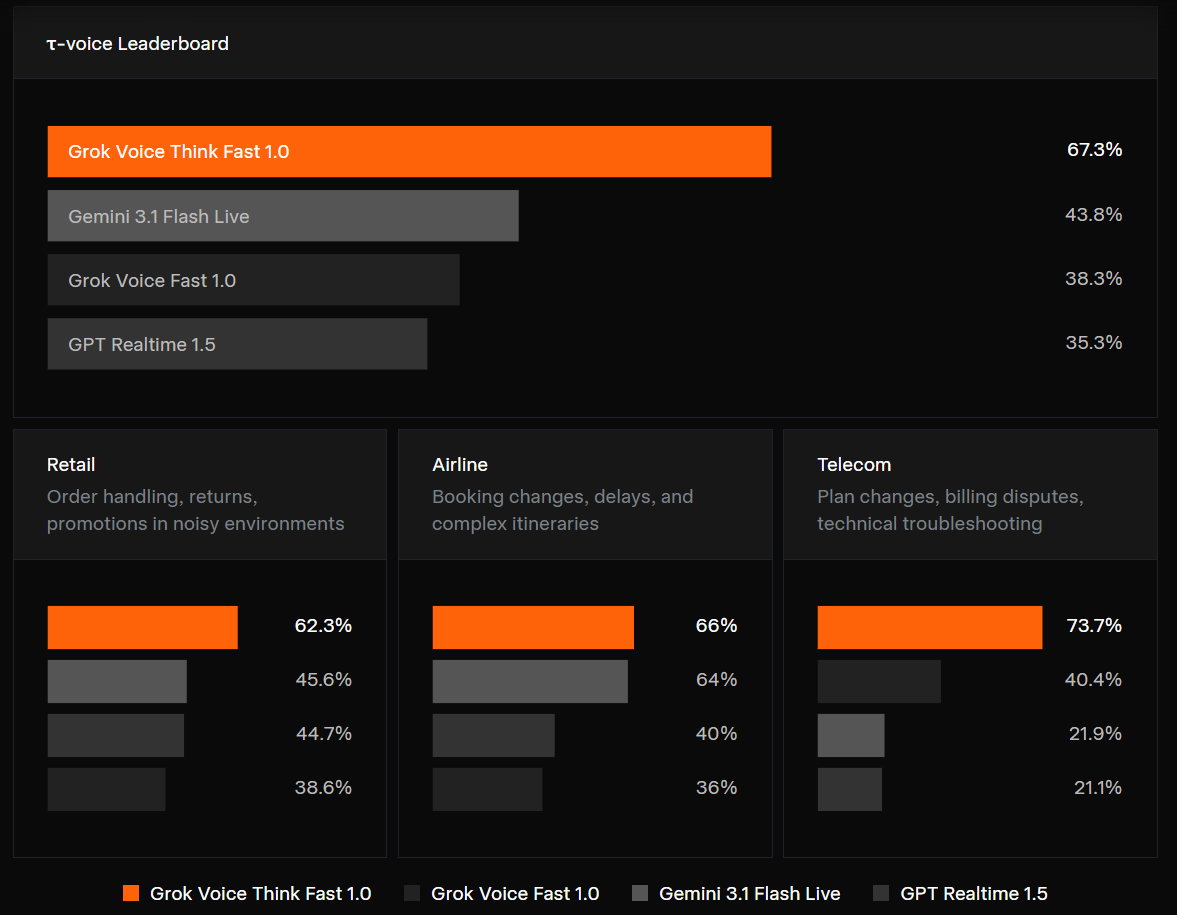

Speaking of Elon, xAI released Grok-Voice-think-fast 1.0 (Blog) - it’s their fully end-to-end omni model that takes customer calls and is already deployed at scale at Starlink! A very interesting contender to the Gemini Flash Live model we covered before. The benchmarks look insanely good.

Phew

I said at the top this was one of the more intense weeks in AI in recent memory, and I genuinely mean it. We were live on the stream for almost four hours. I’ve done five livestreams since last Thursday. GPT 5.5 dropping mid-show was the cherry on top. Between Codex becoming ambient, GPT Image v2 rewriting the ceiling for generative visuals, Claude Design moving a stock price, two incredible open source drops in Kimi and Qwen, and OpenAI quietly re-committing to open source — this was a lot.

If you’re feeling the FOMO, you’re not alone. We live this stuff and I still feel it. My ask this week: bookmark the livestreams, play with GPT Image v2 (it’s genuinely the most fun I’ve had with an image model in a long time), and if you’re deploying agents in production, go read the CrabTrap source code this weekend.

See you next Thursday — same place, same time, probably another launch that disrupts us mid-show. That’s the world now 🤷

ThursdAI - Apr 23, 2026 - TL;DR

Hosts and Guests

Alex Volkov - AI Evangelist & Weights & Biases (@altryne)

Co-Hosts - @WolframRvnwlf @yampeleg @nisten @ldjconfirmed @ryancarson

Peter Gostev (@petergostev) - Arena AI

Big CO LLMs + APIs

OpenAI launches GPT-5.5 and GPT-5.5 Pro — SOTA across the board (Blog, Livestream)

OpenAI GPT-Image-2 — biggest Arena Elo jump ever, thinking mode for images (X, Eval site, Livestream)

OpenAI Codex — Background Computer Use + Chronicle (screen memory), hits 4M users (Chronicle)

GPT-5.5 pre-launch leak in Codex dropdown (X)

Anthropic Claude Design — research preview on Opus 4.7, Figma -7% (X)

Anthropic resets all Claude quotas, admits degradation, allows OpenClaw CLI back (X)

Anthropic ARR crosses $30B

Google Gemini Deep Research + Deep Research Max on Gemini 3.1 Pro (X)

Google Gemini Enterprise Agent Platform (X)

ChatGPT Agents “Hermes” leak — builder/studio + Slack integration (X)

OpenAI clinician/medical model + workspace agents released

Open Source LLMs

Tools & Agentic Engineering

This week’s Buzz - Weights & Biases

W&B LEET TUI goes workspace mode — multi-run, GPU metrics, images in terminal (X)

Voice & Audio

StepAudio 2.5 TTS — natural-language control of emotion and delivery (X)

Deals & Industry

SpaceX/xAI <> Cursor — $60B acquisition or $10B collaboration structure