Hey yall, Alex here, writing this from sunny London, at the first ever AI Engineer conference in Europe!

What a show we have for you today! First, let me catch you up on what’s important: Anthropic this week announced a whopping $30B ARR, up from $19B in February, while also telling us about Claude Mythos Preview, their next-gen HUGE model that they won’t release to the public (yet?) and that finds crazy vulnerabilities in existing codebases. Apparently OpenAI will follow up with a similar non-public model soon.

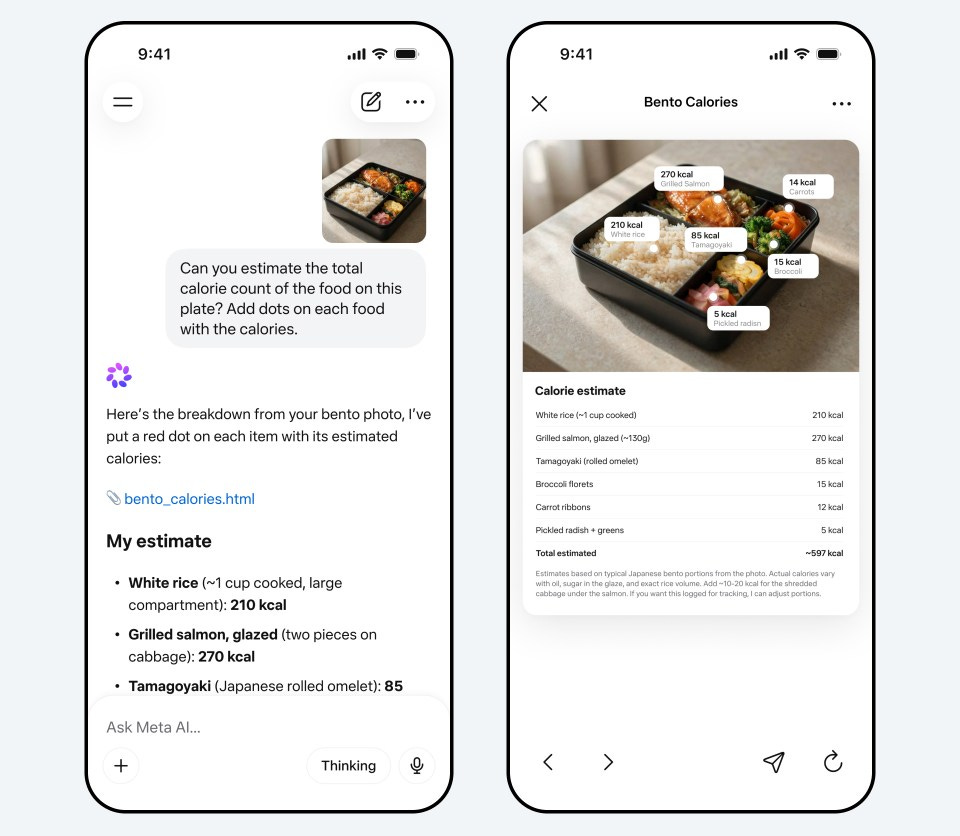

Meta Superintelligence Labs, led by Alexandr Wang, finally showed what they were working on: Muse Spark, the smaller of their upcoming models, built on completely new infrastructure (MSL announcement, Simon Willison’s deep dive on the 16 hidden tools).

In other news:

Z.AI finally released GLM 5.1 as open source (HF weights), Seedance 2.0 is finally available in the US on Replicate, OpenAI is testing GPT-image-2 on LM Arena under codenames, HappyHorse from Alibaba takes the video crown, and Milla Jovovich (5th Element, Resident Evil) released an agentic memory plugin called MemPalace (Ben Sigman’s transparent correction thread is worth reading).

We had 5 guests on the show today. We kicked off with @swyx, the founder of AI Engineer and host of Latent Space. We then chatted with @petergostev from Arena (formerly LMArena) about Mythos and the compute wars, then Vincent Koc, the second most prolific contributor to OpenClaw, then our friends VB from OpenAI and Omar from DeepMind, both previously at HuggingFace. This is a busy, busy show, and given the time zones, I unfortunately don’t have time for a full weekly writeup, but as always, I will share the raw notes and post the video (lightly edited).

AI Engineer - London

ThursdAI has come a long way since the first AI Engineer conference, and many who read this don’t know that it was my big break. Swyx invited me to cover the first AIE in San Francisco in 2023, and I remember being in an Uber to the airport when the driver asked me what I do, and I, for the first time, said “I host a podcast”. I (and ThursdAI) owe a lot to Swyx and the AIE team, and it’s been incredible to see how big they’ve grown and how many great speakers this event hosts!

The term AI Engineer has drifted in those 3 years, but so has the term Software Engineer. Swyx predicted this nearly 3 years ago. What I don’t think he predicted is that all engineers are now AI Engineers, and this includes domains like Agents (OpenClaw), Context and Harness Engineering, Evals and Observability, and Voice & Vision, all of which are tracks at this conference.

I was really surprised to see how many of the talks/speakers here are native to London (after all, DeepMind is from here, and OAI, Anthropic, and Meta have offices here). The projects behind the latest boom in agents, OpenClaw and Pi, are Europe-based as well, and they joined the AI Engineer stage.

Oh, and there’s also a Giant Inflatable Claw at the entrance, yup, for pictures and vibes, and to show off how quickly OpenClaw took over the mind-share.

Anthropic announces $30B ARR and Mythos, their next model, will not be released to the public.

The thing that everyone will tell you is that Anthropic is on a roll, and this is obviously connected to their upcoming IPO this year. We’ve been covering many issues on their part, but this week we saw them posting about a HUGE increase in ARR, from $19B in February to $30B in April, passing OpenAI at $25B. That last comparison is kind of undercut, though, because the two companies report ARR differently: per The Information, OpenAI apparently only counts their cloud revenue from Microsoft.

The growth is undeniable though, and so is the unprecedented release announcement: Claude Mythos Preview, which was rumored for a bit and has now been announced properly. With Project Glasswing, Anthropic has announced that this model is SO good at cybersecurity and finding bugs in code that they cannot share it with the public; through Glasswing they will share it with companies like Microsoft, Linux, CrowdStrike and a bunch of others to harden their security.

This is it folks, this is the first time a model was “announced” but deemed too risky to release. Now, is it truly “too risky”? Previously, folks thought that DALL-E was too risky, or that voice-cloning tech was too risky, and now it’s everywhere. The capabilities catch up, even in open source.

But the facts are, Anthropic says they’ve found a 27-year-old bug in OpenBSD (famously very secure), and that this model is very, very good at connecting the dots between several seemingly innocuous bugs and stringing them together into one coherent exploit.

This is, indeed, scary. Just last week, one of the top security researchers in the world, Nicholas Carlini, now at Anthropic, gave a talk at Black Hat showing off these results and saying that these models, since December and definitely more recently, have passed him as a security engineer. If you haven’t seen this talk, watch it, then try to estimate if Anthropic did the right thing by only releasing this model to enterprises first.

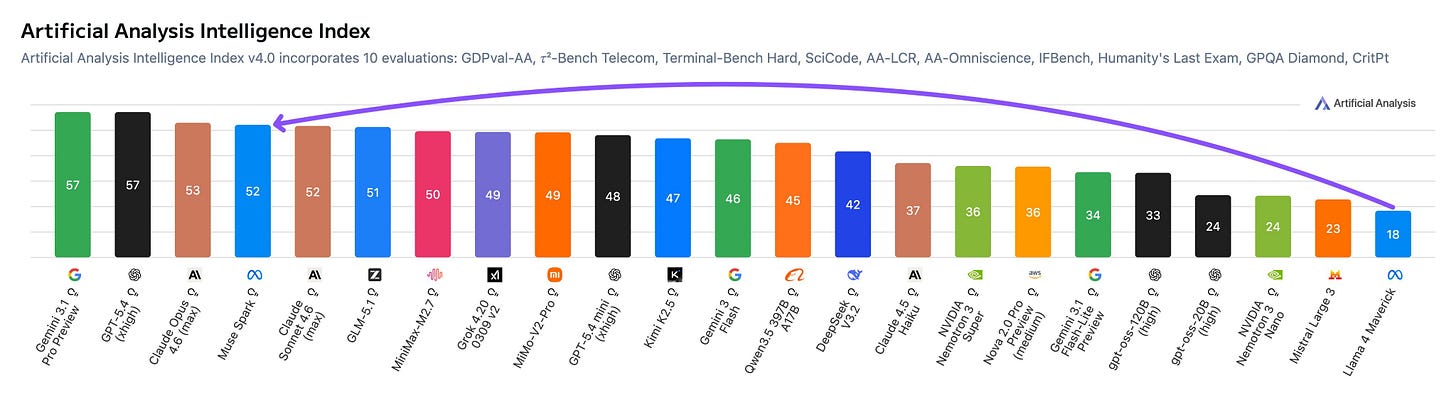

But on the show, Peter Gostev from Arena gave me a take on this that I haven’t been able to shake. Peter pulled up his Compute Wars chart live on the show — and the picture is that OpenAI is way ahead of Anthropic on compute, with Anthropic only recently getting a noticeable bump (which lines up suspiciously well with Mythos being trainable in the first place). His read: “it sounds cooler to say it’s too risky to release than ‘we can’t serve it.’” The official partner pricing is $25 / $125 per million tokens — 5x Opus 4.6 — but if you don’t have the GPUs to serve it broadly, the price doesn’t matter. In the year of the IPO, the company that cannot serve a model says the model is too dangerous to serve. Make of that what you will.
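
To make the “5x” concrete, here is a quick back-of-the-envelope sketch. The Mythos prices are the partner numbers quoted above; the Opus 4.6 prices are an assumption derived from that 5x ratio ($5 / $25 per million tokens), and the workload size is entirely made up for illustration.

```python
# Prices in USD per million tokens: (input, output).
MYTHOS_PARTNER = (25.0, 125.0)  # partner pricing quoted above
OPUS_4_6 = (5.0, 25.0)          # assumption: implied by the "5x" ratio


def cost_usd(prices, input_tokens, output_tokens):
    """Cost of a workload given per-million-token prices."""
    in_price, out_price = prices
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical workload: 2M input tokens and 500K output tokens.
workload = (2_000_000, 500_000)

mythos = cost_usd(MYTHOS_PARTNER, *workload)
opus = cost_usd(OPUS_4_6, *workload)

print(f"Mythos: ${mythos:.2f}, Opus 4.6: ${opus:.2f}, ratio: {mythos / opus:.1f}x")
```

Because both the input and output prices scale by the same factor, the ratio stays at 5x no matter how the workload splits between input and output tokens.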

This also reframes the whole rate-limit drama with OpenClaw. Anthropic didn’t ban OpenClaw — I want to be very clear about this because the discourse went sideways. What they did is they made it significantly more expensive for Max-tier subscribers to use Opus through OpenClaw, which pushed a lot of people over to GPT-5.4 via Codex. Same root cause: they’re out of compute. The freshly announced Anthropic + Google TPU deal (Google already owns ~10% of Anthropic) is them trying to fix this — though as Peter noted, it’s pretty wild that Google is propping up a direct competitor to their own DeepMind team. Same pattern as their original $2B Anthropic investment ending up propping AWS Bedrock against Google Cloud. Big Google contains multitudes.

Meta Superintelligence Labs ships Muse Spark — Llama is dead, long live Muse

Llama is dead, long live Muse. This week Meta finally showed what the very expensive Meta Superintelligence Labs under Alexandr Wang has been cooking, and the answer is Muse Spark: the smaller of their new model family, built on an AI stack rebuilt from scratch in just 9 months. Nine months is wild for that kind of overhaul, and the headline number people are quoting is that they reach Llama 4 Maverick capability with over 10x less compute.

Spark is intentionally small and latency-optimized — it’s not trying to be the biggest, it’s trying to be the first step on Meta’s new scaling ladder. But the benchmarks in certain areas are nuts: 86.4 on CharXiv Reasoning (beats Opus, Gemini, GPT-5.4), and the one that really got me — 42.8 on HealthBench Hard vs Opus at 14.8 and Gemini at 20.6. They trained it with data curated by over 1,000 physicians and it shows. They also shipped a Contemplating mode which is parallel multi-agent reasoning, hitting 58.4% on Humanity’s Last Exam with tools. Coding is the acknowledged weak point (77.4 on SWE-Bench Verified vs Opus 80.8) but for v1 from a brand new stack, this is extremely respectable.

Meta is Back!

The real story isn’t any single benchmark though, it’s distribution. Spark is rolling out across meta.ai, WhatsApp, Instagram, Threads, Messenger, and Ray-Ban Meta glasses — billions of users. Meta went from open Llama to a closed consumer model and they’re clearly playing a different game now (though Wang says future Muse versions might be open-sourced).

The deep-dive that’s really worth your time is Simon Willison’s post where he poked at the meta.ai chat UI and got the model to spit out descriptions of 16 hidden tools behind the scenes — full Code Interpreter with persistent Python 3.9, a visual grounding tool that does pixel-precise object detection (bounding boxes, point coordinates, counting — it located 8 objects including individual whiskers and claws on a generated raccoon), sub-agent spawning, file editing, and semantic search across Instagram/Threads/Facebook posts. It’s basically an entire agentic harness baked into the chat UI. Jack Wu from MSL confirmed the tools are part of a new harness built specifically for Spark’s launch. Meta stock went up 7% on this. They are very much back in the frontier game.

Guest highlights

We had an unprecedented packed show with 5 guests (also this is the shortest show we’ve ever

Swyx kicked us off with vibes from the AI Engineer floor: harness engineering as the dominant theme (gains are coming from the harness, not the weights), the rise of skills (English-as-programming-language) absorbing more of that harness work, and his thesis that supply-chain attacks like the recent LiteLLM and Axios incidents mean you should basically vendor everything (pip fork instead of pip install). We also chatted about how MCP has gone from “the most exciting protocol” to “settled and stable, therefore less interesting,” which is a great problem to have.

Peter Gostev from Arena (you saw a lot of him in the Mythos section above) also dropped a bonus on us: Arena just released 3 years of historical leaderboard data and actual prompt datasets on Hugging Face. He used to literally scrape the Arena website by hand into Google Sheets to make those over-time leaderboards we all loved, and now it’s all public. Also: he confirmed that Seedance 2.0 jumped ~80 Elo points above the next video model on Arena, which is unprecedented, since video models normally cluster within 10 points of each other.
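
For a feel of what an 80-point gap means, here is a small sketch using the textbook Elo expected-score formula. This assumes Arena scores sit on the standard 400-point logistic Elo scale; Arena’s actual rating method may differ in the details, so treat the numbers as rough intuition only.

```python
def expected_win_rate(elo_gap: float) -> float:
    """Textbook Elo expected score for the higher-rated side.

    Assumption: ratings follow the standard 400-point logistic scale.
    """
    return 1.0 / (1.0 + 10 ** (-elo_gap / 400.0))


for gap in (10, 80):
    print(f"{gap:>3} point gap -> {expected_win_rate(gap):.1%} expected win rate")
```

Under that assumption, a 10-point gap is close to a coin flip (roughly 51%), while an 80-point gap means the leader is expected to win roughly 61% of head-to-head votes.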

Vincent Koc — the #2 OpenClaw maintainer after Peter Steinberger — joined us fresh off the OpenClaw track stage. The OpenClaw codebase is now ~1.5 million lines of code including unreleased iOS and Android native apps. GitHub literally caps the issue/PR counter at “5K+” and they hit the ceiling. We talked about OpenClaw 2026.4.5 which ships /dreaming GA (Light/Deep/REM phases that defrag agent memory and write a human-readable Dream Diary to DREAMS.md), built-in video and music generation across 4 backends, GPT-5.4 as the new default, prompt-cache reuse improvements, and Control UI + docs in 12 new languages. Vincent’s framing of dreaming was beautiful — “how do you explain agent memory to a mom? You call it dreaming.” He also gave my favorite line of the show on the GPT-5.4 personality problem: incredible at coding, but soulless. (For what it’s worth, I came home after watching Project Hail Mary, cloned the Rocky voice, dropped it into my OpenClaw, and it was magical. That’s the kind of thing you can only do when the harness and the model are decoupled.)

VB from OpenAI told us Codex just hit 3 million weekly active users — up from 2 million last month. We talked plugins (the Stripe / Supabase / shadcn ones that ship as packages), sub-agents (yes, one is named Jason), and Guardian Approvals — an experimental mode that classifies each tool call by risk and only escalates the dangerous ones to you, so you don’t have to YOLO-mode everything. The story that stuck with me though is his 9 AM Codex automation: every morning it reads his Slack mentions, cross-references Gmail and Calendar, and creates 5-minute pre-brief calendar events for upcoming meetings. None of that is “coding.” That’s the super-app future hiding inside a “developer tool.” I’m stealing this workflow.
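
The general idea behind that kind of approval mode (classify each tool call by risk, only escalate the risky ones) can be sketched in a few lines. To be clear, this is not how Codex implements Guardian Approvals; every name and rule below is hypothetical, and a real classifier would presumably be much richer than a keyword list.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass
class ToolCall:
    tool: str
    argument: str


# Hypothetical rules, purely for illustration.
HIGH_RISK_TOOLS = {"delete_file", "send_email"}
HIGH_RISK_MARKERS = ("rm -rf", "sudo", "--force")


def classify(call: ToolCall) -> Risk:
    """Label a tool call HIGH if the tool or its argument looks dangerous."""
    if call.tool in HIGH_RISK_TOOLS:
        return Risk.HIGH
    if any(marker in call.argument for marker in HIGH_RISK_MARKERS):
        return Risk.HIGH
    return Risk.LOW


def needs_human_approval(call: ToolCall) -> bool:
    """Only high-risk calls get escalated; everything else runs unattended."""
    return classify(call) is Risk.HIGH


calls = [
    ToolCall("read_file", "README.md"),
    ToolCall("git", "push --force origin main"),
    ToolCall("delete_file", "notes.txt"),
]
escalated = [c for c in calls if needs_human_approval(c)]
print([f"{c.tool} {c.argument}" for c in escalated])
```

The design point is the middle ground: instead of approving every single call or approving nothing, the human only sees the small subset that the classifier flags.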

Omar Sanseviero from Google DeepMind came on to celebrate Gemma 4 crossing 10M+ downloads with 1,000+ Gemma-4-based fine-tunes already on HF (and the Gemma family total is now over 500M downloads). Gemma 4 is also the foundation for the next generation of Gemini Nano on Pixel/Samsung devices. Vision capability fixes for llama.cpp are landing. Gemma 4 is also live on W&B Inference if you want to play. Wolfram (whose entire household runs on Pixel + Google AI Studio, including his 70-year-old mother on voice unlock) was in heaven.

This Week’s Buzz

A short but spicy week from Weights & Biases:

W&B Automations are LIVE. You can now wire event triggers from your training runs (completion, eval thresholds, drift) into notifications, GitHub Actions, deployments, infra shutdowns — closing the loop from experiment to production. Pairs really well with the iOS app we recently shipped, so you can get a ping on your phone the moment something interesting happens on a run.

GLM 5.1 is live on W&B Inference (alongside Gemma 4 from last week) — the team is moving fast to host the best open models the moment they drop.

Wolfram published a deep dive on “more reasoning is not always better” on the W&B blog — the research behind his finding that giving models more thinking tokens can actually make them dumber on certain tasks. It’s the in-depth version of what we discussed on the show last week, with all the data. Go read it on wandb.com.

Also: shout out to everyone who came up to me at AI Engineer and said hi. The Wolf Bench mentions in particular made my day. If you’re listening to this and you’re at AIE — come find us, we’ll be around tomorrow too.

That’s it for this week — newsletter is short because the show was long and London is calling. As always, thanks for reading and listening 🫡

TL;DR April 9 - show notes and links:

Hosts and Guests

Alex Volkov – AI Evangelist, Weights & Biases (@altryne)

Co-Hosts – @WolframRvnwlf @yampeleg @nisten @ldjconfirmed

Guests: @swyx (AI Engineer / Latent Space), @petergostev (Arena, formerly LMArena), @reach_vb (OpenAI / Codex), @vincent_koc (OpenClaw #2 maintainer), @osanseviero (Google DeepMind / Gemma)

Big CO LLMs + APIs

Anthropic announces Project Glasswing and Claude Mythos Preview, a cyber-defense frontier model too dangerous to release publicly (X, Announcement)

Anthropic’s Claude Mythos is so powerful they won’t release it — found zero-days in every major OS and browser, escaped its sandbox, and scored 93.9% on SWE-bench (X, X, X, X)

Anthropic ARR jumps from $19B (February) to $30B in April — secondary tender sale completed, employees not selling ahead of IPO

Anthropic + Google TPU deal — Anthropic getting massive compute commitment from Google (who already owns ~10% of Anthropic), with Peter Gostev’s Compute Wars chart showing the gap to OpenAI closing

Anthropic ships Managed Agents — fully hosted agent runtime + infrastructure. Selling outcomes, not tokens

Meta launches Muse Spark, the first model from Meta Superintelligence Labs, with natively multimodal reasoning, multi-agent Contemplating mode, and deep health/visual capabilities (X, Blog)

Simon Willison deep dives into Meta’s Muse Spark model and uncovers 16 hidden tools including visual grounding and sub-agents in the meta.ai chat UI (X, Blog, Announcement)

Open Source LLMs

GLM-5.1 from Z.ai is #1 open-source on SWE-Bench Pro at 58.4%, runs autonomously for 8 hours with 1,700+ agent steps (X, HF, Arxiv)

Gemma 4 crosses 10M+ downloads, 1,000+ Gemma-4-based fine-tunes on HF. Did really well on Arena considering size — Peter Gostev confirmed it smashed many models on the Pareto curve

Nisten’s pick: Hermes 27B — trained specifically to be paired with the Hermes harness, allegedly distilled from Opus API. Model + harness shipped together as a portable unit

Tools & Agentic Engineering

OpenClaw 2026.4.5 — biggest release since 4.0:

/dreaming goes GA (Light/Deep/REM memory consolidation with a Dream Diary in DREAMS.md), built-in video + music generation across 4 backends, GPT-5.4 as new default, prompt-cache reuse improvements, Control UI + docs in 12 new languages (Release, Vincent, Dreaming docs, FOD#147)

OpenClaw codebase now ~1.5M lines including unreleased iOS + Android native apps. GitHub literally caps at “5K+” PRs/issues, and they hit the ceiling

Anthropic did NOT ban OpenClaw — they made Max-tier subscription usage of Opus via OpenClaw significantly more expensive, pushing many users to GPT-5.4 via Codex

Codex hits 3M weekly active users — up from 2M last month. VB walked through plugins (Stripe, Supabase, shadcn), sub-agents, Guardian Approvals (auto-classify tool-call risk), and experimental hooks

Cursor: remote agents + code review agent (78% issues caught pre-merge)

MemPalace: Milla Jovovich and Ben Sigman’s open-source AI memory system goes viral with 26K GitHub stars in 2 days, claims top benchmark scores, then transparently walks back overstated claims (X, GitHub, X, X, GitHub)

This Week’s Buzz (Weights & Biases)

W&B Automations are LIVE — event triggers from your runs into notifications, GitHub Actions, deployments. Pairs nicely with the new iOS app

GLM-5.1 and Gemma 4 both up on W&B Inference

Wolfram published an in-depth blog post on his finding that more reasoning is not always better (models can get dumber with more thinking time) — full writeup on wandb.com

Vision & Video

Seedance 2.0 launches in the US on Replicate, with up to 9 reference images, 3 videos, and 3 audio files for cinematic AI video generation (X, Announcement). Peter Gostev confirmed it jumped ~80 Elo points above the next video model on Arena, a massive gap where most video models cluster within 10 points

HappyHorse-1.0, a mysterious 15B video model from Alibaba’s Taotian Group, takes #1 on Artificial Analysis video arena beating Seedance 2.0, Kling 3.0, and Grok Video (X, X, X, X, Blog)

The Harry Potter “Drip Wizards” AI slop trend — Seedance-powered Hogwarts videos going hugely viral

AI Art & Diffusion & 3D

Show notes & key moments

Swyx on harness engineering: gains are coming from the harness, not the weights. The big labs are investing more and more in harness — it’s not going away. Skills (English-as-programming-language) are increasingly absorbing harness work

Swyx on AI Engineer tracks: MCP is “more settled and stable, therefore less interesting.” Coding agents track is bigger this year (Cursor, Factory, super-long-running). Voice & Vision split from Generative Media — multimodality as a single track no longer makes sense

Swyx on supply chain attacks: LiteLLM and Axios issues mean you should “vendor everything” (pip fork instead of pip install). Tool requests becoming prompt requests

Peter Gostev on Mythos pricing: $25 / $125 per M tokens (~5x Opus 4.6). But the real reason it’s not public isn’t safety: Anthropic likely just doesn’t have the compute to serve it

Peter Gostev on Compute Wars: OpenAI is way ahead of Anthropic on compute. The new Google TPU deal is Anthropic catching up — and weird that Google is propping up a competitor to DeepMind. (Same pattern as when Google’s $2B Anthropic investment effectively propped up AWS vs Google Cloud)

Peter Gostev on Arena data: Arena released 3 years of historical leaderboard data + actual prompts as datasets on Hugging Face. Previously he was scraping it by hand into Google Sheets — now he has Databricks access

VB on Codex workflows: every morning at 9 AM, Codex automation reads his Slack mentions, cross-references Gmail and Calendar, and creates a 5-minute pre-brief calendar event for upcoming meetings. None of it is “coding” — it’s all plugins + connectors

Vincent Koc on the GPT-5.4 personality problem: model is incredible at coding but “soulless.” Wolfram noticed it back in December and cancelled his subscription. Alex cloned the Rocky voice from Project Hail Mary and put it in his OpenClaw — “amazing”

Vincent Koc on Dreaming: three phases (REM, core, deep sleep) that defrag agent memory. The dream log is for the human in the loop — makes memory inspectable in a way a non-technical person (a mom) can understand

Vincent Koc on architecture: the open-source flood forced OpenClaw into a plugin architecture. “Not Lego — Ikea.” Refactored ~1M lines in 9 days at 2 AM at NVIDIA before Jensen’s keynote

Omar Sanseviero on Gemma 4: 500M+ total Gemma downloads across all variants. Gemma is the foundation for the next generation of Gemini Nano on Pixel/Samsung. Vision capability fixes for llama.cpp shipping

Wolfram’s Pixel/Google household: kids using AI Studio + Antigravity to build games, his 70-year-old mother using voice unlock on her Pixel