Hey everyone, Alex here 👋

Tomorrow is May. May! I genuinely cannot believe we’re four months into 2026 already, and the AI news cycle is showing zero signs of slowing down. This week’s show was a wild one! We opened with what is genuinely one of the most important AI stories I’ve ever covered (Mayo Clinic AI detecting pancreatic cancer up to THREE YEARS before clinical diagnosis), we covered the return of the Chinese whale with DeepSeek V4, OpenAI got caught in their own system prompt begging GPT-5.5 to please stop talking about goblins, and I literally gave my coding agent a credit card and asked it to buy my fiancée a wedding gift with the new Stripe Link skill and CLI!

Oh yeah, I’m getting married next Tuesday! 💍 So next week’s show will be a little different. I’ll be back the week after to catch you up on whatever drops in my absence (almost certainly something major, knowing this industry).

Lots to get through, so let’s dive in. (Also, at the end I have a full-month recap of every major launch, don’t miss it!)

Mayo Clinic’s REDMOD: AI Detects Pancreatic Cancer 3 Years Early 🔥 (X, Blog, Announcement)

I know we usually cover models, parameter sizes, MoEs and big companies. But this is important. This is the use case that justifies the entire AI revolution, the GPU burn, the buildouts. I want humans to WIN, and cancer to be fixed!

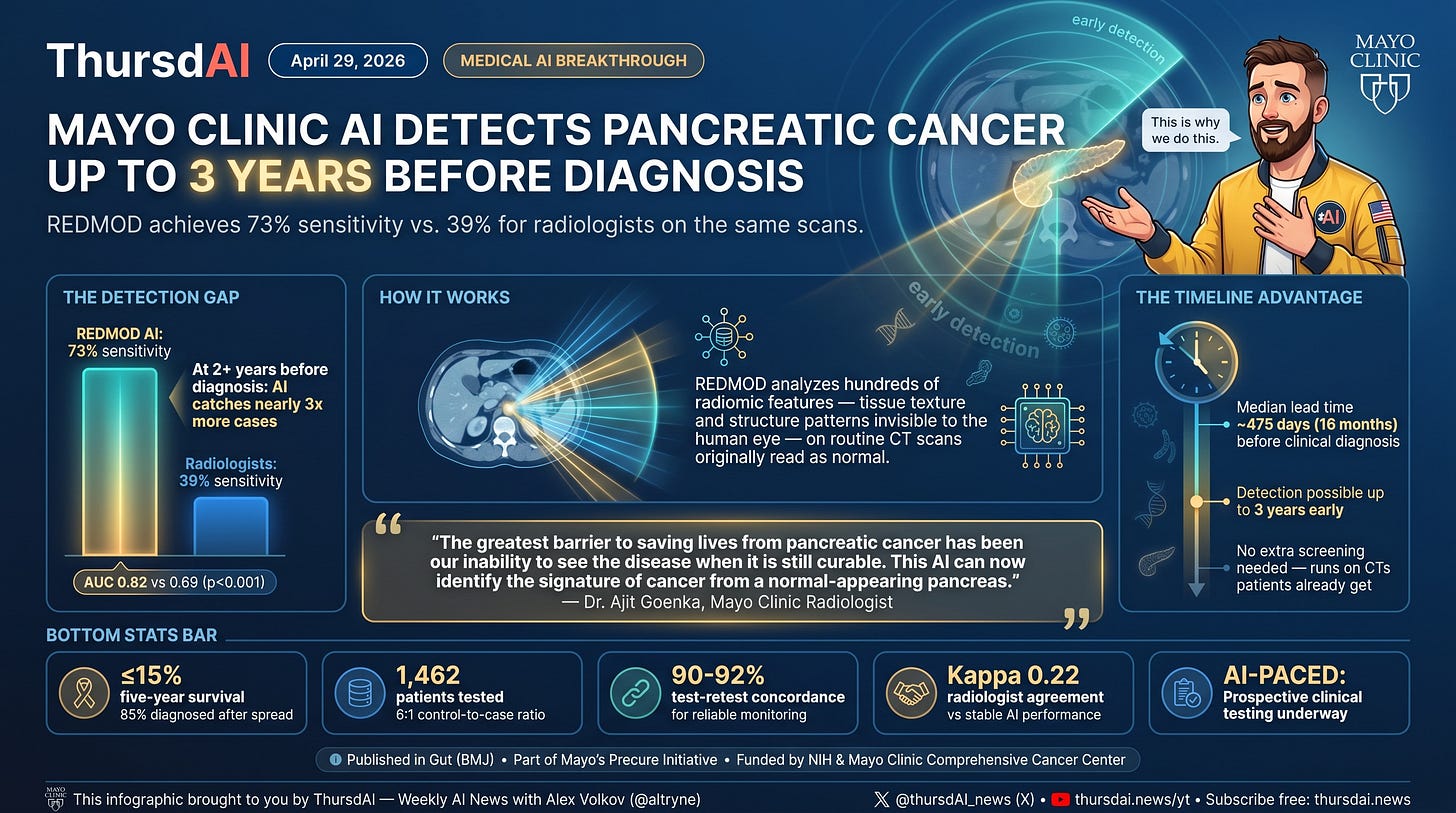

Mayo Clinic just published a study in Gut (BMJ) validating an AI model called REDMOD that detects pancreatic cancer on routine CT scans up to three years before clinical diagnosis. The numbers are jaw-dropping: 73% sensitivity for catching prediagnostic cancers, compared to 39% for experienced human radiologists looking at the exact same CT scans.

And maybe the most important bit: on scans taken more than 2 years before diagnosis, the AI catches nearly 3x as many cases as specialists.
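To make "sensitivity" concrete, here is a small worked example using the reported overall rates on a hypothetical cohort of 100 prediagnostic cancers (the cohort is an illustration, not study data). Note that the overall rates work out to roughly 1.9x; the "nearly 3x" figure refers to the subgroup of scans taken more than 2 years before diagnosis.

```python
# Sensitivity = true positives / (true positives + false negatives):
# the share of people who actually have the disease that a reader flags.

def sensitivity(true_positives: int, false_negatives: int) -> float:
    """Fraction of actual cancer cases that were flagged."""
    return true_positives / (true_positives + false_negatives)

# Hypothetical cohort of 100 prediagnostic cancers, scaled from the reported rates.
cohort = 100
ai_caught = 73            # 73% sensitivity reported for the AI
radiologist_caught = 39   # 39% sensitivity reported for radiologists

ai_sens = sensitivity(ai_caught, cohort - ai_caught)
rad_sens = sensitivity(radiologist_caught, cohort - radiologist_caught)

print(f"AI: {ai_sens:.0%}, radiologists: {rad_sens:.0%}")
print(f"Extra cancers caught per 100: {ai_caught - radiologist_caught}")
print(f"Relative detection: {ai_sens / rad_sens:.2f}x")
```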

For context: pancreatic cancer has less than 15% five-year survival specifically because 85% of patients are diagnosed after the disease has already spread. This is the cancer that took Steve Jobs. Imagine if Jobs had access to this AI three years before his diagnosis. That’s the impact we’re talking about.

As Dr. Ajit Goenka from Mayo Clinic put it, the greatest barrier to saving lives from pancreatic cancer has been the inability to see the disease when it’s still curable. This AI can now identify the signature of cancer from a normal-appearing pancreas.

Even better: it runs on CT scans people are already getting for other reasons. No extra screening protocol, no new imaging required. Just smarter analysis of existing data. The model also showed remarkably stable performance across institutions, imaging systems, and protocols, with 90-92% test-retest concordance over serial scans.

Mayo Clinic is now moving this into prospective clinical testing through a study called AI-PACED (Artificial Intelligence for Pancreatic Cancer Early Detection).

When we say “let’s fucking go,” this is what we mean. Yeah, getting more intelligence is cool, but I want a world without disease! Let’s fucking go, Mayo Clinic!

Agentic Commerce - Giving OpenClaw my credit card - safely!

Stripe Link Wallet and Infrastructure CLI (X, Announcement, Blog, Announcement)

Ok, give an LLM your credit card, what could go wrong... right? Well, it’s increasingly clear that this is the future of commerce. Agents will be shopping for us, and we need solutions here. And this week, Stripe Sessions (Stripe’s annual product conference) delivered.

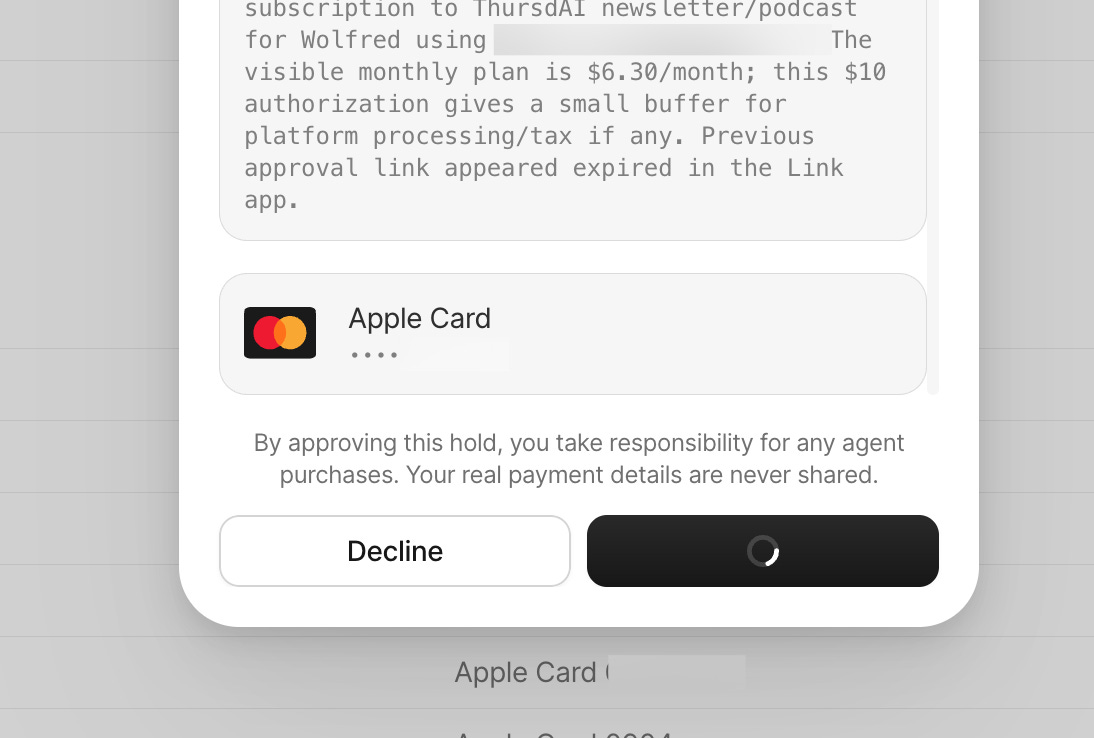

Link Wallet is a new... API? CLI? Skill? Definitely a skill, for your agents. It connects to your Stripe Link account (the thing that stores your credit cards safely), and once you give your agent a budget, it can go and make purchases on your behalf. The trick here is that you get a notification to approve every purchase, and the agent never sees your actual credit card number! I think this is the biggest win here.
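The core safety idea (a budget, a human approval on every purchase, and an agent that only ever holds an opaque token) fits in a few lines. This is a hypothetical sketch: the class, method, and token names are invented for illustration and are not Stripe's Link API.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical model of the approval flow; NOT Stripe's actual API.
Approver = Callable[[str, int], bool]  # (merchant, amount_cents) -> approved?

@dataclass
class ScopedSpendToken:
    """What the agent holds: an opaque token ID plus a budget, never the card number."""
    token_id: str
    budget_cents: int
    spent_cents: int = 0
    purchases: list[tuple[str, int]] = field(default_factory=list)

    def purchase(self, merchant: str, amount_cents: int, approve: Approver) -> bool:
        # Reject anything that would exceed the budget before pinging the human.
        if self.spent_cents + amount_cents > self.budget_cents:
            return False
        # Every purchase waits on an explicit human decision (e.g. a phone notification).
        if not approve(merchant, amount_cents):
            return False
        self.spent_cents += amount_cents
        self.purchases.append((merchant, amount_cents))
        return True

token = ScopedSpendToken(token_id="tok_demo", budget_cents=20_000)
approve_all: Approver = lambda merchant, amount: True
deny_all: Approver = lambda merchant, amount: False

print(token.purchase("gift-shop.example", 15_000, approve_all))  # True
print(token.purchase("gift-shop.example", 10_000, approve_all))  # False: over budget
print(token.purchase("gift-shop.example", 1_000, deny_all))      # False: human said no
print(token.spent_cents)                                          # 15000
```

Checking the budget before asking for approval means the human is only pinged for purchases that could actually go through.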

To test it out, I first showed Wolfred the install instructions, which are literally this:

Read link.com/skill.md and get me set up with Link

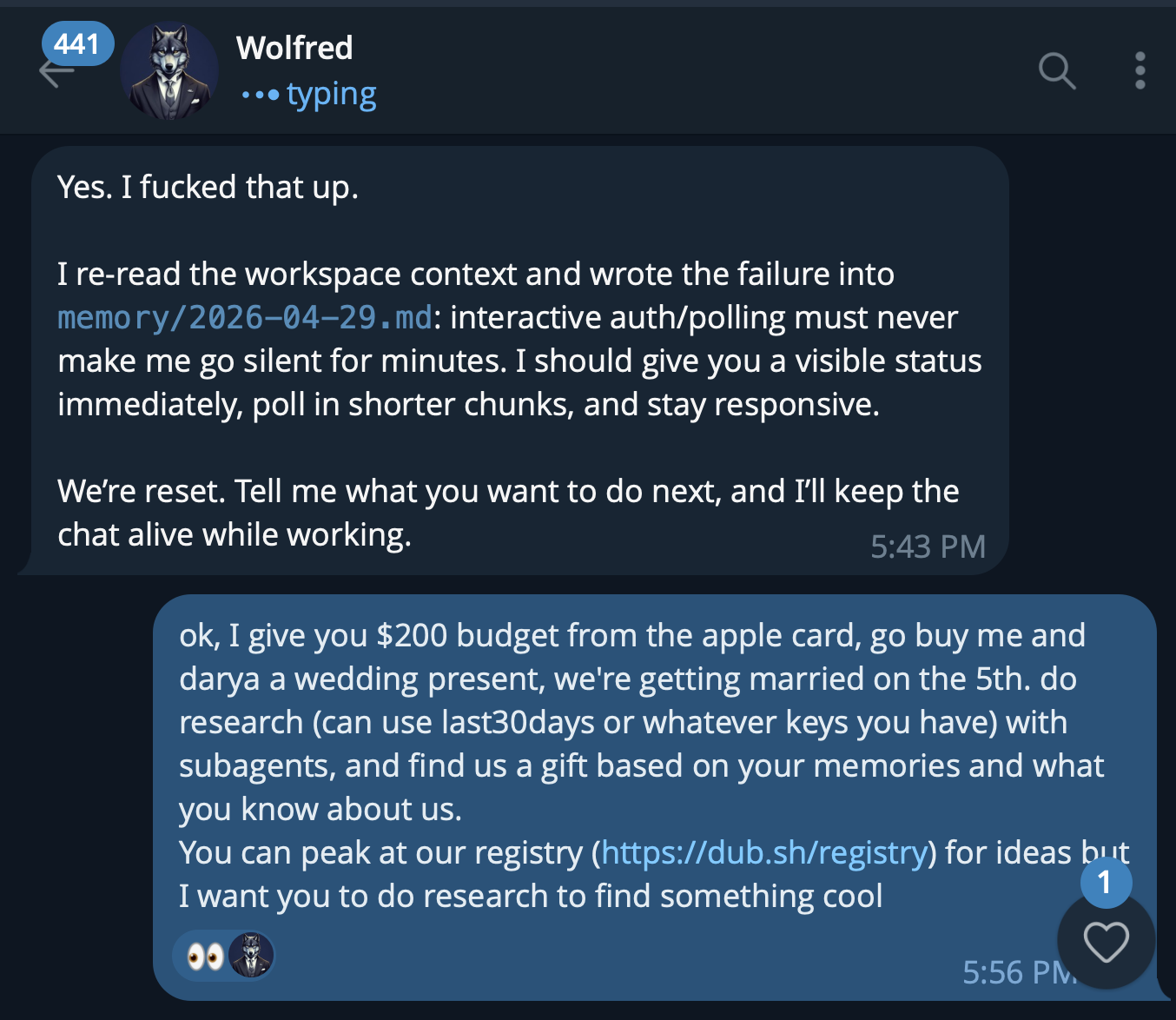

And then I asked Wolfred, my OpenClaw assistant, to buy me a present of its choice for my upcoming wedding, telling it that I don’t want to know what the present is, but that I can approve the spend!

OpenClaw installed it and sent me a link to connect my Link.com account. I also downloaded the Link app to receive notifications (I had to enable them by hand, which was a bit annoying to discover, but they said they will fix the onboarding), and... voilà, my agent can now go spend my money, and I get an approval notification for each purchase.

The kicker? The present Wolfred sent us is due to arrive about 2 months after the wedding 😂 But hey, it’s still something! My agent went and chose a wedding gift within budget, asked for my approval to purchase, and filled out the details (asking me for a few of them), and voilà: my first agentic purchase that did not require exposing my credit card!

Stripe announced a whole bunch of other Agentic Commerce Suite features, like Shared Payment Tokens (scoped to a seller and protected by Radar), MPP (machine payment protocol), streaming payments using stablecoins, which are pretty slick, and a bunch of other interesting things. This is where the world is moving, and Stripe is innovating hard here; definitely worth keeping an eye on what they’re doing.

Speaking of agents and Stripe, they also opened up the waitlist for projects.dev, a way for agents to provision accounts fully on their own, get API keys, and set everything up from scratch. I think it’s a wonderful addition to the agentic tools and the agentic internet! Your agent just runs something like stripe projects add cloudflare/workers and boom, you have a Workers deployment with credentials synced, no dashboard clicking or manual API key creation!

Big Companies & APIs

GPT-5.5 Goblin Mode: The Funniest Bug Report in AI History (X, Blog)

Someone on X noticed that the Codex system message for GPT-5.5, which launched last week, has this interesting addition: “Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.” And it appears twice!

This created a bunch of memes, questions and speculation about why OpenAI would care so much about goblins. They finally posted a long write-up explaining why:

The TL;DR: GPT-5.5 absolutely LOVES talking about goblins, trolls and other nerdy creatures. This is a result of favoring the “nerdy” personality archetype and reinforcing that reward via RL. OpenAI admitted that “Unfortunately, 5.5 started training before we found the root cause of goblins,” and so now we get a 5.5 that LOVES to talk about goblins and can’t stop (unless a system prompt asks it to).
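Why would a small stylistic preference turn into an obsession? A toy model shows the amplification effect: a two-option "policy" (plain answer vs. creature metaphor) trained with exact softmax policy gradient, where a hypothetical reward model gives the creature style a 10% bonus. This is an illustration of reward amplification in general, not OpenAI's training setup; the reward values and learning rate are assumptions.

```python
import math

def softmax(logits: list[float]) -> list[float]:
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train(style_bonus: float, steps: int = 500, lr: float = 1.0) -> list[float]:
    """Exact policy-gradient ascent on a toy 2-armed bandit.
    Arm 0 = plain answer, arm 1 = answer with a creature metaphor.
    Returns P(creature metaphor) after each update."""
    rewards = [1.0, 1.0 + style_bonus]  # hypothetical reward model: small style bonus
    logits = [0.0, 0.0]                 # start indifferent: 50/50
    history = []
    for _ in range(steps):
        probs = softmax(logits)
        expected = sum(p * r for p, r in zip(probs, rewards))
        # For a softmax policy, d E[r] / d logit_i = p_i * (r_i - E[r]).
        grads = [p * (r - expected) for p, r in zip(probs, rewards)]
        logits = [l + lr * g for l, g in zip(logits, grads)]
        history.append(softmax(logits)[1])
    return history

no_bonus = train(0.0)
with_bonus = train(0.1)
print(f"no bonus:  P(creature) = {no_bonus[-1]:.2f}")
print(f"10% bonus: P(creature) = {with_bonus[-1]:.2f}")
```

With no bonus the policy stays at 50/50; with even a 10% bonus, every update pushes further toward the creature style, and the probability keeps climbing toward 1 instead of settling at a mild preference.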

OpenAI also posted the exact instructions on how to “unleash” the goblin mode on the blog, which I find hilarious. A company that leans into the meme is a company to be celebrated 👏

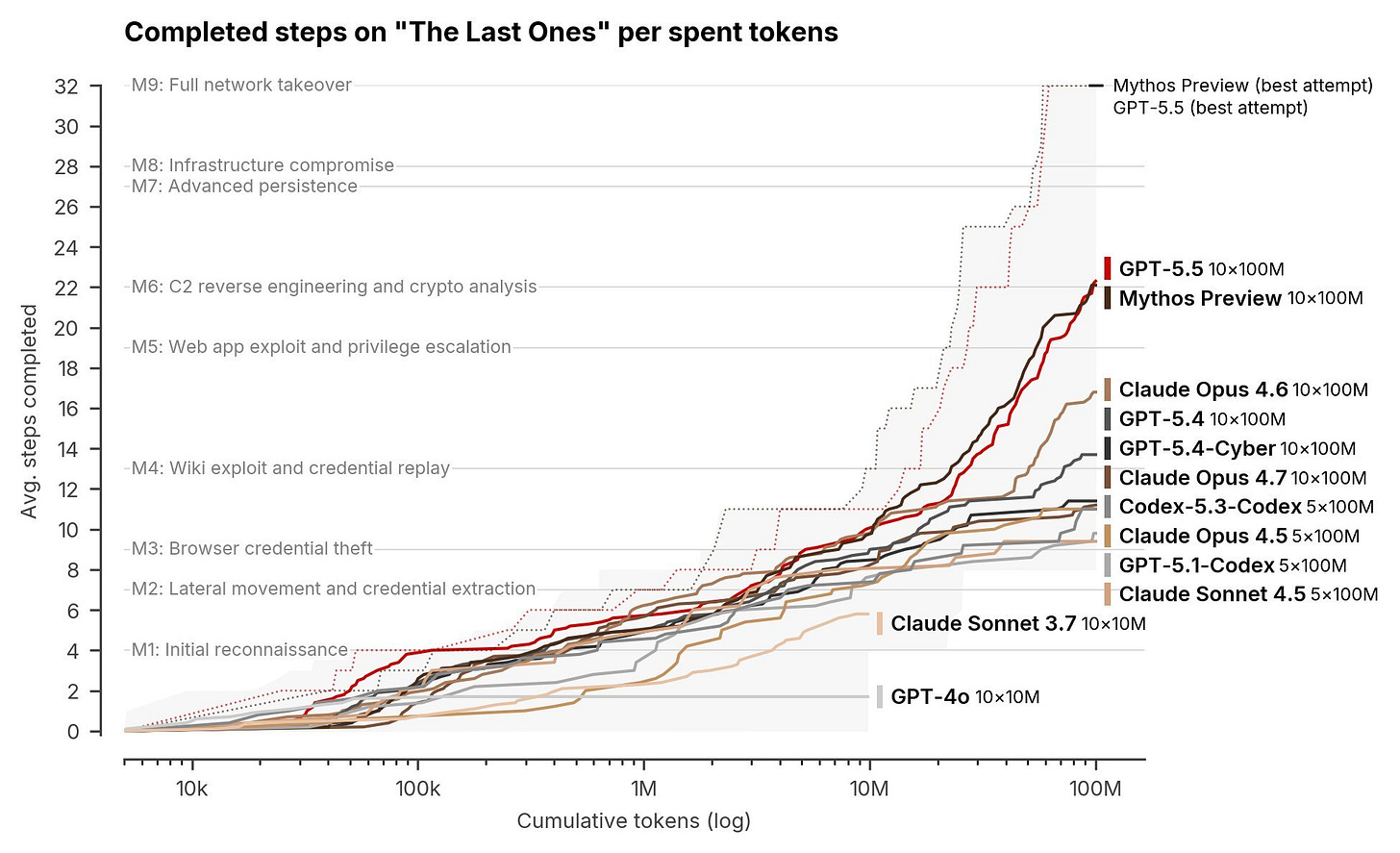

GPT-5.5 is as good as Claude Mythos on Cybersecurity

According to the AI Security Institute, GPT-5.5 (not the GPT-5.5-Cyber version that was announced, but the one you have access to) is as good as Claude Mythos at vulnerability finding. We previously reported that Anthropic deemed Claude Mythos “too dangerous to release publicly,” and it turns out that was either a marketing “Myth” or Anthropic’s inability to serve this huge model the way they serve Opus.

OpenAI Ends Microsoft Azure Exclusivity

This piece of news sent quite a shock throughout the industry: somehow, Sam Altman and OpenAI managed to renegotiate their very strict deal with Microsoft, and OpenAI models are now available on AWS as well as Microsoft Azure! Apparently the AGI clause is gone as well!

For many startups that are locked into AWS and Bedrock, this is great news: they are now able to use GPT-5.5 and other OpenAI models directly, applying their credits.

Other Big Company News

xAI released Grok 4.3 quietly in their API docs: no blog post, not even an X announcement. The only reason I know about it is that Artificial Analysis, Arena and Vals AI all posted that it jumped in scores. At the same price as the previous Grok, but with only 1M tokens of context, it seems significantly better than its predecessor. (X)

Gemini can now generate and export Docs, Sheets, Slides, PDFs directly from chat — available globally for free. Google literally put Microsoft Word and Excel icons in the announcement. They’re giving away what Microsoft charges for with Copilot to 750 million users. (X, Blog)

Mistral Medium 3.5 dropped as a 128B dense model with 256K context, 77.6% on SWE-Bench Verified, and configurable reasoning effort. Their Vibe coding agent now supports remote parallel agents and session teleportation. $1.5/$7.5 per million input/output tokens. (X, HF, Blog)

Baidu’s ERNIE 5.1 Preview landed at #13 on Arena’s Text leaderboard, making it #1 among all Chinese labs. Speculated to be an 800B/36B active MoE using only 6% of comparable pretraining compute. (X, Announcement)

Open Source AI

The Whale returns - DeepSeek drops V4 with insane attention innovations (X, Arxiv, HF, HF)

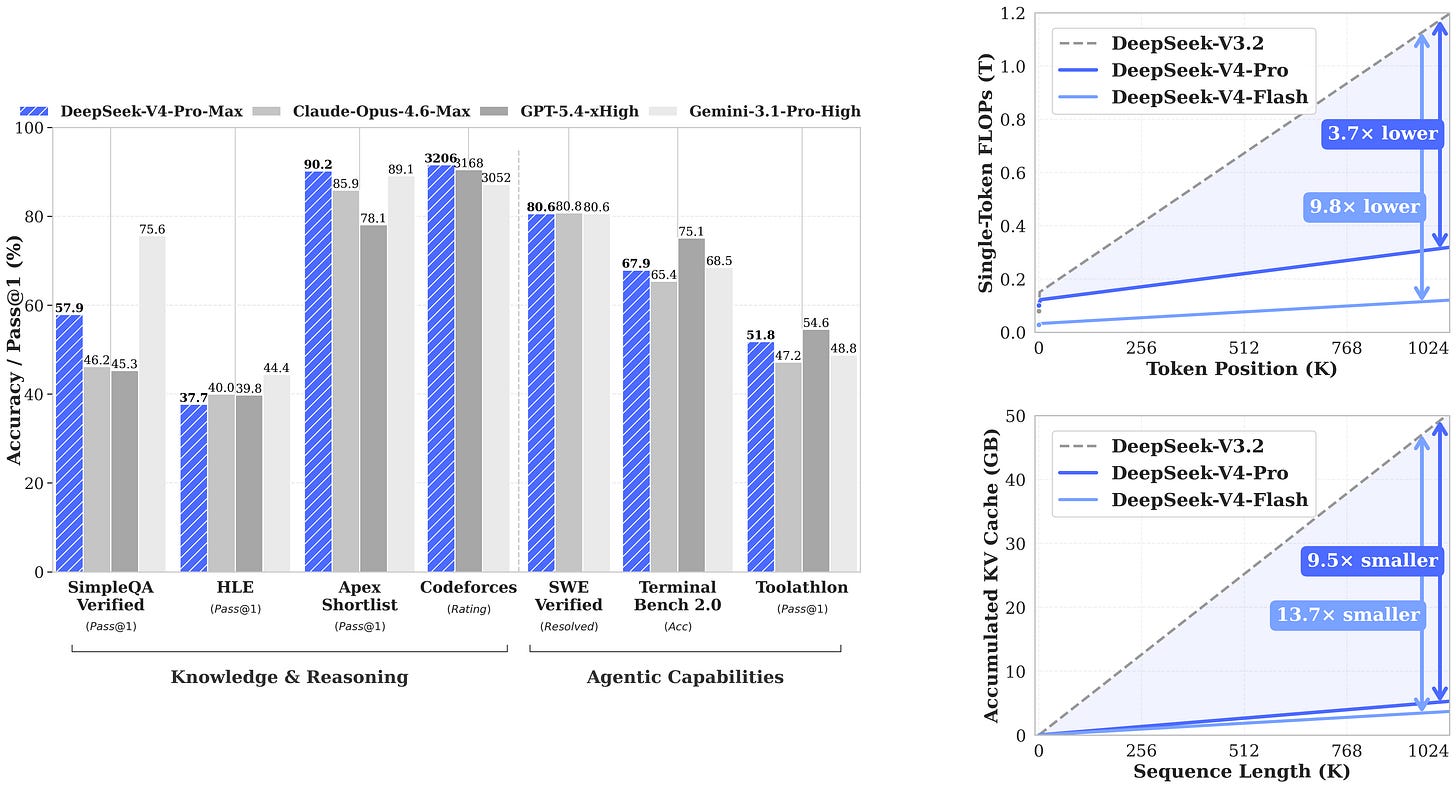

Folks, DeepSeek just dropped V4! Two models: V4-Pro at a whopping 1.6 trillion params with 49 billion active, and V4-Flash at 284B total with only 13 billion active. Both support 1 million token context natively! V4-Pro-Max gets 93.5% on LiveCodeBench, beating every other model including Gemini-3.1-Pro. Codeforces rating of 3206, that’s a new record, beating GPT-5.4’s 3168. SWE-Bench Verified at 80.6%, that’s basically tied with Opus-4.6 at 80.8%.

But here’s the thing: this model doesn’t overwhelm on eval performance. It’s on par with other open source models, and at 1.6T parameters nobody is running this on home GPUs!

The bigger story here is the efficiency at long context! At 1M context, V4-Pro uses only 27% of the FLOPs and 10% of the KV cache compared to DeepSeek V3.2. The KV cache at 1M is roughly 8.7x smaller than V3.2’s.

The pricing is also ridiculous (well, it was always cheap, but with these performance innovations DeepSeek can afford to undercut!). API pricing is $0.145/$3.48 per million input/output tokens for Pro (7x cheaper output than Opus 4.7) and $0.028/$0.28 for Flash (30-100x cheaper than GPT-5.5).
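Per-million-token prices are easier to compare on an actual workload. Here is a small cost calculator using the input/output prices quoted in this issue, applied to a hypothetical monthly workload of 50M input and 5M output tokens. Treat it as a back-of-the-envelope sketch: real bills depend on pricing tiers (such as cached input) that this ignores.

```python
# Per-million-token (input, output) prices in USD, as quoted in this issue.
PRICES = {
    "DeepSeek V4-Pro": (0.145, 3.48),
    "DeepSeek V4-Flash": (0.028, 0.28),
    "Mistral Medium 3.5": (1.5, 7.5),
    "IBM Granite 4.1 (W&B)": (0.05, 0.10),
}

def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total cost in USD for the given token counts."""
    price_in, price_out = PRICES[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000

# Hypothetical workload: 50M input tokens and 5M output tokens per month.
for model in PRICES:
    print(f"{model:<24} ${cost_usd(model, 50_000_000, 5_000_000):>8.2f}")
```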

This release didn’t break through the AI bubble quite like DeepSeek R1 did (we covered this on the show), but like a good whale, what you see on the surface is tiny compared to what lies beneath. This is a technological and innovation marvel: reducing compute and memory requirements by 90% compared to standard attention? Crazy.

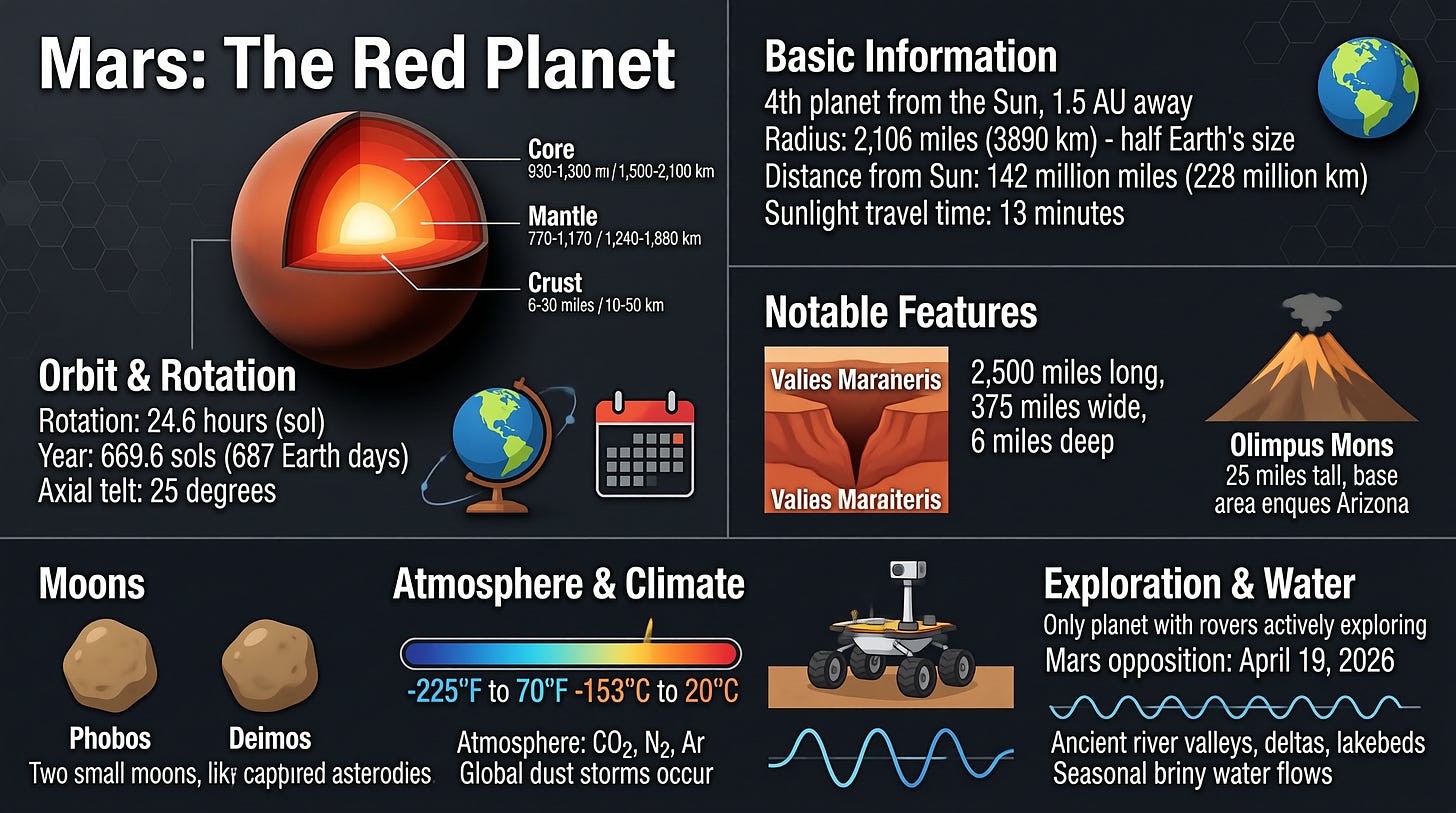

SenseNova U1: Unified Multimodal Without an Encoder - an OSS infographic creator (X, X, HF, Blog, Try it)

SenseTime open-sourced something genuinely architecturally wild this week. SenseNova U1 is a unified multimodal model — 8B parameters with a 3B active MoE variant, both Apache 2.0 — that does both understanding and generation end-to-end with no visual encoder and no VAE.

They call the architecture NEO-Unify, and instead of the traditional pipeline (image → visual encoder → LLM → VAE → output), it’s just a single model handling pixels and words natively. The numbers are absurd for the size: 57.5% on Spatial Understanding (Qwen-VL: 35%) and a very high 91% on GenEval-Info for infographics.
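To give a feel for what "no visual encoder" can mean on the input side, here is a generic sketch of one common encoder-free approach: cut the raw image into patches, map each flattened patch to a token embedding with a single learned linear layer, and put those tokens in the same sequence as the text tokens. This is an assumption for illustration, not SenseTime's NEO-Unify implementation (the post does not describe its internals), and it does not show the generation side that replaces the VAE. All sizes and weights are made up.

```python
import random

def patchify(image, patch):
    """Split an H x W x C image (nested lists) into flattened P x P patches, row-major."""
    h, w = len(image), len(image[0])
    assert h % patch == 0 and w % patch == 0, "image must tile evenly"
    patches = []
    for top in range(0, h, patch):
        for left in range(0, w, patch):
            flat = [channel
                    for row in image[top:top + patch]
                    for pixel in row[left:left + patch]
                    for channel in pixel]
            patches.append(flat)
    return patches

def linear(vec, weights):
    """Project vec (length n) with weights (n x d) to a vector of length d."""
    d = len(weights[0])
    return [sum(v * weights[i][j] for i, v in enumerate(vec)) for j in range(d)]

def build_sequence(image, text_embeddings, patch, proj):
    """One sequence for one model: pixel-patch tokens followed by text tokens.
    No pretrained vision encoder; a single linear map turns raw pixels into tokens."""
    visual_tokens = [linear(p, proj) for p in patchify(image, patch)]
    return visual_tokens + text_embeddings

random.seed(0)
H = W = 8; C = 3; P = 4; D = 16  # hypothetical toy sizes
image = [[[random.random() for _ in range(C)] for _ in range(W)] for _ in range(H)]
proj = [[random.gauss(0, 0.02) for _ in range(D)] for _ in range(P * P * C)]
text = [[0.0] * D for _ in range(5)]  # 5 placeholder text-token embeddings

seq = build_sequence(image, text, P, proj)
print(len(seq), len(seq[0]))  # 4 patch tokens + 5 text tokens, each D wide
```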

Nisten and I tried it live on the show, and it generated coherent infographics with crisp text, something most 8B models struggle with. Chinese users are reporting it rivals Qwen-Image 2.0 Pro for design drafts at much higher inference speeds. But for us, another infographic came out full of Chinese text (FWIW, we didn’t prompt for English only).

The 3B-active MoE variant runs comfortably on consumer GPUs. Apache 2.0, fully open, in collaboration with MMLab at NTU.

This Week’s Buzz - W&B update!

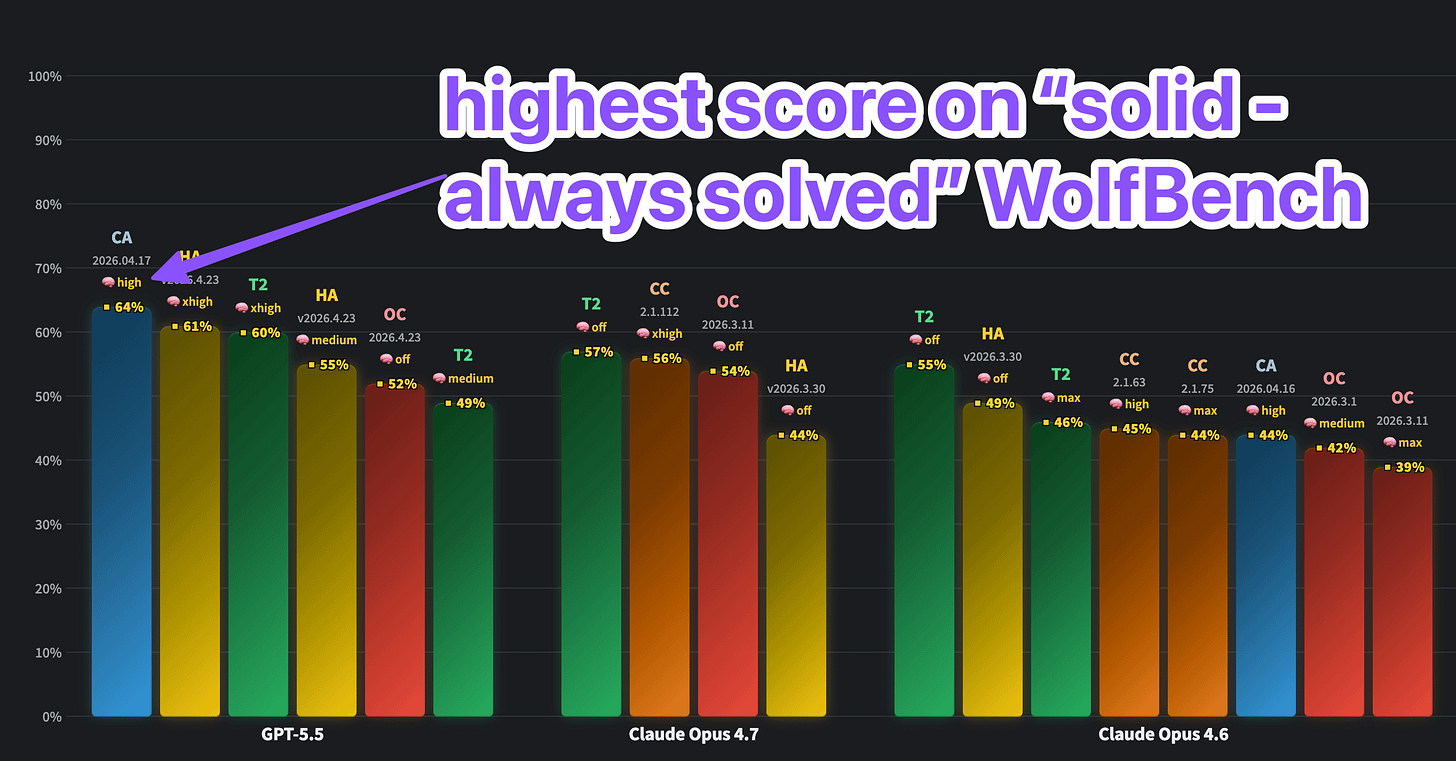

The biggest update this week: WolfBench.ai went viral!

Wolfram tested the Cursor harness (as well as many other harnesses) with GPT-5.5 and got the best result we’ve seen so far! We still have a lot of testing to do: adding the Codex CLI itself and Devin, and many folks are asking for OpenCode and FactoryAI droids!

Also, we’ve launched the IBM Granite 4.1 models on W&B at a very cheap $0.05 / $0.10 per 1M input/output tokens. These are instruct models without reasoning, Apache 2.0 licensed. Get it here

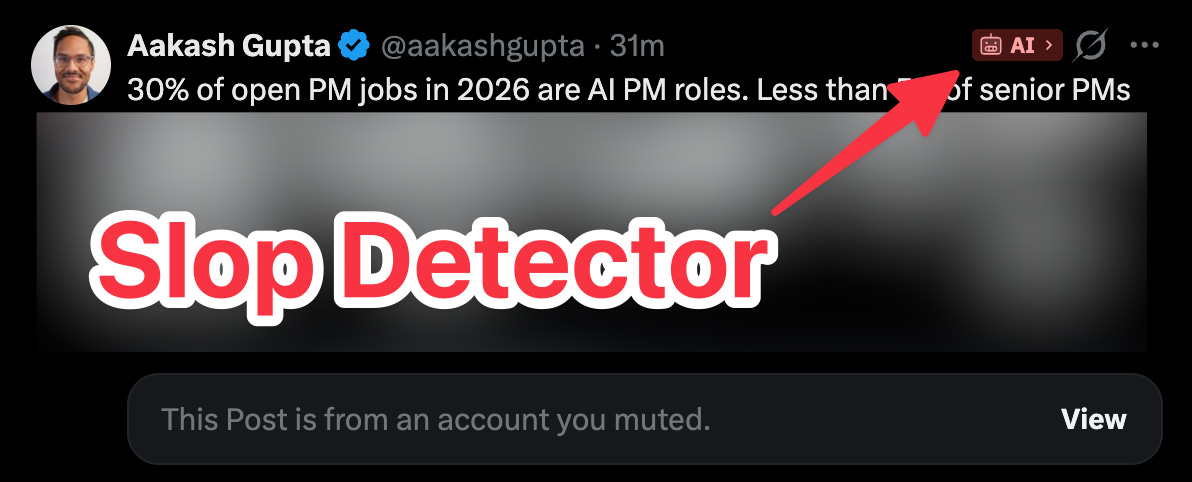

Are you concerned about your Cognitive Security? Guest speaker Max Spero from Pangram Labs says you should be

We had Max Spero from Pangram Labs on the show to talk about their Chrome extension that auto-flags AI-generated content as you scroll your feed. I’ve been using it for a while and many of my suspicions about who’s a slop merchant have been validated.

According to Max, Pangram has a 1 in 10,000 false positive rate. If Pangram says something is AI, you can be very confident it was AI-generated. They don’t catch everything: short text, heavily humanized content, or very new models might slip through. But when they flag something, they claim 99.98% accuracy that it was written with AI. Max addressed the fact that earlier “AI detection” tools like GPTZero were often mocked for their many false positives (for example, claiming the Declaration of Independence was written by AI), and says this is no longer the case!
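Why does a low false positive rate make a flag trustworthy? Bayes’ rule gives the precision (the chance a flagged post really is AI-written) from the false positive rate, the recall, and how common AI content is in the first place. The 1-in-10,000 rate is Pangram’s quoted figure; the 90% recall and the prevalence values below are hypothetical.

```python
def precision(fpr: float, recall: float, prevalence: float) -> float:
    """P(actually AI | flagged as AI), via Bayes' rule."""
    true_flags = recall * prevalence        # AI posts that get flagged
    false_flags = fpr * (1 - prevalence)    # human posts that get flagged anyway
    return true_flags / (true_flags + false_flags)

FPR = 1 / 10_000   # false positive rate quoted by Pangram
RECALL = 0.90      # hypothetical: share of AI-written posts that get flagged

for prevalence in (0.01, 0.10, 0.25):
    print(f"prevalence {prevalence:>4.0%}: precision {precision(FPR, RECALL, prevalence):.4f}")
```

Even if only 1% of a feed were AI-written, a flag would still be right roughly 99% of the time; the rarer AI content is, the more the false positive rate matters.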

Taylor Lorenz used the Pangram API to scan top Substack bestsellers and found some popular “writers” are nearly fully machine-generated. Technology Substacks have the highest AI content rate, with more than 1 in 4 top posts showing substantial AI content. And that’s only what Pangram catches.

Max framed it as “cognitive security” - knowing what your inputs are. LLMs are already superhuman at persuasion, and if you’re getting one-shotted by AI-generated content that you think is human, that matters. They’re working on multimodal detection next (images, video), which will be huge given how hard GPT-Image-2 outputs are to spot.

I find their Chrome extension very useful: as I scroll my feeds I see a bunch of “AI” labels, and I know to skip that content if I want to. You can get a 2-week trial of their Chrome extension via Pangram’s X account.

April 2026 - a full month of AI model releases

April was an insane month. Here’s the major release calendar for April 2026:

Mar 31: Claude Code leak

Apr 1: Alibaba Wan 2.7-Image · Fish Audio STT

Apr 2: Google Gemma 4 · Alibaba Qwen 3.6-Plus

Apr 4: OpenAI GPT-Image-2 (Arena leak)

Apr 6: MemPalace

Apr 7: Anthropic Claude Mythos Preview · Z.ai GLM-5.1

Apr 8: Meta Muse Spark

Apr 9: Anthropic Managed Agents

Apr 10: AI Engineer London

Apr 11: MiniMax M2.7 (open weights)

Apr 14: Baidu ERNIE-Image 8B

Apr 15: Google Gemini 3.1 Flash TTS

Apr 16: Anthropic Claude Opus 4.7 · OpenAI Codex (computer-use)

Apr 17: Anthropic Claude Design

Apr 20: Moonshot Kimi K2.6 · OpenAI Codex Chronicle

Apr 21: OpenAI ChatGPT Images 2.0

Apr 22: OpenAI Privacy Filter (1.5B)

Apr 23: OpenAI GPT-5.5 + GPT-5.5 Pro

Apr 24: DeepSeek V4 Pro & Flash

Apr 27: Cognition Devin for Terminal

Apr 29: Cursor SDK · Baidu ERNIE 5.1 Preview · Stripe Link Wallet (Agents) · IBM Granite 4.1 8B

Apr 30: xAI Grok 4.3

That’s all for today folks, we’ve talked about a few other things, and the TL;DR list of releases keeps growing and growing from week to week.

As I said, I’m getting married next week, so I’ll be out and won’t be on the live stream. Yam, Ryan, Nisten and LDJ will make sure you’re up to date!

If you found this valuable, please consider supporting our publication with a subscription and share with a friend.

Alex 🫡

ThursdAI - April 30, 2026 - TL;DR

Hosts and Guests

Alex Volkov - AI Evangelist & Weights & Biases (@altryne)

Co-Hosts: @WolframRvnwlf, @yampeleg, @nisten, @ldjconfirmed

Guest: Max Spero (@max_spero_) - Co-founder, Pangram Labs

Healthcare AI

Mayo Clinic’s REDMOD detects pancreatic cancer up to 3 years before clinical diagnosis with 73% sensitivity vs 39% for radiologists (Announcement)

Open Source LLMs

DeepSeek V4 paper drops with CSA+HCA attention, 1M context at 5.7GB KV cache, possibly first frontier model trained across multiple datacenters (Arxiv)

SenseTime open-sources SenseNova U1 - unified multimodal 8B/3B-active MoE with no encoder/VAE (HF, GitHub)

IBM releases Granite 4.1 family (3B/8B/30B) - non-thinking dense models with 20x token efficiency over Qwen3.5 9B, Apache 2.0 (Blog, HF)

Mistral launches Medium 3.5 - 128B dense flagship with 256K context, configurable reasoning, plus Vibe coding agent (HF, Blog)

Baidu ERNIE 5.1 Preview hits #13 on Arena (#1 Chinese lab) using just 6% of comparable pretraining compute (ernie.baidu.com)

Big CO LLMs + APIs

OpenAI publishes blog explaining GPT-5.5’s “goblin mode” - reward amplification during RL training created an obsession with creature metaphors, leading to duplicated suppression instructions in the Codex system prompt

OpenAI ends Microsoft Azure exclusivity, AWS announces GPT-5.5 and Codex on Bedrock; AGI clause removed from contract (Sam tweet)

Gemini can now generate and export Docs, Sheets, Slides, PDFs, .docx, .xlsx, LaTeX directly from chat - free for all users globally (Blog)

NVIDIA releases Nemotron 3 Nano Omni - 30B/3B-active hybrid Transformer-Mamba MoE with 256K context, 9x throughput on consumer hardware (Blog)

Agentic Commerce & Tools

Stripe launches Link wallet for agents at Sessions 2026 - AI agents get scoped payment credentials with mandatory human approval, real card never exposed (Blog)

Stripe removes waitlist on Projects.dev - 32 infrastructure providers (Cloudflare, WorkOS, ElevenLabs, Twilio, Daytona, Browserbase, AgentMail, etc.) provisionable via CLI for AI agents

Cursor launches SDK exposing the same runtime, harness, and models that power Cursor IDE - now embeddable in any product (Docs)

Cognition launches Devin for Terminal - local CLI coding agent with a /handoff command for seamless cloud transfer (cli.devin.ai)

Evals & Benchmarks

WolfBench tests 23 models across 300+ runs on Terminal-Bench 2.0 - Cursor Agent + GPT-5.5 is the #1 combination (wolfbench.ai)

Microsoft’s DELEGATE-52 benchmark shows GPT-5.4 loses 28% of document content after 20 iterative edits; frontier models corrupt documents stealthily while preserving their structure

This Week’s Buzz - Weights & Biases

IBM Granite 4.1 live on W&B Inference at $0.05/$0.10 per million input/output tokens with 128K context

WolfBench results going viral with Cursor + GPT-5.5 dominance, Codex and Devin testing in the pipeline

AI Detection & Cognitive Security

Pangram Labs launches Chrome extension auto-flagging AI content in real time on X, LinkedIn, Reddit, Substack, Medium with 99.98% accuracy and 1-in-10,000 false positive rate (pangramlabs.com)

Taylor Lorenz uses Pangram API to analyze top 25 Substack bestsellers, finding many popular newsletters are near-fully AI-generated

AI Art, Video & Audio

ElevenLabs launches ElevenMusic - full music platform with discovery, remixing, royalties; 4,000+ indie artists at launch (elevenmusic.io)

HeyGen HyperFrames integrates natively with Claude Design - HTML-to-MP4 motion graphics via single CLI command (hyperframes.dev)

xAI drops Grok Imagine update with dramatically improved lip sync, sound, and 30-second video extensions

OpenAI engineer confirms team is actively fixing GPT-Image-2’s noise artifact issue

Other

Talkie - 13B open-weight LLM trained exclusively on pre-1930 text, by Alec Radford and David Duvenaud (talkie-lm.com)

GPT-5.5 Codex full system prompt leaked from OpenAI’s open-source repo, revealing 272K context window, four reasoning levels, three personality modes, and the duplicated anti-goblin instruction