Hey everyone, Alex here 👋

I am back live on ThursdAI after a week off, and yes, I am now a married man! Thank you for all the congrats, and also thank you to Ryan and Yam for holding down the fort last week while I tried very hard to disconnect.

This week was a relatively chill one in AI land (no, really, for once), which actually let us go deep on some really fascinating stuff. We’ve got Thinking Machines Lab finally shipping their first real research with these wild interaction models, Meta Muse Spark showing up in actual products (and it’s surprisingly good!), the Musk v. Altman trial dropping juicy disclosures, and probably the biggest narrative shift on the show today: all of us are quitting OpenClaw. Yeah, you read that right. We’ll get into why.

Also, breaking news from this morning: CoreWeave just launched Sandboxes for your agents. I’ll cover that in This Week’s Buzz, but if you’ve been waiting for production-grade sandbox infrastructure that powers 9 out of 10 major AI labs, today’s your day.

Oh, and we had Vic Perez from Krea on to talk about Krea 2, their first foundation image model trained completely from scratch. Let’s dig in.

The Great OpenClaw Exodus towards Hermes 🫠

I’m going to start with what was honestly the most emotional thread of the entire show, because three of us (me, Ryan, AND Wolfram) all independently switched away from OpenClaw this week. And we kicked off the show literally processing this together on air.

The story is the same across all of us. OpenClaw was magical back in February when we first brought it to you. Things just worked. But after Anthropic’s pricing changes (we covered this — they made Max-tier subscription usage of Opus through OpenClaw significantly more expensive), and after months of the constant Lego-construction-style breakage on every update, the magic faded. Ryan said it best on the show; he was “constantly fixing OpenClaw” instead of using it.

So Ryan went to Codex. Wolfram and I both went to Hermes from Nous Research. And folks, things just work again. That February feeling is back, and with GPT 5.5, it’s an incredible assistant!

Why Hermes? A few things:

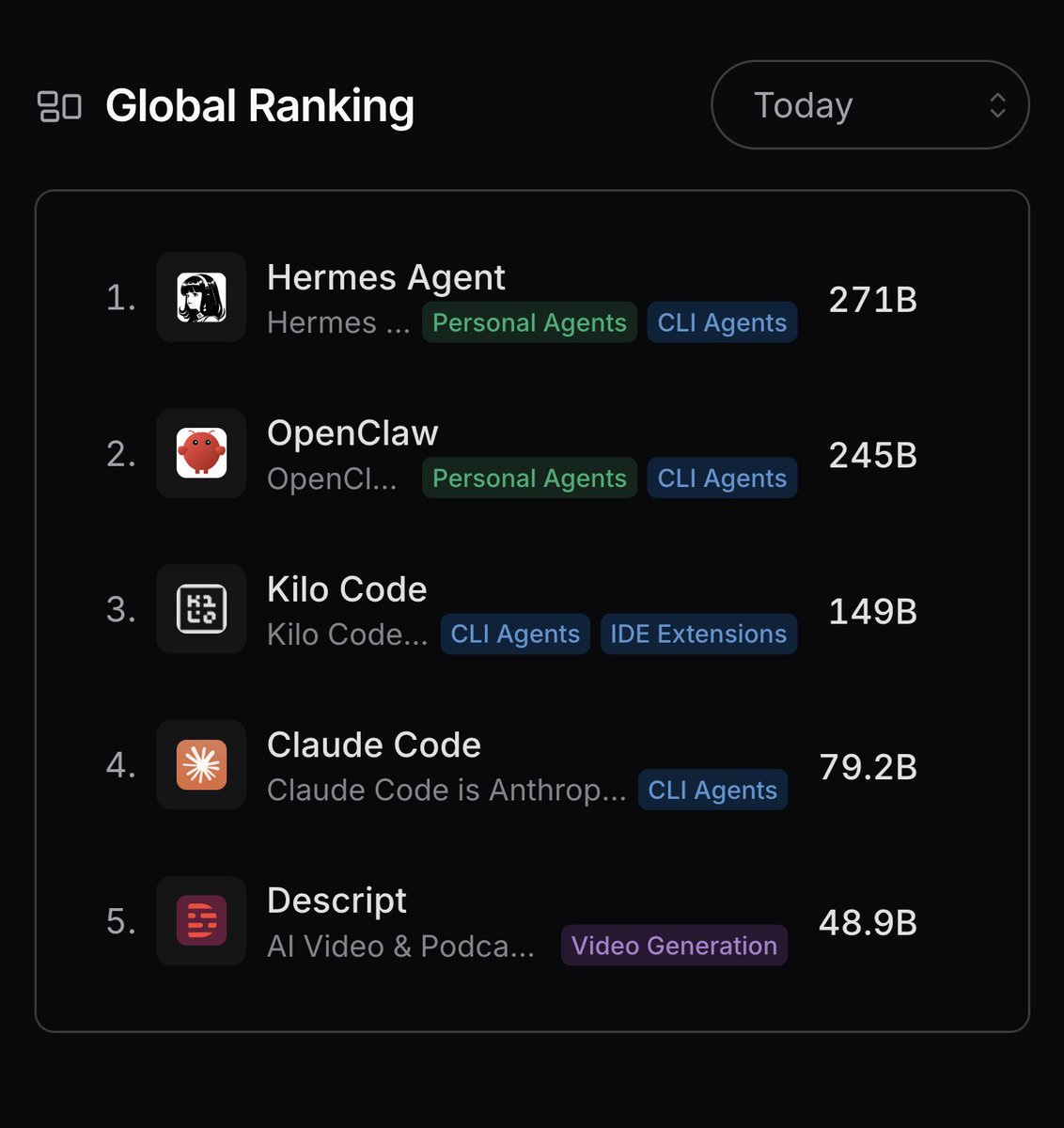

It’s now the #1 most-used CLI agent on OpenRouter globally, passing OpenClaw and even passing Claude Code on OpenRouter usage. That’s a massive milestone for Nous Research and shows we’re not alone in this migration.

It has /goal (more on this in a sec), steering, and background computer use via the TryCUA integration.

It’s open, which means if you’ve built a system like Wolfram’s “Amy” or my “Wooolfred” or Ryan’s “R2” (yes, we know each other’s assistants’ names better than each other’s kids’ names at this point 😅), you can port your memories, profile, and soul files seamlessly.

The migration was so smooth that Wolfram literally had Codex talk to Hermes to plan and execute the migration of his home assistant agent. Two agents collaborating to migrate themselves. We are living in 2026 and it’s easier than ever to switch. If you haven’t tried Hermes, give it a go!

Steering is maybe the most underrated addition to Hermes. It started as a Codex feature, but it exists in Hermes too: with GPT 5.5, you can send a follow-up message and the agent will see it after the next tool call, not after the whole chain of thought has completed (which is what OpenClaw defaults to). This makes the conversation feel much more natural!
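To make the steering difference concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how Hermes or Codex actually implement steering: `run_agent`, the `inbox` queue, and the `steer_between_tools` flag are hypothetical names made up for illustration.

```python
from collections import deque


def run_agent(tool_calls, inbox, steer_between_tools=True):
    """Run tool calls in order and return a log of events.

    Hypothetical toy: `inbox` holds follow-up messages from the user.
    With steering on, the inbox is drained after every tool call;
    with steering off, it is only read once the whole chain is done.
    """
    log = []
    for call in tool_calls:
        log.append(f"tool:{call}")
        if steer_between_tools:
            while inbox:
                log.append(f"user:{inbox.popleft()}")
    # Anything still unread is seen only after the chain completes.
    while inbox:
        log.append(f"user:{inbox.popleft()}")
    return log


# The follow-up is queued up front here to keep the toy deterministic;
# in a real session it would arrive while a tool call is running.
steered = run_agent(["read_file", "edit_file", "run_tests"], deque(["use pnpm"]))
unsteered = run_agent(
    ["read_file", "edit_file", "run_tests"],
    deque(["use pnpm"]),
    steer_between_tools=False,
)
```

In the steered run the follow-up lands right after the first tool call, so the remaining steps can take it into account. In the unsteered run it only shows up once all three tool calls have finished.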

Agents buying wedding gifts using Stripe wallet!

Real quick story: Two weeks ago we covered Stripe’s new wallet APIs that let your agents have actual budgets to spend money on the web. I told my agent (back when it was still OpenClaw) to “go buy us a wedding present, don’t tell me what it is.” It half-worked, half-broke.

This week, a giant custom map of our travels just arrived in the mail. I approved one Stripe push notification and the rest just happened. My agent has also been paying my traffic tickets via screenshots, and Hermes now does this for me too (HOV lane ones, not like.. DUI; 80% of my driving is Tesla FSD).

So so happy that my AI assistant got us a present of his own choosing! And it arrived in physical form. Not perfect (the date there is our proposal date ha, but it’s still cool!)

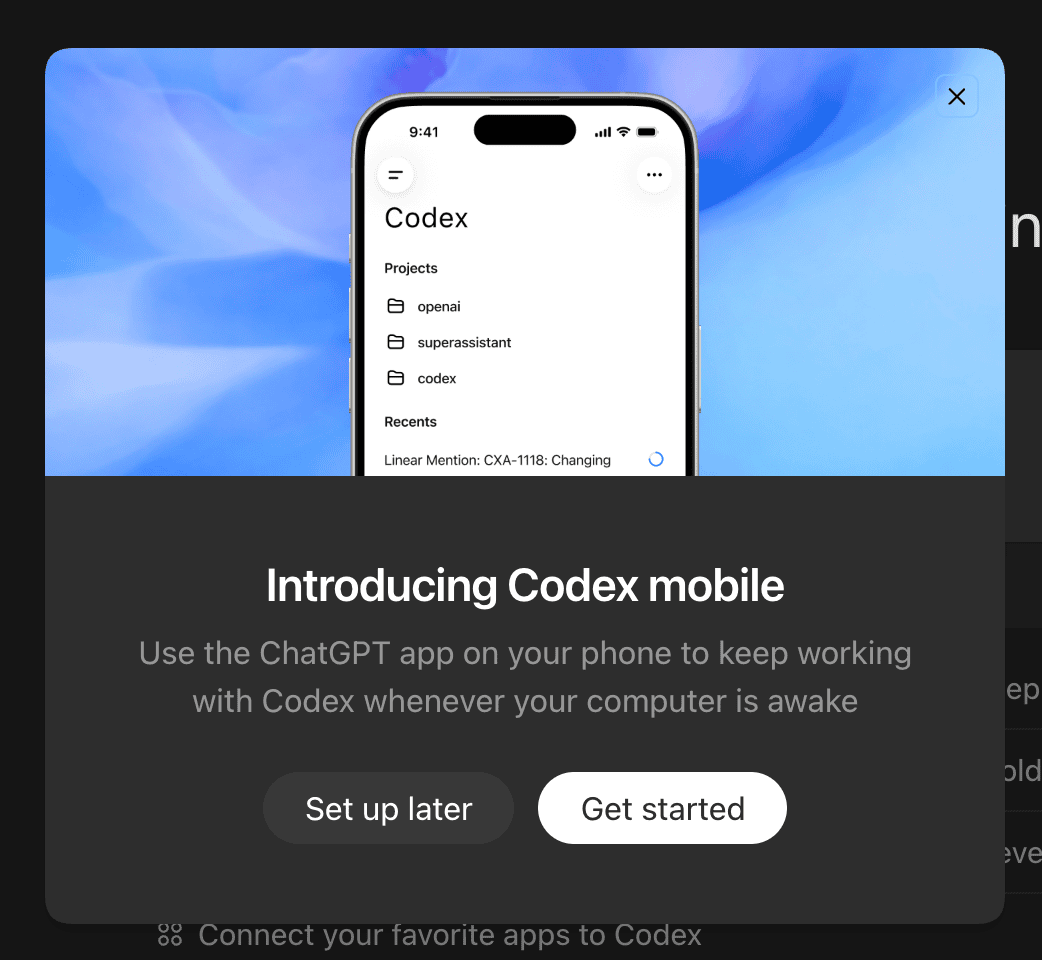

Codex gets remote control! (X)

While Wolfram and I moved to Hermes, Ryan Carson moved to Codex, and during the show I wondered: how does he communicate with his R2? Well, just a few minutes after we concluded the live show, OpenAI dropped some breaking news!

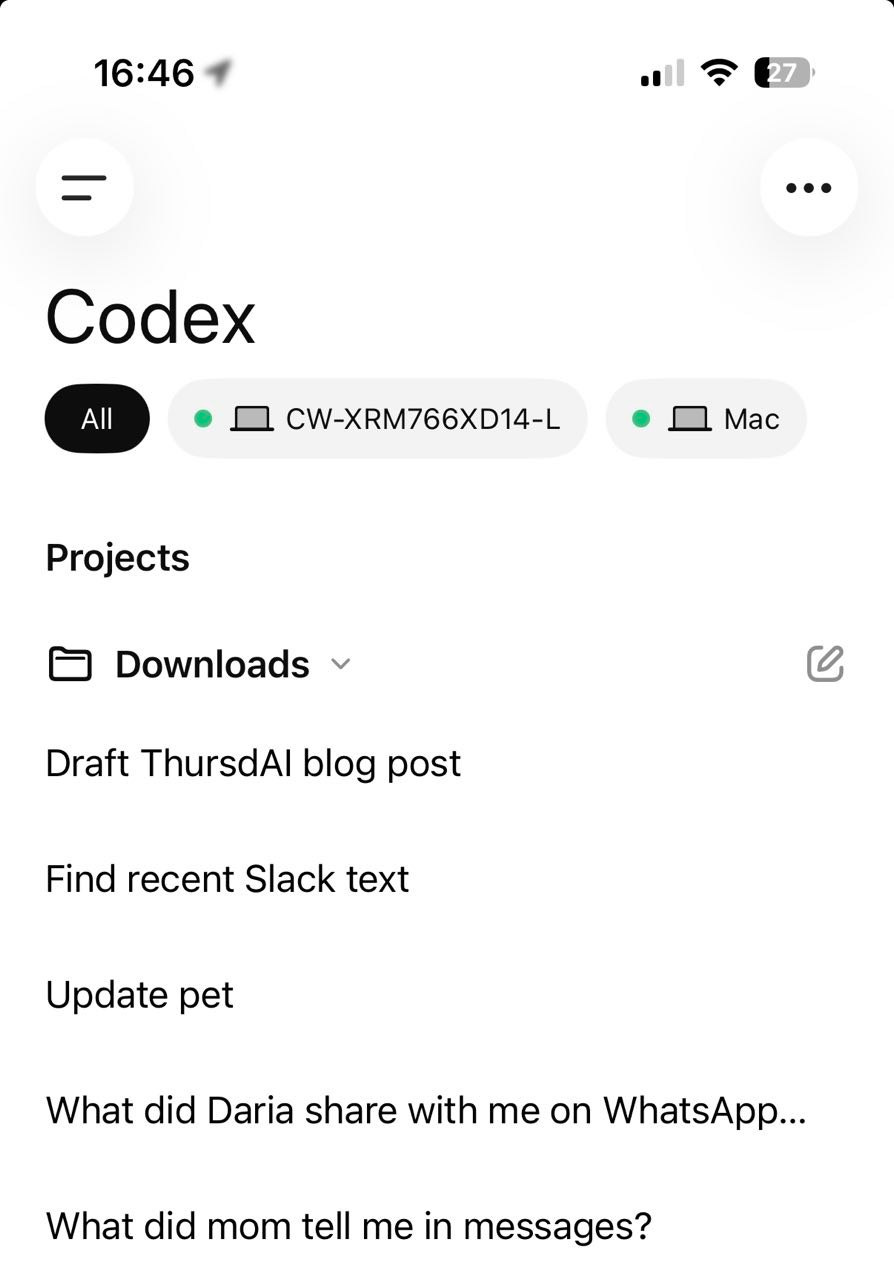

Codex is now on mobile. It connects to any Mac (for now) from any iOS/Android device, and you can control your Codex, your whole Mac with Computer Use, your browser with the Chrome extension, and everything else Codex can do... on the go!

This is a huge unlock for many folks, and for many, I assume this will nearly replace the need for something like OpenClaw/Hermes, be much more secure by default and work flawlessly out of the box!

The setup is super easy: after updating your ChatGPT app, you get a new “Codex” window, and after updating the Codex Mac app, you can pair them. Voila, all your local Codex sessions are on the iOS app as well. This works way better than Claude remote btw, significantly so.

The fact that you can now add multiple Macs (plus SSH servers, since they also added the ability to remote control other servers via SSH) is a huge deal. OpenAI is quickly leapfrogging Anthropic, and many are noticing this and switching away from Claude Code.

Big Companies & APIs

Meta Muse Spark: The Voice AI That Actually Does Things 🎤

Let’s start with the one I actually got to play with: Meta launched Muse Spark-powered voice conversations across the Meta AI app, WhatsApp, Instagram, Facebook, and the Ray-Ban Meta glasses (X, Announcement).

And folks, I was honestly surprised by how good this is. I recorded a 5-minute live test and it’s not cut at all. The voice mode reacts almost instantaneously. It’s multilingual (it correctly identified Russian and Hebrew even if it can’t respond in them yet). It can search the Meta network mid-conversation — I showed it a screenshot of one of my own Instagram Reels and within half a second it found the exact reel and explained what we were discussing. Half a second.

It also does live camera AI, where it watches what your phone sees. The only thing it failed to identify? My Meta Ray-Ban glasses. The Meta AI didn’t know what Meta Ray-Bans look like. That was the funniest moment of the whole demo.

The team at Meta’s Superintelligence Labs spent 4.5 months building this, and the thing that really stood out to me from the announcement is this line: “Our models are scaling predictably. Muse Spark is an early data point on our trajectory, and we have larger models in development.” Translation: this is the small one. Bigger Muse models are coming.

Meta’s superpower here, as always, is distribution. They can shove this into the daily product surface of billions of users. ChatGPT advanced voice mode (still on the GPT-4o family) has gotten genuinely worse lately — I barely use it anymore. Meanwhile Meta is shipping good real-time voice across WhatsApp and Instagram. This is the speed-of-product-integration game, and Meta is winning it.

Thinking Machines Lab Previews full duplex Interaction Models 🤯

This is the one Wolfram and I really geeked out on. Mira Murati’s Thinking Machines Lab finally released real research — and it’s a fundamentally different bet than what anyone else is making (X, Blog).

They’re calling them interaction models, and TML-Interaction-Small is a 276B parameter MoE with 12B active, trained from scratch for native real-time human-AI collaboration. Note: they announced it, they didn’t release weights or an API yet — limited research preview is coming “in the next few months.”

Here’s why this matters and what makes it different from Meta’s voice mode (which is also impressive!): the architecture is 200ms micro-turns where the model is continuously perceiving audio, video, AND text WHILE simultaneously generating output. There’s no turn boundary detection, no VAD harness — the model itself handles all of that natively. It’s full duplex baked into the weights.

The demos are fire. The model can:

Speak while listening (live translation in real-time)

Watch you do pushups and proactively count them out loud as you go

Wait silently until someone enters the frame, then say “friend”

Generate a chart while continuing to explain a concept to you
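The micro-turn idea is easier to picture as a loop. The sketch below is purely illustrative and assumes nothing about TML's real architecture beyond the 200ms slice length quoted above: `Frame`, `toy_policy`, and `run_full_duplex` are hypothetical names, and the "model" is a hand-written rule standing in for the "wait silently, then say friend" demo.

```python
from dataclasses import dataclass

MICRO_TURN_MS = 200  # slice length quoted in the announcement


@dataclass
class Frame:
    """One 200ms slice of input (hypothetical structure)."""
    audio: str = ""
    person_in_view: bool = False


def toy_policy(frame, state):
    """Stand-in for the model: return speech for this slice, "" means silence."""
    if frame.person_in_view and not state["greeted"]:
        state["greeted"] = True
        return "friend"
    return ""


def run_full_duplex(frames):
    """Perceive and emit in every slice; there is no separate 'user turn'."""
    state = {"greeted": False}
    timeline = []
    for index, frame in enumerate(frames):
        output = toy_policy(frame, state)  # input and output share the slice
        timeline.append((index * MICRO_TURN_MS, output))
    return timeline


timeline = run_full_duplex(
    [Frame(), Frame(), Frame(person_in_view=True), Frame(person_in_view=True)]
)
```

The point of the toy is that silence is just another output. The loop never waits for a turn boundary; it decides every 200ms whether to speak, which is what lets the real demos stay quiet and then react the moment something changes.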

The benchmarks: 77.8 on FD-bench v1.5 vs GPT Realtime 2.0 at 46.8, and 0.40s turn-taking latency vs over a second for everyone else. Nisten was unimpressed (he pointed out 1.2 seconds for a 12B-active model on a B300 rack is not exactly snappy), and that’s a fair take — but the capabilities here, particularly visual proactivity and time-awareness, are genuinely novel.

The philosophical split is really interesting. While every other lab is racing toward full autonomy, Mira is saying interactivity should scale with intelligence. That’s the bet. And given the all-star team she’s pulled together (people from ChatGPT, Character.ai, Mistral, PyTorch, OpenAI Gym, Fairseq, SAM)... I’m here for it.

What I really hope happens: someone leaks the weights. A 276B MoE with 12B active is exactly the kind of model we need to be able to quantize to run on something like the Reachy Mini for a fully offline, always-present home assistant. Wolfram, I know you’re thinking the same thing 👀

Musk v. Altman: The Trial Drops Some Wild Disclosures and Testimony

Okay, this one is half drama, half disclosure goldmine. The trial is happening live as we record, and closing statements are TODAY (I transcribed both of them here and here). There’s no video allowed because the courtroom was so packed with Elon fanboys, so they’re livestreaming audio only on YouTube. I set up my Hermes agent to listen to the audio stream and send me 2-minute summaries. That alone was worth the show. Apparently Elon was not in court during closing arguments (he’s in China).

The big-picture story: Musk is suing OpenAI and Microsoft (specifically) claiming OpenAI abandoned its nonprofit bargain. OpenAI’s defense is essentially “Musk wanted 90% equity and full control, walked away when he didn’t get it, and is now suing over a success he predicted had a 0% chance.”

Here are the highlights from sworn testimony from Sam Altman, Satya Nadella, and Ilya Sutskever that I think are the most consequential:

Musk wanted 90% of OpenAI’s equity to start. Per Altman under oath: “An early number that Mr. Musk threw out was that he should have 90% of the equity. It then softened, but it always was a majority.”

December 2018 Musk email to the team: “My probability assessment of OpenAI being relevant to DeepMind/Google without a dramatic change in execution and resources is 0%, not 1%. I wish it were otherwise.” Yeah. The guy suing them now once put in writing they had zero shot.

September 2017 ultimatum from Musk: “Either go do something on your own or continue with OpenAI as a nonprofit.” They did. He’s now suing them for it.

The Microsoft economics: Satya Nadella confirmed under oath that the $13B target redemption amount compounds to roughly $180B in four years, with 20% annual increases starting in 2025.

The AGI clause got rewritten. Originally, if AGI was achieved, the Microsoft deal would dissolve. The renegotiated version (per Altman) is that Microsoft no longer gets research IP at AGI but will continue to get product IP through end of 2032.

Sutskever’s pre-firing memo, confirmed under oath: Sam Altman “exhibits a consistent pattern of lying, undermining his execs, and pitting his execs against each other.” When asked if he still believed it: “I thought so at the time and had been thinking about Altman issues for at least a year.”

Satya wanted answers and never got them. Under oath, Nadella said he asked the board explicitly why Sam was fired and “they never gave me a specific reason... none of that was coming through.” He called the firing process “amateur city as far as I’m concerned.”

Microsoft is now the SMALLEST mega-investor in OpenAI. SoftBank ~$30B, Nvidia ~$30B (Altman: “It was either 20 or 30. I think it was 30 also.”), Amazon “larger than Microsoft.” Total private capital raised: ~$175B.

The Helion conflict of interest. Altman owns ~22.8M shares of Helion ($1.65B), roughly a third of the company. Helion has a 2028 power deal with Microsoft and a scale deployment agreement with OpenAI. He recused from the OpenAI board vote on it, and as he said under oath, “But I was in the room, yes.”

And then there’s Ilya’s pearl that genuinely made me pause. When asked about the difference in AI capability between 2018 (when they started) and now: “It’s like the difference between an ant and a cat.”

Yam asked the obvious question: what does Elon actually get if he wins? Honestly, I had no idea until I heard the arguments with the judge, and apparently it’s a LOT! Musk is asking for $135B in monetary damages (which he claims he won’t take for himself; they would go to OpenAI’s non-profit arm), plus non-monetary relief that would force the removal of Sam Altman and Greg Brockman from OpenAI and revert the split to restore OpenAI to its original “non-profit” mission.

This is ... quite an ask, and apparently the judge will decide on it, not the jury. The jury will only decide whether there was a breach of charitable trust or unjust enrichment. This was one of the biggest bombshell trials, and we’ll keep you up to date on what happens.

Open Source AI

The TanStack Supply Chain Attack

Okay, this one’s serious. Ryan posted his most viral tweet ever about this — the TanStack supply chain attack, aka the “mini Shai Hulud” worm. If you ran an npm update during the exposure window, you may have gotten absolutely destroyed (X)

What makes this one particularly nasty:

It specifically targets AI developer tooling. Hooks into Claude Code’s settings.json and VS Code JSON to re-execute on every tool event.

npm uninstall doesn’t fix it. The malware replicates itself.

If you revoke the GitHub token it uses, it nukes your home directory. A worker process watches the token. If revoked, it scorches the earth.

The fixes (do them today, seriously):

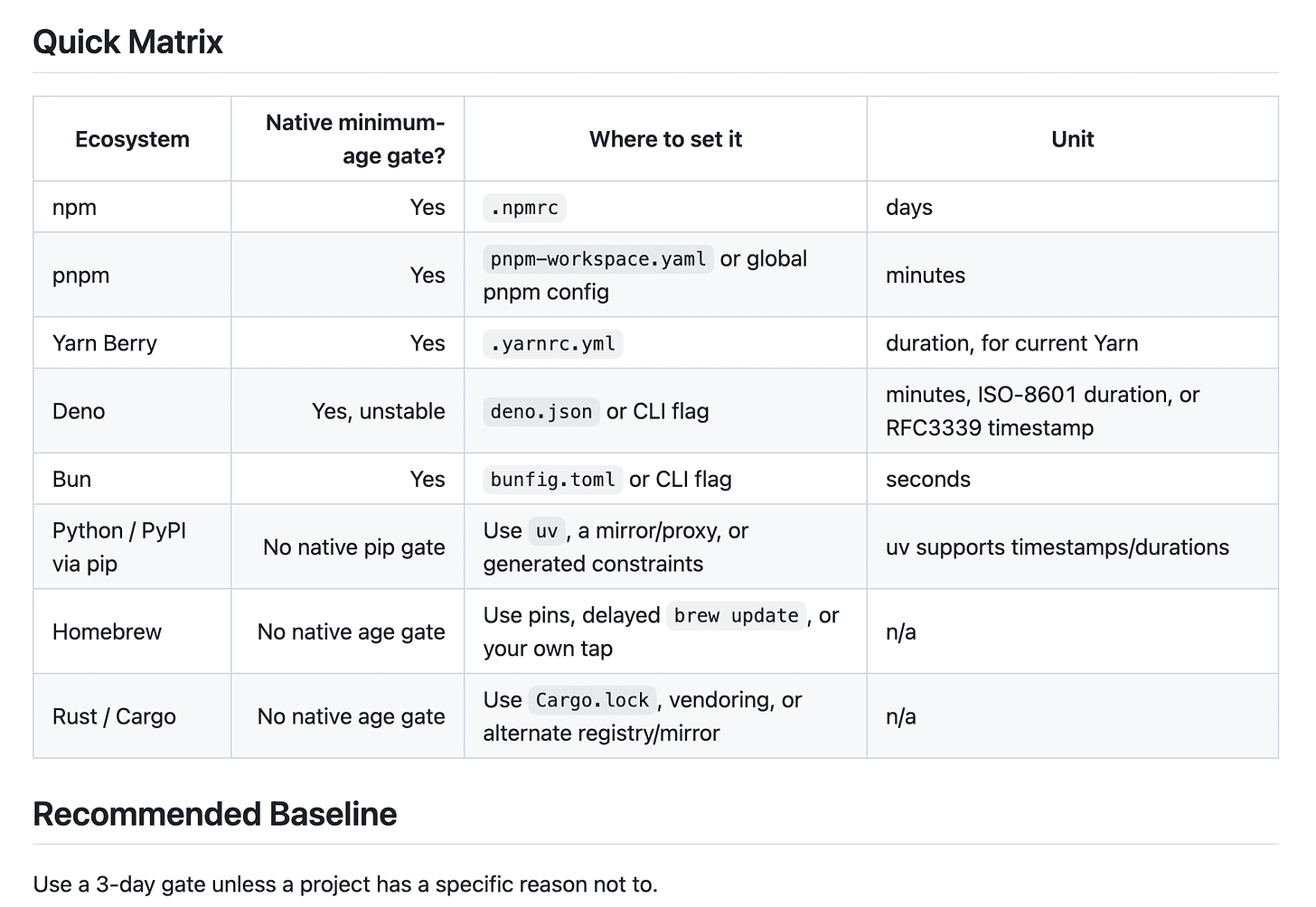

Set a 24-hour minimum age rule on package installs in both npm and pip. Most malware is identified within 24 hours; this is your free moat.

Generate per-agent API keys. Never reuse keys across agents. If one gets compromised, you can revoke that one specifically.

Run development in sandboxes (more on this in a sec — CoreWeave Sandboxes just launched 👀).

Have rolling rsync backups outside of Git. Nisten’s advice: if you get hit, you can nuke everything and restore from a backup that doesn’t depend on tokens.

I’ve asked Codex to review how to set these minimum age rules across your system and published the result here. Please review it, and then ask your agent to implement those rules on your machines!
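If you want to see what the 24-hour rule boils down to, here is a minimal Python sketch of the check itself. It does not configure npm or pip (the exact settings depend on your tool versions, so check their docs). It only filters versions by upload time, assuming input shaped like the `releases` field of PyPI's JSON API, where each file carries an `upload_time_iso_8601` timestamp. The function name `versions_past_min_age` is made up for illustration.

```python
from datetime import datetime, timedelta, timezone

MIN_AGE = timedelta(hours=24)  # the "free moat" window from the list above


def versions_past_min_age(releases, now=None, min_age=MIN_AGE):
    """Return versions whose newest uploaded file is at least `min_age` old.

    `releases` maps version -> list of file dicts, each with an
    `upload_time_iso_8601` string such as "2026-05-01T09:00:00.000000Z".
    """
    now = now or datetime.now(timezone.utc)
    allowed = []
    for version, files in releases.items():
        if not files:
            continue  # nothing was uploaded for this version, skip it
        newest_upload = max(
            datetime.fromisoformat(f["upload_time_iso_8601"].replace("Z", "+00:00"))
            for f in files
        )
        if now - newest_upload >= min_age:
            allowed.append(version)
    return allowed
```

A version published a few hours ago is simply invisible to the installer until it ages past the window, which is usually long enough for a compromised release to be reported and pulled.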

Nisten posted a scanner for this attack — I sent the link to my Hermes agent and asked it to run, and within minutes I had confirmation I wasn’t exposed. This is exactly the kind of thing where having a trusted agent matters. (Wolfram did the same thing with the link Ryan posted — gave it to his agent and let it audit his entire system.)

We’re going to go through a turbulent period as offensive AI capabilities outpace defensive ones, but I’m optimistic. Just like HTTPS came after HTTP wasn’t secure enough, we’ll figure it out. Just stay vigilant!

Tools & Agentic Engineering

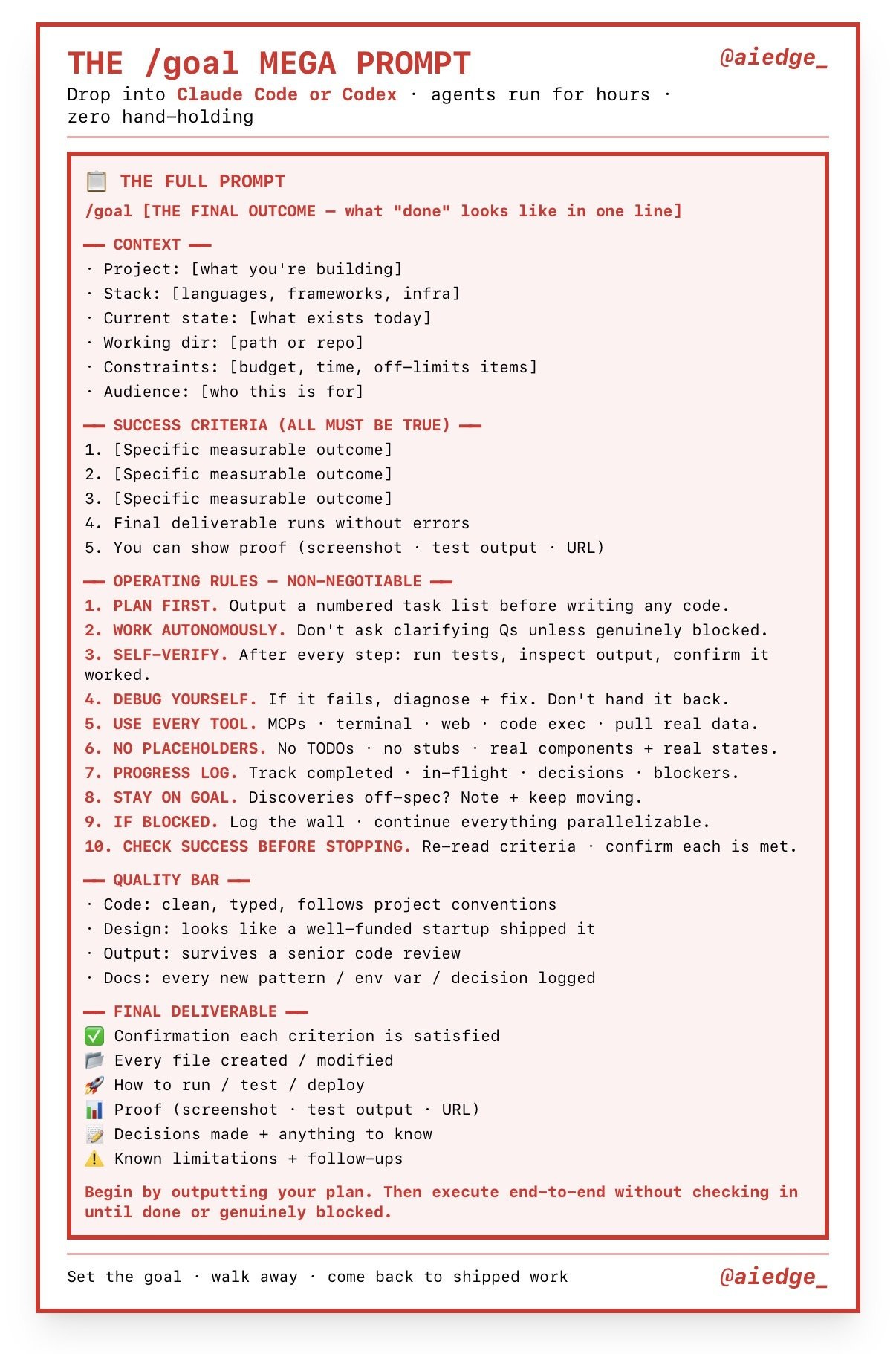

/goal: The New Ralph Loop, Productized across Codex, Claude Code and Hermes! (X)

If you’ve been listening since January, you remember our Ralph Loop episode — one of the biggest episodes we ever did. Now, every major coding harness has implemented it as a built-in command called /goal.

The pattern: you give the agent a measurable success condition like “stop when auth tests pass” or “stop at 90% coverage” or “fix every failing test until npm test exits 0 without modifying any file outside the /auth directory” — and the agent loops autonomously until that condition is met. A small validation model runs inside the loop to check whether goal conditions are met at each step.
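Stripped of the agent itself, the pattern is a loop with a measurable exit condition. The sketch below is a minimal Python illustration, not the Codex, Claude Code, or Hermes implementation: `run_until_goal` and `command_exits_zero` are hypothetical helpers, the validation model is replaced by a plain callable, and an iteration cap is added as an assumption so the loop cannot run forever.

```python
import subprocess


def command_exits_zero(cmd):
    """Goal check: run a command (list of args) and report whether it exited 0."""
    return subprocess.run(cmd, capture_output=True).returncode == 0


def run_until_goal(work_step, goal_met, max_iterations=20):
    """Call `work_step` until `goal_met()` is true.

    Returns the number of iterations used, or None if the budget ran out.
    `work_step` stands in for one agent pass; `goal_met` stands in for the
    validator that checks the success condition after each pass.
    """
    for iteration in range(1, max_iterations + 1):
        work_step(iteration)
        if goal_met():
            return iteration
    return None  # budget exhausted without reaching the goal
```

For the “stop when npm test exits 0” example, the goal check would be something like `lambda: command_exits_zero(["npm", "test"])`, with `work_step` handing the failing output back to the agent for another pass.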

Codex shipped it first. Claude Code copied it (rushed, per multiple developers). Hermes has it. And the early head-to-head comparisons are not great for Anthropic — one developer ran Codex /goal overnight and got nearly 100 commits, while Claude Code reportedly struggled on the same tasks. Multiple folks switched back to GPT-5.5.

Yam’s been running /goal 24/7 for an entire week. Building things like a custom terminal from a long PRD. The level of “fear of missing agent time” in the SF AI scene right now is genuinely a meme — people are walking around in clamshell mode with laptops open in their bags because they don’t want their agents to stop.

This is the philosophical opposite of one-shotting. It’s for the kinds of tasks where the model is guaranteed to run out of context: architecture cleanups, auth flow consolidation, test suite hardening, TypeScript strictness migrations. Tasks that would have required you to sit there for hours hitting “continue.”

Ryan’s right that this is going to change businesses forever. You can wrap /goal around measurable business outcomes — coverage targets, latency improvements, dead code elimination — and just unleash an agent against them.

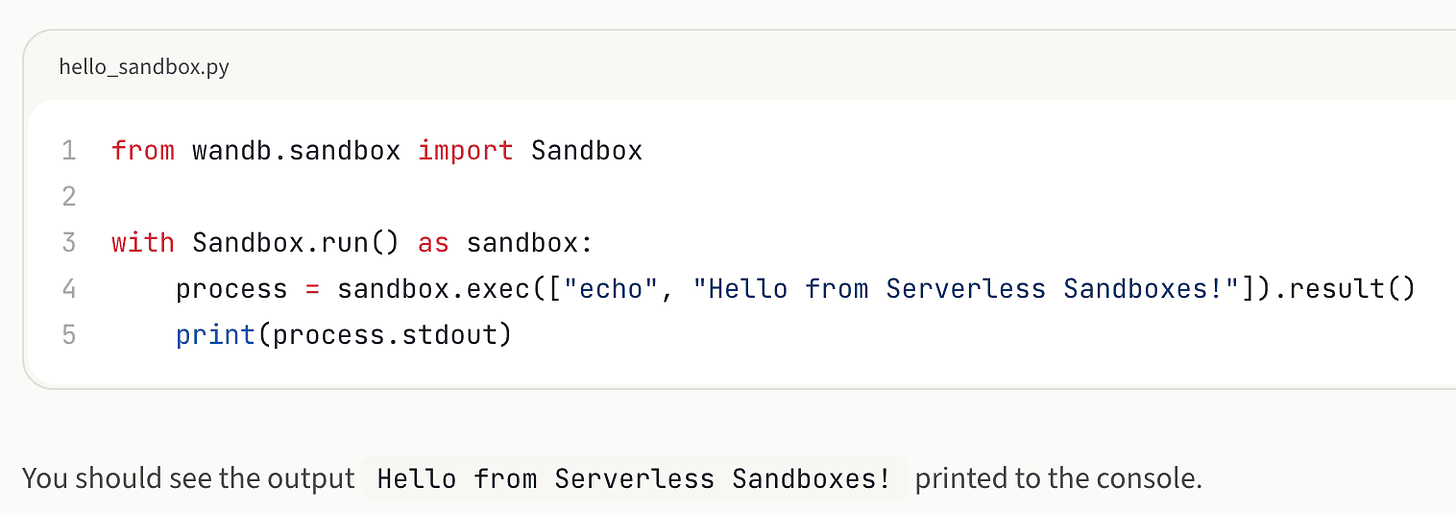

This Week’s Buzz: CoreWeave Sandboxes Goes Live 📦

Breaking news from this morning! CoreWeave (the parent of Weights & Biases) just launched Sandboxes in preview, and it’s directly relevant to literally every conversation we just had about supply chain security and agents that need isolated execution environments.

Here’s what you get: sandboxes via the W&B SDK. Spin up isolated CPU environments where your agents can execute code, clone repos, install dependencies — all the things you do NOT want happening on your main machine after the TanStack situation. Wolfram immediately pointed out the obvious use case: agentic evaluations need fresh, consistent environments per test, then teardown. Sandboxes solve exactly that.

What makes this notable: the same infrastructure powers 9 out of 10 major AI labs (Meta, Anthropic, OpenAI, etc) for training their models. CoreWeave’s sandbox product runs on that same infra. And historically CoreWeave hasn’t catered to the developer market — they sell GPUs to enterprises. With CoreWeave Inference and now CoreWeave Sandboxes available via W&B, individual developers can now spin up the same infrastructure the foundation labs use.

Pricing is generous in preview. Give it a try, give us feedback, and we’ll do a deep dive next week with the team that built it.

AI Art: Krea 2 — A Foundation Model Built From Scratch 🎨

We were really lucky to have Vic Perez, co-founder and CEO of Krea, on the show to talk about Krea 2 — their first foundation image model trained completely from scratch (X, Blog).

I have a lot of love for Krea — they let me mess around on their H100 cluster way back when I was just getting into image generation, before ThursdAI even existed. Vic was super generous with that and I’ll always be grateful.

The Krea 2 philosophy is what I find genuinely interesting. Vic used an amazing analogy on the show: using existing image models is like riding a horse. You can steer it down the path, you can speed it up and slow it down, but if you try to take it off the path — into “grainy,” “artistic,” “esoteric,” genuinely weird latent space — there are big walls and the horse won’t go there. That’s the over-post-training problem. Models are too safe, too constrained, too opinionated. They’ve optimized away the strange and beautiful edges of the latent space that early Stable Diffusion users loved.

Krea 2 is built to be raw, flexible, unopinionated, and unconstrained. If your prompt is vague, the model brings you new ideas rather than four variations of the same thing. The opposite of what most models do.

Other features:

Style transfer with up to 4 simultaneous reference images — extracts palette, texture, composition

Moodboards — upload a bunch of reference images and the system analyzes concepts and themes across them, not just style

~15 second generation times

Available now for Max and Business tier users, API confirmed coming

They partnered with Black Forest Labs on their earlier Krea 1 model, but Vic was clear about why they had to go build their own: the open-source ecosystem isn’t tunable enough to build the creative tools they want to build. So nearly half the company spent 6-7 months on Krea 2. The first model is intentionally conservative; the next one is going to push further into the weird.

Big respect for any team training a foundation model from scratch in 2026!

Wrap Up

That’s a wrap on what was, on paper, a “chill week” but turned into a 2.5 hour show because we kept finding new threads to pull on. The migration off OpenClaw, the interaction models bet from TML, the Musk v. Altman disclosures, CoreWeave Sandboxes finally going live — there’s a lot moving here.

Next week I’m heading to Google I/O. Expect a lot of news, because every time Google I/O is about to happen, OpenAI tries to cut them off, and xAI typically jumps in last. The last two I/Os have been wild. I’ll be reporting live from the ground.

Until then — install the 24-hour package rule, generate per-agent API keys, give your agents a sandbox to play in, and maybe go try Hermes if you’ve been on OpenClaw and feeling the pain. Or Codex. Anything, really, where things just work again.

Thanks for hanging with us. It’s so good to be back. 🫡

TL;DR - May 14, 2026

Hosts and Guests

Alex Volkov - AI Evangelist, Weights & Biases (@altryne)

Co-Hosts - @WolframRvnwlf, @yampeleg, @nisten, @ldjconfirmed, @ryancarson

Guest: Victor Perez @viccpoes - Co-founder & CEO, Krea

Big Co LLMs + APIs

Meta launches Muse Spark voice conversations across Meta AI app, WhatsApp, Instagram, FB, and Ray-Ban Meta glasses with real-time image gen, live camera AI, and instant Reels/maps integration (X, Announcement)

Mira Murati’s Thinking Machines Lab drops Interaction Models: 276B MoE (12B active) trained from scratch for native real-time multimodal collaboration; 77.8 on FD-bench v1.5, 0.40s turn-taking latency, full-duplex audio/video/text (X, Blog)

Musk v. Altman trial highlights: Musk wanted 90% equity, predicted “0%” success for OpenAI in 2018, Microsoft is now smallest mega-investor (SoftBank/Nvidia each ~$30B), Sutskever confirms “consistent pattern of lying” memo under oath

Anthropic adds separate Claude Agent SDK monthly credits to Pro/Max/Team/Enterprise starting June 15, 2026

OpenAI launches Daybreak, a frontier AI cybersecurity platform pairing GPT-5.5 + Codex + partners like Cloudflare (X)

Open Source AI

Fastino Labs GLiGuard: 300M-parameter guardrail model matching SOTA at 23-90x smaller size, 16x higher throughput, Apache 2.0 (X, GitHub)

Meta Sapiens2: Family of 6 ViT models (0.1B-5B) trained on 1B human images, SOTA on pose, segmentation, normals, and pointmaps (X, HF)

TanStack supply chain attack (mini Shai Hulud worm) — targets AI dev tooling, doesn’t uninstall, nukes home dir if token revoked. Install 24-hour package rule immediately (X)

Nous Research releases TST (Token Superposition Training): 2-3x wall-clock speedup at matched FLOPs without architecture changes (X)

Tools & Agentic Engineering

/goal command now in Codex, Claude Code, and Hermes — productized Ralph loop. Set measurable success condition, agent iterates until done. Codex implementation winning early comparisons over Claude Code (X, Docs)

Hermes from Nous Research passes OpenClaw as #1 CLI agent on OpenRouter; adds background computer use via TryCUA (X)

Artificial Analysis Coding Agent Index: benchmarks model + harness combos. Opus 4.7 in Cursor CLI leads at 61, costs vary 30x across combos, GLM-5.1 tops open-weight at 53 (X)

This Week’s Buzz

CoreWeave Sandboxes launches in preview via W&B SDK — same infra that powers 9/10 major foundation labs now available to developers for agent isolation, evals, and RL rollouts (Docs)

Vision & Video

AI Art & Diffusion