Hey friends, welcome to ThursdAI Oct - 19. Here’s everything we covered + a little deep dive after the TL;DR for those who like extra credit.

Also, here’s the reason why the newsletter is a bit delayed today: I played with Riffusion to try and get a cool song for ThursdAI 😂

ThursdAI October 19th

TL;DR of all topics covered:

Open Source MLLMs

Adept open sources Fuyu 8B - multi modal trained on understanding charts and UI (Announcement, Hugging face, Demo)

Teknium releases Open Hermes 2 on Mistral 7B (Announcement, Model)

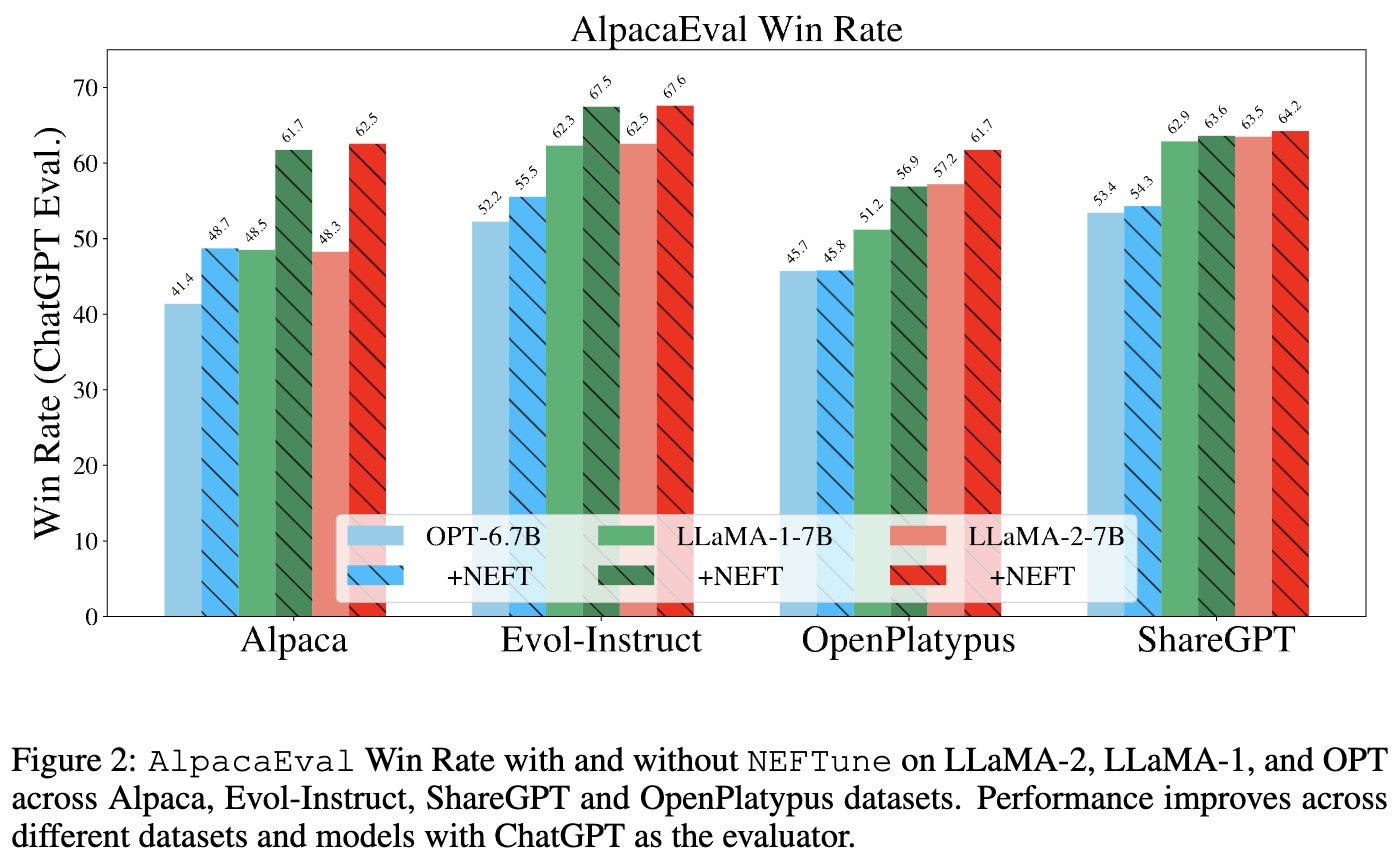

NEFTune - a "one simple trick" to get higher quality finetunes by adding noise (Thread, Github)

Mistral is on fire, most fine-tunes are on top of Mistral now

Big CO LLMs + APIs

Inflection Pi got internet access & New therapy mode (Announcement)

Mojo 🔥 is working on Apple silicon Macs and has LLaMa.cpp level performance (Announcement, Performance thread)

Anthropic Claude.ai is rolled out to 95 additional countries (Announcement)

Baidu AI announcements - ERNIE 4, multimodal foundational model, integrated with many applications (Announcement, Thread)

Vision

Meta is decoding brain activity in near real time using non intrusive MEG (Announcement, Blog, Paper)

Baidu YunYiduo drive - Can use text prompts to extract precise frames from video, and summarize videos, transcribe and add subtitles. (Announcement)

Voice & Audio

Near real time voice generation with play.ht - under 300ms (Announcement)

I'm having a lot of fun with Airpods + chatGPT voice (X)

Riffusion - generate short songs with sound and singing (Riffusion, X)

AI Art & Diffusion

Adobe releases Firefly 2 - lifelike and realistic images, generative match, prompt remix and prompt suggestions (X, Firefly)

DALL-E 3 is now available to all chatGPT Plus users (Announcement, Research paper!)

Tools

LMStudio - a great and easy way to download models and run on M1 straight on your mac (Download)

Other

ThursdAI is adhering to the techno-optimist manifesto by Pmarca (Link)

Open Source MLLMs

Welcome to multimodal future with Fuyu 8B from Adept

We've seen and covered many multimodal models before, and in fact, most models will soon be multimodal, so get ready to say "MLLMs" or... we'll come up with something better.

Most of them so far have been pretty heavy; IDEFICS, for example, was 80B parameters.

This week we received a new 8B multimodal model with great OCR abilities from Adept, the same folks who gave us Persimmon 8B a few weeks ago. In fact, Fuyu is a type of persimmon tree (we see you Adept!)

In the podcast I talked about having 2 separate benchmarks for myself, one for chatGPT or any multimodal model coming from huge companies, and another for open source/tiny models. Given that Fuyu is a tiny model, it's quite impressive! Its OCR capabilities are impressive, and the QA is really on point (as well as captioning).

An interesting thing about the Fuyu architecture is that, because it doesn't use the traditional vision encoders, it can scale to arbitrary image sizes and resolutions, and is really fast (large image responses under 100ms).

Additionally, during the release of Fuyu, Arushi from Adept authored a thread about how bad visualQA evaluation datasets are, which... they really are bad, and I hope we get better ones!
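Here's a rough sketch of why skipping a fixed-resolution vision encoder helps with arbitrary image sizes. It assumes (this is not stated above, so treat it as an assumption) that the image is cut into fixed-size patches that are fed to the model row by row, with a marker at the end of each row. The function name, the 30-pixel patch size, and the `<image-newline>` marker are all illustrative, not Adept's actual code.

```python
def patch_sequence(height, width, patch=30):
    """Lay out an image of any size as a row-major sequence of patch boxes.

    Each patch is a (top, left, bottom, right) box; a marker string is
    appended after every row so the model can tell where a row ends.
    Edge patches are simply clipped here (a real model would likely pad).
    """
    seq = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            box = (top, left, min(top + patch, height), min(left + patch, width))
            seq.append(box)
        seq.append("<image-newline>")  # hypothetical end-of-row marker
    return seq


# A 60x90 image becomes 2 rows of 3 patches, plus one marker per row.
seq = patch_sequence(60, 90, patch=30)
```

Because the sequence length simply grows with the image, nothing in this layout is tied to one fixed resolution, which is the property the paragraph above is pointing at.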

NEFTune - 1 weird trick of adding noise to embeddings makes models better (announcement thread)

If you guys remember, a "this one weird trick" was discovered by KaiokenDev back in June, to extend the context window of LLaMa models, which then turned into RoPE scaling and YaRN scaling (which we covered in a special episode with the authors)

Well, now we have a similar "1 weird trick": by just adding some noise to embeddings at training time, model performance can improve by up to 25%!

The results vary per dataset of course. However, considering how easy it is to try, we should be happy that "free lunch" tricks like this exist. It's literally as simple as doing this in your forward pass:

    if training:
        return orig_embed(x) + noise
    else:
        return orig_embed(x)
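To make the snippet above a bit more concrete, here's a minimal sketch in plain Python (no ML framework, so it runs anywhere). It assumes the noise is drawn uniformly from [-1, 1] and scaled by `alpha / sqrt(L * d)`, where `L` is the sequence length and `d` the embedding size, which is how I understand the NEFTune recipe. The function name and the default `alpha=5.0` are illustrative choices, not values taken from the thread.

```python
import math
import random


def neftune_embed(embeddings, alpha=5.0, training=True, rng=None):
    """Add NEFTune-style uniform noise to a sequence of token embeddings.

    embeddings: list of L token vectors, each a list of d floats.
    alpha: noise strength (illustrative default; tune per dataset).
    """
    if not training or not embeddings:
        # Inference (or empty input): return the embeddings unchanged.
        return [list(row) for row in embeddings]

    rng = rng or random.Random()
    seq_len, dim = len(embeddings), len(embeddings[0])
    # Scale by alpha / sqrt(L * d): the per-element noise gets smaller
    # for longer sequences and wider embeddings.
    scale = alpha / math.sqrt(seq_len * dim)
    return [
        [value + scale * rng.uniform(-1.0, 1.0) for value in row]
        for row in embeddings
    ]


tokens = [[0.0] * 8 for _ in range(4)]  # 4 tokens, 8-dim embeddings
noisy = neftune_embed(tokens, alpha=5.0, training=True, rng=random.Random(0))
clean = neftune_embed(tokens, training=False)  # identical to the input
```

In a real fine-tune you'd apply the same idea to the output of the model's embedding layer, and only while training.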

Notably, we had a great guest, Wing Lian, the maintainer of Axolotl (a very popular tool for streamlining fine-tuning), chime in and say that in his tests, and among the Discord folks, they couldn't reproduce some of these claims (they add everything that's super cool and beneficial for finetuners to their library). So it remains to be seen how far this "trick" scales, and what else needs to be done here.

Similarly, back when the context extension trick was discovered, there was a lot of debate about its effectiveness from Ofir Press (author of ALiBi, another context scaling method), and further iterations of the trick turned it into a paper and a robust method, so this development is indeed exciting!

Mojo 🔥 now supports Apple silicon Macs and has LLaMa.cpp level performance!

I've been waiting for this day! We've covered Mojo from Modular a couple of times, and it seems that the promise behind it is starting to materialize. Modular promises an incredible, unbelievable 68,000X boost over vanilla Python, and it's been great to see that develop.

Today (October 19) they released Mojo support for Apple silicon, which most developers use. It's native support, and you can use it right now via the CLI.

A friend of the pod, Aydyn Tairov, hopped on the live recording and talked to us about his LLama.🔥 project (Github) that he ported to Apple silicon, and showed an incredible, LLaMa.cpp-like performance, without crazy optimizations!

Aydyn collected many LLaMa implementations, including Llama.cpp, LLama.c by Karpathy and many others, and included his LLama.mojo (or Llama.🔥), and saw that the Mojo one comes very close to LLama.cpp and significantly beats the Rust, Go and Julia examples (on specific baby llama models).

The Mojo future is bright, and we'll keep updating with more, but for now, go play with it!

Meta is doing near-real time brain → image research! 🤯

We've talked about fMRI (and EEG) signals being translated to diffusion imagery before, and this week, Meta has shown that while going from fMRI signals to imagery is pretty crazy on its own, using something called MEG (non-invasive magnetoencephalography) they can generate and keep generating images based on the brain signals, in near real time!


I don't have a LOT to say about this topic, besides the fact that as an Aphant (I have Aphantasia) I can't wait to try this on myself and see what my brain actually "sees"

Baidu announces ERNIE 4 and a bunch of AI-native products including maps, drive, autonomous ride hailing and more.

Baidu has just wrapped up their biggest conference of the year, BaiduWorld, where they announced a new version of their foundational model called ERNIE 4, which is a multimodal model (of unknown size) and is now integrated into quite a few of their products, many of which are re-imagined with AI.

A few examples beyond a basic LLM chat-like interface: a revamped map experience with a built-in AI assistant (with voice) to help you navigate and find locations; a new office management app called InfoFlow that handles appointments and time slots, and apparently even does travel booking; and an AI "Google Drive"-like product called YunYiduo that can find video content based on what was said and when, pinpoint specific frames, summarize, and do a bunch of other incredible AI stuff. Here's a translated video of someone interacting with YunYiduo and asking for a bunch of stuff one after another.

Disclosure: I don't know if the video is edited or in real time.

Voice & Audio

Real time voice for agents is almost here, chatGPT voice mode is powerful

I've spent maybe 2 hours this week with chatGPT in my ear, using the new voice mode + AirPods. It's almost like... being on a call with chatGPT. I started talking to it in the store, asking for different produce to buy for a recipe, then drove home and asked it to "prepare" me for the task (I don't usually cook this specific thing), and then during my cooking, I kept talking to it, asking for next steps. With the new iOS, the voice mode shows up as a live activity and you can pause it and resume without opening the app.

It was literally present in my world, without me having to watch the screen or type.

It's a completely new paradigm of interactions when you don't have to type anymore, or pick up a screen and read, and it's wonderful!

Play.ht shows off an impressive <300ms voice generation for agents

After spending almost 2 hours talking to chatGPT, I was thinking, why aren't all AI assistants like this, and the answer was, well... generating voice takes time, which takes you out of your "conversation flow"

And then today, play.ht showed off a new update to their API that generates voice in <300ms, and that can be a clone of your voice, with your accent and all. We truly live in unprecedented times.

I can't wait for agents to start talking and seeing what I see (and remember everything I heard, via Tab or Pendant or Pin)

Riffusion is addictive, generate song snippets with life-like lyrics!

We've talked about music gen before, however, Riffusion is a new addition and is now generating short song segments with VOICE! Here are a few samples, and honestly, I've procrastinated writing this newsletter because it's so fun to generate these, and I wish they went for longer!

AI Art & Diffusion

Adobe releases Firefly 2, which is significantly better at skin textures, realism, and hands. Additionally, they have added a style transfer feature which is wonderful: upload a picture of something with a style you'd like, and your prompt will be generated in that style. It works really, really well. The extra details on the skin are just something else. I did cherry pick this example, though, and the other hands were a dead give-away. Still, the hands are getting better across the board!

Plus they have a bunch of prompt features, like prompt suggestion, ability to remix other creations and more, it's really quite developed at this point.

Also: DALL-E 3 is now available to 100% of Plus users and enterprise, have you tried it yet? What do you think? Let me know in replies!

That’s it for October 19. If you're into AI engineering, make sure you listen to the previous week's podcast, where Swyx and I recapped everything that happened on stage and off it at the seminal AI Engineer summit. And make sure to share this newsletter with your friends who like AI!

For those who are 'in the know', emoji of the week is 📣, please DM or reply with it if you got all the way here 🫡 and we'll see you next week (where I will have some exciting news to share!)