Hihi, this is Alex, from Weights & Biases, coming to you live from Yosemite! Well, actually I’m writing these words from a fake virtual Yosemite that appears above my kitchen counter, as I’m now a Vision Pro user, and I will force myself to work inside this thing and tell you if it’s worth it. I will also be on the lookout for anything AI-related in this new spatial computing paradigm, like THIS for example!

But back to reality for a second: we had quite the show today! We had the pleasure of having Junyang Justin Lin, a dev lead at Alibaba, join us to talk about Qwen 1.5 and QwenVL. Then we did a deep dive into quite a few acronyms I’ve been seeing on my timeline lately, namely DSPy, ColBERT and (the funniest one) RAGatouille, and chatted with Connor from Weaviate and Benjamin, the author of RAGatouille, about what it all means! A really, really cool show today; I hope you don’t only read the newsletter but also listen on Spotify, Apple or right here on Substack.

TL;DR of all topics covered:

Open Source LLMs

Alibaba releases a BUNCH of new Qwen 1.5 models, including a tiny 0.5B one (X announcement)

Abacus fine-tunes Smaug, top of the HF leaderboard, based on Qwen 72B (X)

LMSYS adds more open source models, sponsored by Together (X)

Jina Embeddings fine-tuned for code

Big CO LLMs + APIs

Google rebrands Bard to Gemini and launches Gemini Ultra (Gemini)

OpenAI adds image metadata to DALL-E images (Announcement)

OpenAI API keys can now be restricted per key (Announcement)

Vision & Video

Voice & Audio

MetaVoice, a new Apache 2.0 licensed TTS (Announcement)

AI Art & Diffusion & 3D

Microsoft added DALL-E editing with "Designer" (X thread)

Stability AI releases an update to SVD: Stable Video Diffusion 1.1 launches with a web UI and much nicer videos

Deep Dive with Benjamin Clavie and Connor Shorten show notes:

Benjamin's announcement of RAGatouille (X)

Connor's chat with Omar Khattab (author of DSPy and ColBERT) on the Weaviate Podcast

Very helpful intro to ColBERT + RAGatouille - Notion

Open Source LLMs

Alibaba releases Qwen 1.5, ranging from 0.5B to 72B (DEMO)

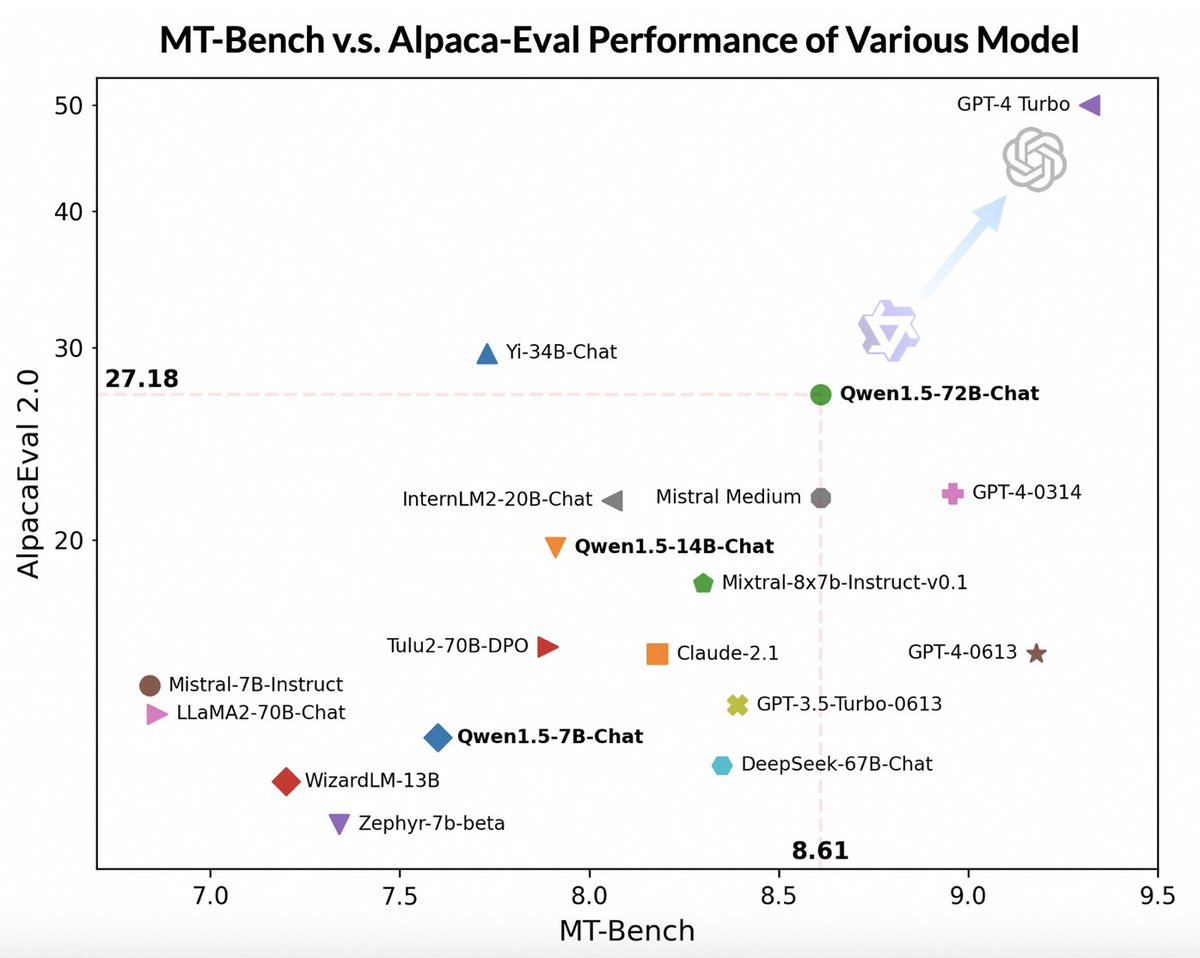

With 6 sizes, including 2 new ones (0.5B and 4B), ranging from a tiny 0.5B parameter model through an interesting 4B all the way to a whopping 72B, Alibaba open sources additional Qwen checkpoints. We had the honor of hosting friend of the pod Junyang Justin Lin again. He talked to us about how these sizes were selected, noted that even though this model beats Mistral Medium on some benchmarks, it remains to be seen how well it performs in human evaluations, and shared a bunch of details about open sourcing it.

The models were released with all the latest and greatest quantizations (GPTQ, AWQ and GGUF), a significantly improved context length (32K), and support for both Ollama and LM Studio (which I helped make happen, and I'm very happy with the way the ThursdAI community is growing and connecting!)

We also chatted about QwenVL Plus and QwenVL Max, their API-only vision-language models (Max being the strongest), and had the awesome Piotr Skalski from Roboflow on stage to chat with Junyang about those models!

To me, a sign of ThursdAI's success is when the authors of the things we talk about come on the show, and this is Junyang's second appearance. He joined at midnight at the start of the Chinese New Year, so it's greatly appreciated; definitely give him a listen!
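To put these sizes and quantizations in perspective, here is a rough back-of-the-envelope sketch (ours, not from the Qwen team) of how much memory the weights alone need at different precisions. It assumes memory is simply parameters × bits per parameter / 8, and it ignores the KV cache, activations, and the small per-block overhead that formats like GPTQ, AWQ and GGUF add for scales, so treat the numbers as lower bounds.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in decimal GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# The six Qwen 1.5 sizes, in billions of parameters, as listed in the episode.
QWEN15_SIZES_B = [0.5, 1.8, 4, 7, 14, 72]

for size_b in QWEN15_SIZES_B:
    # 32-bit and 16-bit floats vs. a typical 4-bit quantization
    cells = [f"{bits}-bit: {weight_memory_gb(size_b * 1e9, bits):6.1f} GB" for bits in (32, 16, 4)]
    print(f"{size_b:>4}B | " + " | ".join(cells))
```

At 32-bit, the 0.5B model works out to about 2 GB, which lines up with the "two gigs" Nisten mentions in the transcript, while a 4-bit 72B model still needs roughly 36 GB just for weights.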

Abacus Smaug climbs to the top of the Hugging Face leaderboard

Junyang also mentioned that Smaug, from Abacus, is now at the top of the leaderboard. It's a fine-tune of the previous Qwen-72B, not even this new one, and the first model to achieve an average score of 80. This is an impressive showing from Abacus; they haven't released any new data yet, but they said they are planning to!

They also said that they are planning to fine-tune Miqu, which we covered last time: the Mistral leak that Arthur Mensch, the CEO of Mistral, acknowledged.

The techniques Abacus used to fine-tune Smaug will be released in an upcoming paper!

Big CO LLMs + APIs

Welcome Gemini Ultra (bye bye Bard)

Bard is no more; get ready to meet Gemini. It's really funny, because we keep getting confusing naming from huge companies like Google and Microsoft. Just a week ago, Bard with Gemini Pro shot up the LMSYS charts, even though the regular Gemini Pro API didn't come close, and now we're supposed to forget that Bard even existed? 🤔

Anyhow, here we are: Big G's answer to GPT-4, exactly 10 months, 3 weeks, 4 days and 8 hours later, but who's counting?

So what do we actually get? A $20/month tier called Gemini Advanced (which runs Ultra 1.0; yes, the naming confusion continues). We get a longer context (how much?) plus iOS and Android apps (though I couldn't find it on iOS, so maybe it hasn't rolled out yet).

Gemini also replaces Google Assistant for Android users who opt in (MKBHD was somewhat impressed, but not super impressed), and Google is leaning into its advantages, including home support!

It also looks like Gemini is optimized ONLY for English.

We had quite the conversation on stage with folks who upgraded and started using it. They noticed that Gemini is a better role-player and less bland, but also that it doesn't yet support uploading documents (only images), and that the context window is very limited: some said 8K and some 32K, but definitely on the lower side.

Also from Google: a llama.cpp wrapper called localllm (Blog)

OpenAI watermarks DALL-E images and adds per key API limits (finally) (Blog)

OpenAI is now adding C2PA metadata to images made by DALL-E 3, whether you're chatting with ChatGPT or using their API. It's a way to show that DALL-E 3 actually created those images. It's only for images right now, not for text or voice. Adding this metadata can make files up to 32% bigger, but it doesn't affect image quality. The metadata records whether the source was DALL-E 3, ChatGPT, or the API, and includes cryptographic signatures. Just a heads up, though: C2PA isn't perfect, and the metadata can be stripped, either on purpose or by accident.

They also released a developer-experience update that lets you track usage and also restrict usage per API key! Very, very needed and helpful!

This week's Buzz (What I learned with WandB this week)

The first part of our live series with the Growth ML team went live, and it was AWESOME!
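Since this metadata is just extra data inside the file, it's easy to check for, and just as easy to strip. The sketch below assumes a PNG file and assumes, based on our reading of the C2PA specification, that the manifest store lives in a PNG chunk of type `caBX` (worth double-checking against the spec). It only detects the chunk; it does not validate the manifest's signatures, which requires a proper C2PA library. `make_chunk`, `has_c2pa_manifest` and `strip_c2pa` are hypothetical helper names for illustration.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"
C2PA_CHUNK_TYPE = b"caBX"  # assumption: PNG chunk type used for the C2PA manifest store


def make_chunk(chunk_type: bytes, payload: bytes) -> bytes:
    """Build a raw PNG chunk: 4-byte length, 4-byte type, payload, 4-byte CRC."""
    crc = zlib.crc32(chunk_type + payload) & 0xFFFFFFFF
    return struct.pack(">I", len(payload)) + chunk_type + payload + struct.pack(">I", crc)


def iter_chunks(data: bytes):
    """Yield (chunk_type, raw_chunk_bytes) for every chunk in a PNG byte string."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    pos = len(PNG_SIGNATURE)
    while pos + 12 <= len(data):
        (length,) = struct.unpack(">I", data[pos:pos + 4])
        chunk_type = data[pos + 4:pos + 8]
        end = pos + 12 + length  # length field + type + payload + CRC
        if end > len(data):
            raise ValueError("truncated PNG chunk")
        yield chunk_type, data[pos:end]
        pos = end
        if chunk_type == b"IEND":
            break


def has_c2pa_manifest(data: bytes) -> bool:
    """True if the PNG contains a chunk of the (assumed) C2PA type."""
    return any(chunk_type == C2PA_CHUNK_TYPE for chunk_type, _ in iter_chunks(data))


def strip_c2pa(data: bytes) -> bytes:
    """Return a copy of the PNG with every C2PA chunk removed."""
    kept = (raw for chunk_type, raw in iter_chunks(data) if chunk_type != C2PA_CHUNK_TYPE)
    return PNG_SIGNATURE + b"".join(kept)


# Synthetic example (not a displayable image); for a real file use open(path, "rb").read().
ihdr = make_chunk(b"IHDR", struct.pack(">IIBBBBB", 1, 1, 8, 6, 0, 0, 0))
fake = PNG_SIGNATURE + ihdr + make_chunk(C2PA_CHUNK_TYPE, b"manifest") + make_chunk(b"IEND", b"")
print(has_c2pa_manifest(fake), has_c2pa_manifest(strip_c2pa(fake)))
```

The stripping function shows why the metadata is fragile: each PNG chunk carries its own CRC, and a lowercase first letter in the chunk type marks it as ancillary, so dropping it leaves an otherwise valid file behind.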

Vision

BRIA - Open-Source background removal (non-commercial)

📷 Introducing Open-Source Background Removal by @BriaAI 📷 Now live on @huggingface, RMBG v1.4 excels in separating foreground from background across diverse categories, surpassing current open models. See demo [https://t.co/DDwncjkYqi] #BriaAI #OpenSource #AI @briaai https://t.co/BlhjMMNWxa

Voice

MetaVoice (hub)

1.2B parameter model.

Trained on 100K hours of data.

Supports zero-shot voice cloning.

Short & long-form synthesis.

Emotional speech.

Best part: Apache 2.0 licensed. 🔥

Powered by a simple yet robust architecture:

Encodec (Multi-Band Diffusion) and GPT + Encoder Transformer LM.

DeepFilterNet to clear up MBD artefacts.

That's it for us this week. This time I bring you both the news segment AND the deep dive in one conversation; hope it's not super long. See you here next ThursdAI! 👏

Full Transcript:

[00:00:00] Intro and housekeeping


[00:00:00] Alex Volkov: You're on ThursdAI, and I think it's time for us to get started with the recording and the introduction.

[00:00:26] Alex Volkov: Happy, happy Thursday everyone! Today is February 8th, 2024. This is the second calendar year ThursdAI is happening in, so I don't know if I need to mention the year or not, but we're well on our way into 2024 and you're here on ThursdAI. ThursdAI is the space, the newsletter, and the podcast to keep you up to date with all of the very interesting things that are happening in the very fast-moving world of AI.

[00:00:58] Alex Volkov: Hopefully by now, all of you already have ThursdAI in your podcast app, wherever you get your podcasts: Spotify, and recently YouTube as well, which is weird. But with this introduction, I will just introduce myself. Hey everyone. My name is Alex Volkov. I'm an AI evangelist with Weights & Biases.

[00:01:15] Alex Volkov: Weights & Biases is the reason this comes to life for you, and there's going to be a little segment about Weights & Biases in the middle here as well. I'm joined on stage often, pretty much every week, by great friends who are experts in their fields, as we talk about everything AI-related. This week especially, we're going to have some interesting things.

[00:01:34] Alex Volkov: To those of you who come back week after week: thank you, we love that you're part of the community, and it's great to see how many people just return. And to those of you who are new: we're here every week, and the community doesn't stop after we finish the space. There's a bunch of spaces. I think our friend AlignmentLab had a space that went on for the full week.

[00:01:55] Alex Volkov: I don't know if he ever slept. That's maybe why he's not here on stage. But we're here every week for two hours, giving you updates in the first hour and then some very interesting deep dives that have been happening for the past few weeks. So I just want to shout out some friends of ours who were recently featured in the deep dives.

[00:02:16] Alex Volkov: We talked with Maxime Labonne, who trained the Beagle series and then also did a deep dive with us about model merging. That was really fun. And on the last deep dive, we talked with the Lilac folks, who are building an open source tool that lets you peer into huge datasets, like datasets with millions of rows, and they chunk and cluster them. And we talked about the importance of datasets in the creation of LLMs, or large language models.

[00:02:46] Alex Volkov: And they've taken huge datasets from folks who usually come up on ThursdAI; Teknium from Nous Research just released their Hermes dataset, for example. And the Lilac folks talked to us about how that can be visualized, so you can see what it is comprised of.

[00:03:03] Alex Volkov: It was quite an interesting conversation about how to approach training and fine-tuning. We haven't often talked about dataset curation and creation, so that conversation was a very nice one. So we have deep dives. I will also say that last weekend I did an interview, and that's probably going to come out as a separate episode.

[00:03:24] Alex Volkov: I interviewed Sasha Zhadan from Moscow, and this was a first for me. I just want to highlight where this weird thing takes me, because that's not ThursdAI, and that's not about the news. It was literally just about AI stuff. So this guy from Moscow, and this episode will be dropping on the ThursdAI podcast soon.

[00:03:42] Alex Volkov: This guy from Moscow built a bot that auto-swipes for him on Tinder. That bot started out using GPT Instruct, then moved to ChatGPT and the like, and then to GPT-4. He talks about how the bot kept improving as AI improved. And then he auto-swiped himself a wife, basically. And this took over Russian X.

[00:04:08] Alex Volkov: I don't know if you guys are on the Russian side of X, but I definitely noticed that's all everybody could talk about. This guy previously also did some shenanigans with OpenAI stuff. So it was a very interesting conversation, unlike anything I've done previously on ThursdAI.

[00:04:21] Alex Volkov: And definitely that's coming more as a human-interest story than anything else, but it's very interesting. His fiancée also joined, and we talked about the morality of this as well. It was really fun. So if that new type of content also interests you, definitely check it out.

[00:04:37] Alex Volkov: That's probably not going to end up on X.

[00:04:40] Alex Volkov: And I think with this, it's time to get started. The usual way we get started here is that I run through everything we have, just so you know what we're going to talk about.

[00:04:52] Alex Volkov: And then we're going to go segment by segment. So that's

[00:04:54] TL;DR and recap of the conversation

[00:04:54] Alex Volkov: Hey everyone, this is a recap of everything we talked about on ThursdAI for February 8th, 2024, and we had a bunch of breaking news today, specifically around the fact that Google finally gave us something. But I'm going to do this recap properly, based on the categories. So let's go. In the category of open source LLMs, we talked about Alibaba releasing a bunch of new Qwen models, specifically under the version number 1.5.

[00:05:33] Alex Volkov: And we had the great pleasure again to talk with Junyang (Justin) Lin from the Qwen team, the tech lead there who pushes for open source. He came up and talked about why this is a 1.5 model and not a 2 model. He also talked about the fact that they released a tiny 0.5 billion parameter one.

[00:05:51] Alex Volkov: This is a very tiny large language model. I think it's really funny to say a tiny large language model, but this is the case. And he talked about multiple releases for Qwen. We also had friend of the pod Piotr Skalski from Roboflow, a vision expert who comes up from time to time, and the author of, I forget the name of the library.

[00:06:12] Alex Volkov: I will remember it and put it in the show notes as well. He came up and had a bunch of plays with the vision part of the Qwen ecosystem, and we talked about QwenVL Plus and QwenVL Max with Justin as well, and about their potential for open sourcing these models. They also released a 72 billion parameter model that's now near the top of the Hugging Face leaderboard, which is super cool.

[00:06:34] Alex Volkov: So definitely a great conversation, and I love it when the authors of the things we talk about come and talk about them on ThursdAI. We then smoothly moved to the next topic, Abacus AI, whose fine-tune is now at the top of the Hugging Face leaderboard. That's based on Qwen 72B, and not even the new one, the previous one, so 1.0.

[00:06:54] Alex Volkov: And that's now the top model on the Hugging Face leaderboard, with an average score of over 80. I think it's the first open source model to do that, and they haven't fully released the process they used to make it so much better on different leaderboards. But they have mentioned that they're going to fine-tune Miqu as well.

[00:07:17] Alex Volkov: It's very interesting, and they're building some other stuff at Abacus as well. Then we moved on to the LMSYS Arena. LMSYS Arena is the place we send you to see which models users actually prefer, versus just the benchmarks and evaluations on Hugging Face.

[00:07:35] Alex Volkov: LMSYS Arena added a bunch of open source models, so shout out OpenChat again. They added another Hermes, the fine-tune Teknium did on top of Mixtral, and they also added a bunch of Qwen versions. LMSYS keeps adding open source models, so you can continuously see which models are better and don't have to judge for yourself, because sometimes that's not very easy.

[00:07:55] Alex Volkov: We also covered Jina embeddings fine-tuned for code, from the company Jina AI, whose representative Bo Wang is a friend of the pod. We talked about their embeddings for code. Bo didn't show up this time, but maybe next time. Then we moved to big companies, LLMs and APIs, and the conversation definitely turned interesting, with multiple folks here on stage paying the new $20 AI tax, let's say, for the rebranded Bard, now called Gemini, and the launch of Gemini Ultra.

[00:08:25] Alex Volkov: And we talked about how long we've waited for Google to actually give us something like this. Now we're getting Gemini Ultra, and Bard is no more: Bard is essentially dead as a brand, and now we're getting the Gemini brand. So if you used to go to Bard, now you go to Gemini, but the brain behind it also improved.

[00:08:41] Alex Volkov: So you get Gemini Pro by default for free, I think, and Gemini Ultra is going to cost you 20 bucks a month. It's free for the next two months, so you can sign up for a trial, and then you'll get Gemini Ultra. And you'll get it not only in the web interface, you also get it in iOS and Android apps. And if you're on Android, it also integrates with the Android Assistant.

[00:09:00] Alex Volkov: That's pretty cool. It has a context length of not very much, I think we said 8K or 16K or so, and some folks contested this in the comments, so we're still figuring out the context length. It looks like the context length is restricted in the UI, less so on the API side, and Gemini Ultra doesn't have an API yet.

[00:09:17] Alex Volkov: So we talked about Gemini Ultra and different things there. We also covered that OpenAI adds image metadata to all DALL-E generations, whether through the UI or through the API. This image metadata can be stripped, so it's not a watermark per se, but it's definitely helpful. OpenAI also gives us a little developer-experience improvement where you can restrict different capabilities per API key.

[00:09:36] Alex Volkov: So if one key gets stolen, you can lock just that one, or you can restrict it to a specific use. In the vision and video category, we talked about the new background removal model called RMBG from Bria AI. It's not commercially licensed, but you can play with it now.

[00:09:57] Alex Volkov: There's a demo I'm going to add to the show notes. It also runs fully in your browser thanks to the efforts of friend of the pod Xenova from Transformers.js. And it's pretty cool to have a model that removes backgrounds in two clicks, with no backend and no servers.

[00:10:14] Alex Volkov: In the voice and audio category, we talked about MetaVoice, a new Apache 2 licensed text-to-speech model, not from Meta, even though it's called MetaVoice. It's pretty decent and has zero-shot voice cloning, which means you can provide a sample of your voice and fairly quickly get your voice speaking back to you, generated. We also talked about breaking news from NVIDIA AI, something called NeMo Canary 1B, an ASR (Automatic Speech Recognition) model that's now at the top of the leaderboards on Hugging Face, and it beats Whisper on everything, including specifically four languages.

[00:10:48] Alex Volkov: It's trained on 85,000 hours of annotated audio and uses a FastConformer encoder as well. We barely covered this, but Microsoft added DALL-E editing with Designer. If you remember, Microsoft also did a rebrand: it used to be called Bing Chat, and now it's called Copilot.

[00:11:07] Alex Volkov: And Copilot now adds capabilities that don't exist in other places, like ChatGPT with DALL-E. Microsoft's DALL-E now involves the Designer thing, and they have cool features where you can edit images on the fly: you can click on different segmented objects in your generated image and say, hey, redo this in a different style.

[00:11:27] Alex Volkov: The video for this is super cool; I'm going to add it to the show notes. And it's very interesting to see Microsoft, with their Copilots, moving beyond the capabilities that exist in ChatGPT. We also briefly mentioned and glanced through this: Stability AI released an update to Stable Video Diffusion, including a web UI you can use now, so it's not only a model, it's a web UI as well. That web UI is pretty cool; if you didn't get access to it, I'll link it in the show notes, and I think it's now possible to register. Much nicer videos, and obviously the model is open source, as much as possible.

[00:11:59] Alex Volkov: So super cool. The web UI shows you other people's video attempts, and you can actually use their prompts to create videos of your own. They have some controls. It's very nice. Then I think we talked a little bit at the end about Vision Pro and my experience with it as it relates to AI.

[00:12:15] Alex Volkov: We didn't dive into Vision Pro, even though this is my new toy in life, and I'm very happy to participate in the renaissance of spatial computing. We covered the intersection of AI and spatial computing. And I think the very interesting part of today's ThursdAI was thanks to two new guests, Benjamin Clavie and Connor from Weaviate: we talked about DSPy, ColBERT, and RAGatouille, which is a library for using ColBERT embeddings.

[00:12:43] Alex Volkov: And we talked about what they mean, and this was a great learning experience for me. If you see these concepts on your timeline and have no idea what they are, I basically played the role of the village dummy, let's say, re-asking the questions about what this means and why we should use it.

[00:13:01] Alex Volkov: And I think this is our show today, folks. This is the quick summary. If I missed anything super big and important, please let me know.

[00:13:08] Open source LLMs and AI news

[00:13:08] Alex Volkov: But otherwise, I think we'll start with open source. All right, welcome to the open source corner. The tradition of ThursdAI is: something releases, I go in the comments and say, hey, I'm going to talk about this on ThursdAI, do you want to join? And sometimes people say yes. This is how we met Justin, or Junyang, here on stage. Junyang is the dev lead for the Qwen team; welcome, Junyang.

[00:13:50] Alex Volkov: It's very late where you are. So I really appreciate your time here. Please feel free to unmute and introduce yourself again. Some folks already know you, but if in case some new folks are listening to us, feel free to introduce yourself. And then let's talk about the stuff that you released.

[00:14:06] New Qwen models 1.5 from Alibaba

[00:14:06] Junyang Lin: Yeah. Thanks Alex. Nice to be at ThursdAI; it's a great program for us to talk about AI. I am Junyang, and you can call me Justin. I'm working in the team for the LLM and LMM. We are now working on the new LLM, Qwen 1.5, and we are also upgrading our vision-language model, QwenVL, to QwenVL Plus and Max.

[00:14:33] Junyang Lin: Plus and Max are not open sourced yet, but we have demos, so you can try them in our Hugging Face organization; you can find our demos there and try Plus and Max. Max is the best one, and I am very confident in the Max demo. And about our language model: this week we are open sourcing Qwen 1.5.

[00:14:58] Junyang Lin: Maybe previously you noticed the Qwen2 code inside Hugging Face Transformers. Yeah, we are moving to new code for you to use our Qwen models, because in the past few months I have been interviewing our users, and they found some problems using our original Qwen code, so I'm moving a step forward.

[00:15:23] Junyang Lin: So this is why we have the Qwen2 model code, but for the models themselves, in our judgment we are still at 1.5, not 2 yet. We're still training the real Qwen 2, so this time we have Qwen 1.5. For Qwen 1.5 we are actually fixing a lot of problems, because there are models like the 7 billion and 14 billion that a lot of people are using, but they are actually quite old.

[00:15:50] Junyang Lin: They were released months ago, and they have some problems: Qwen 14 billion actually only supports around 2 to 4K context length, which is far from enough for a lot of users. So this time, we have upgraded all models to support 32,000 tokens. And for the sizes, we have released more sizes.

[00:16:15] Junyang Lin: Previously, we had 1.8, which was the smallest one. But this time, we have 0.5, only 0.5. I used to think this one was just for experimental usage, but some users on Twitter found that 0.5 can still be used to do something, so if you have any comments on 0.5, you can share them with me. And we also have 4 billion, which is between 1.8 and 7 billion.

[00:16:46] Junyang Lin: The reason we have 4 billion is that when we first released 1.8 billion it was actually popular, because people would like to deploy a small model to devices like cell phones, but they found 1.8 is not good enough for their applications.

[00:17:07] Junyang Lin: So they want something smaller than 7 billion, but much better than 1.8. So we have 4 billion. Yeah. We have a wide range of sizes for you to choose from. And,

[00:17:19] Alex Volkov: Six, six models overall, Junyang?

[00:17:22] Junyang Lin: Yeah. Six

[00:17:23] Alex Volkov: Six sizes overall, but definitely more models than this, because you also released, I think for the first time, you released quantized versions as well, correct?

[00:17:32] Junyang Lin: No, but previously we released GPTQ,

[00:17:35] Alex Volkov: Oh yeah.

[00:17:35] Junyang Lin: as our convention, but this time I also have AWQ and also GGUF. Admittedly, GGUF is the new one; previously I didn't know much about AWQ and GGUF. This time I tried, and everything is okay, so I just released the AWQ and GGUF.

[00:17:52] Junyang Lin: GGUF is the new thing for me, but it is quite popular in the community, like LM Studio, which you introduced to me, and I found a lot of people using GGUF in Ollama. So I collaborated with Ollama, and now you can just run one line of code, like ollama run qwen, to use the Qwen models with Ollama, and you can also use them in LM Studio.
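For readers who would rather call the model from code than from the CLI, here is a minimal sketch using only the Python standard library against Ollama's local REST API. It assumes Ollama is running on its default address (`http://localhost:11434`), that a Qwen model has already been pulled under the `qwen` tag (for example via `ollama run qwen`), and it uses the non-streaming `/api/generate` endpoint. `build_generate_request` and `generate` are hypothetical helper names.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local address


def build_generate_request(prompt: str, model: str = "qwen") -> urllib.request.Request:
    """Build a non-streaming /api/generate request for a locally pulled model."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "qwen", timeout: float = 120.0) -> str:
    """Send the request and return the generated text from the JSON 'response' field."""
    req = build_generate_request(prompt, model)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

With the Ollama server running, `generate("Say hi in one sentence.")` should return the model's reply as a string; swapping the `model` argument (e.g. a specific size tag) is the only change needed to try another Qwen checkpoint.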

[00:18:15] Alex Volkov: I just wanna

[00:18:16] Junyang Lin: No

[00:18:16] Alex Volkov: Just a tiny pause here, because I think, first of all, this highlights the importance of this community. You guys are releasing a bunch of great models in open source, and it's a great testament to the community, because you're listening to what folks have been saying and how they're reacting to your models. And as part of ThursdAI, I was able to introduce you to LM Studio, and you guys worked together.

[00:18:37] Alex Volkov: And now, the second your model drops, you're not only providing us quantized versions, 4-bit and GGUF, it's also very easy to start using. Just a shout out to you guys for thinking about this, because a lot of models, when they're released, just come as a weights file, and then it's up to the community to figure out how to run them, when to run them, and what the problems are.

[00:18:57] Alex Volkov: And this was the issue with Qwen before: it was harder to use, maybe only in Hugging Face demos. And now you guys released it with support for the most popular open source runners out there. So Ollama, if folks haven't used Ollama by now, definitely try it: there's a CLI, you just install it.

[00:19:14] Alex Volkov: And LM Studio, which we've talked about a bunch, so shout out LM Studio, shout out JAGS. I was very happy to introduce both of you, so it's been great. And I've used the small model, the baby model, as well. How was the reception from the community? What have you seen people do? Have there been any fine-tunes already that you're excited about?

[00:19:33] Junyang Lin: Yeah, this is a very great comment for helping us improve. Previously, like us, a lot of people just dropped open source models and let the community use them. But this may not be right, because we can do more for the community; maybe we can do things more easily than the community users can.

[00:19:56] Junyang Lin: So this is why we are changing our style. We try to modify our code and adapt to the usages to make our models more popular. And recently I found people gradually fine-tuning our models. Previously the fine-tuning users were inside mainland China, because they have chances to talk to us, so they know more about our models and could fine-tune them.

[00:20:24] Junyang Lin: But with the support of LLaMA-Factory, and especially Axolotl, where Wing Lian helped me a lot. Teknium introduced Wing Lian to me, and I found some people are using Axolotl to do it. I don't know if Chen, I don't know if I pronounced his name right, he's one of the users of Qwen, and he previously got access to our models and then quickly fine-tuned a lot of models. Its name is Q U Y

[00:20:54] Alex Volkov: Oh, StableQuan. Yeah, I think I know who you're talking about. StableQuan, also from Nous Research.

[00:20:59] Junyang Lin: Yeah, StableQuan. I'm quite familiar with him, I've talked to him a lot, and he just directly used our models and very quickly fine-tuned a series of models, and I find the quality is quite good.

[00:21:12] Junyang Lin: So this is quite encouraging for me, because you can see people are interested in your models and can fine-tune them at very fast speed. And recently I found Smaug by Abacus AI, but I haven't had the chance to talk to them because I don't know who actually built the model, but I found Smaug 72 billion is built on Qwen 72 billion

[00:21:37] Alex Volkov: Oh, really?

[00:21:39] Junyang Lin: On the Open LLM Leaderboard.

[00:21:40] Alex Volkov: Smaug is the next thing we're going to talk about, so you're taking us exactly there. I think, Nisten, you have a question just before, and then we're going to move on to Smaug. Just on the community part, the names you mentioned: you mentioned StableQuan, definitely a friend of the pod.

[00:21:52] Alex Volkov: You mentioned Teknium introduced you to Wing Lian, the guy from Axolotl. All of this happens in the ThursdAI community, and I love it. I'll just say that I see Robert in the audience here. Smaug is from Abacus AI, and I think Robert has some connection to Bindu, so Robert, if you can introduce Junyang to Bindu, that would be great, and then we'll figure out how they used the 72B model.

[00:22:12] Alex Volkov: The 72B model that you guys released is one of the more performant ones. I think it's even outperforming Mistral Medium, is that correct?

[00:22:21] Junyang Lin: Yeah. For this version, Qwen 1.5 72B, the base model is actually quite similar; some users have found that, and I admit it. But for the chat models we have some improvements, because this time we not only SFT the model, we also use DPO.

[00:22:40] Junyang Lin: We have some progress in DPO. We reached 8.67 on MT-Bench, which is a relatively high score, and we just did simple DPO to improve the model. We also sent our model to Chatbot Arena at LMSYS, supported by Together AI, because we have some friends at Together AI. They built an API for us, and now we are in Chatbot Arena, so you can try it there to see how it really performs.

[00:23:18] Junyang Lin: Does it really perform just like the MT-Bench score suggests? I'm not quite sure, because I also depend on user feedback.

[00:23:27] Alex Volkov: It depends on human preference. So first of all, Justin, you're taking over my job now, because you're also reporting on the stuff I wanted to mention, but definitely a shout out for getting added to LMSYS. That's not super easy; not every model on the Hugging Face leaderboard gets added there.
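Junyang mentions using DPO (Direct Preference Optimization) on top of SFT. As a rough illustration of what DPO optimizes, and not Qwen's actual training code, here is the standard per-example DPO loss: given the log-probabilities that the policy and a frozen reference model assign to a preferred ("chosen") and a dispreferred ("rejected") response, it rewards the policy for widening the chosen-vs-rejected gap relative to the reference. `beta=0.1` is an illustrative value, not one the Qwen team reported, and the log-probabilities in the usage lines are made up.

```python
import math


def softplus(z: float) -> float:
    """Numerically stable log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Per-example DPO loss: -log(sigmoid(beta * (chosen log-ratio - rejected log-ratio)))."""
    chosen_log_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_log_ratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_log_ratio - rejected_log_ratio)
    # -log(sigmoid(m)) == softplus(-m), computed without overflow for large |m|
    return softplus(-margin)


print(round(dpo_loss(-12.0, -12.0, -12.0, -12.0), 4))  # policy identical to reference
print(round(dpo_loss(-8.0, -20.0, -12.0, -12.0), 4))   # policy favors the chosen answer more
```

When the policy and reference agree, the margin is zero and the loss is log 2 (about 0.693); it falls toward zero as the policy prefers the chosen response more strongly than the reference does, and rises above log 2 when it prefers the rejected one.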

[00:23:41] Alex Volkov: So definitely super cool. Yeah, please go ahead. If you have anything else to

[00:23:46] Junyang Lin: As for Mistral Medium, which you mentioned, I'm not sure which one is better, Mistral Medium or Qwen 72 billion. From some reviews they might be similar, and Qwen 1.5 72 billion is similar to Miqu: some of my friends, like Blade, just tested it on EQ-Bench, and the scores are very similar. But I need more reviews to really know how the 72 billion model performs, whether it is better or worse than Miqu.

[00:24:20] Junyang Lin: Either outcome is okay for me. I just want real reviews. Yeah,

[00:24:23] Alex Volkov: Yeah,

[00:24:24] Junyang Lin: it.

[00:24:25] Discussion about Qwen VL with Nisten and Piotr

[00:24:25] Alex Volkov: Awesome. Junyang, thank you for joining us. And Nisten, go ahead. You have a few questions, I think, about the interesting things in VL.

[00:24:34] Nisten Tahiraj: Yeah, so one thing is the 0.5B and the other small models. I know Xenova in the audience was specifically looking for one around that size, or like a 0.3B, to run on WebGPU, because even at 32-bit, which older browsers still support, it will only take two gigs. So that would run anywhere.
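Nisten's "two gigs" figure is simple arithmetic: at 32-bit precision each parameter takes 4 bytes, so 0.5 billion parameters x 4 bytes = 2 GB. The sketch below uses a hypothetical helper that counts weights only and treats the model as exactly 0.5B parameters; activations, the KV cache and browser runtime overhead are ignored, so real memory use will be higher.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Ignores activations, KV cache and runtime overhead, so real usage is higher.
    """
    return num_params * bytes_per_param / 1e9

# Bytes per parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

for precision, nbytes in BYTES_PER_PARAM.items():
    print(f"0.5B params @ {precision}: {weight_memory_gb(0.5e9, nbytes):.1f} GB")
# 0.5B params @ fp32: 2.0 GB
# 0.5B params @ fp16: 1.0 GB
# 0.5B params @ int8: 0.5 GB
```

Halving the bytes per parameter halves the footprint, which is why quantized small models are attractive for in-browser inference.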

[00:24:58] Nisten Tahiraj: But my question... So shout-out to Xenova for all that. I know he's going to do something with it. But my question for you was more about Max and the larger Qwen-VL chat models: are those also based on the 72B, and did you find more improvements going with a larger LLM? I also wanted to know your opinion on LLaVA.

[00:25:27] Nisten Tahiraj: The LLaVA 1.6 method, where they mosaic together four CLIP encodings to get a larger image, even though it slows down inference because now it outputs something like 2,000 embeddings. So yeah, what do you think of LLaVA, and is there more to share about Qwen-VL Max?

[00:25:47] Junyang Lin: Yeah, for Plus and Max, sorry, we're not ready to open source them.

[00:25:57] Junyang Lin: I cannot decide these things. Yeah, actually, Max is built on a much larger language model than Plus, and you can guess whether it is 72 billion. That is not so important. What we have found is that scaling the language model is really important for the understanding capabilities of VL models.

[00:26:18] Junyang Lin: We have tested it on the MMMU benchmark and found that the Max model is much more competitive and performs much better than Qwen-VL Plus. Previously many people thought Qwen-VL Plus was strong enough, but we found that Max has much better reasoning capabilities. It can understand reasoning games like poker, and other complex things that people understand through visual information; it can somehow understand those too.

[00:26:52] Junyang Lin: I think Qwen-VL Max's performance might be a bit lower than, but approaching, Gemini Ultra or GPT-4V. We are just gathering some reviews. I'm not quite sure, but

[00:27:05] Alex Volkov: From the review perspective, I want to say hi to Piotr, our friend here on stage, from Roboflow. Piotr is one of the vision experts here on stage. Piotr, welcome. Feel free to introduce yourself briefly; I definitely know you got excited about some of the Qwen-VL Plus stuff, so feel free to share some of your insights here.

[00:27:30] Piotr Skalski: Okay. Yeah. And first of all, awesome to meet somebody from Qwen. Yeah.

[00:27:36] Piotr Skalski: So yeah, I'm from Roboflow, like you said, and I'm responsible there for computer vision and growth. So it's somewhere in between being an ML engineer and marketing, something like that.

[00:27:49] Piotr Skalski: And yeah, I was experimenting with Qwen-VL Plus and Max last week. Super impressed. I know that you tried to be humble, maybe, but in my opinion, at least on the things that I tested, it performs the best compared to other models.

[00:28:09] Junyang Lin: Thank you very much. Thanks for the appreciation.

[00:28:14] Piotr Skalski: Yeah. And the biggest game changer for me, and I know there were models capable of this before, is that you can ground those predictions; for example, the model can point to a specific element in the image. So it's not only that you can ask questions, get answers, and do OCR; you can straight up do zero-shot detection if you'd like.
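Piotr's point about grounding is that the model returns box coordinates alongside its text, which is what makes zero-shot detection possible. Below is a minimal sketch that assumes the output format described for the open-source Qwen-VL: a `<ref>label</ref>` followed by `<box>(x1,y1),(x2,y2)</box>`, with coordinates normalized to the 0-1000 range. The Plus and Max APIs may format responses differently, and `parse_grounding` is a hypothetical helper, not part of any Qwen SDK.

```python
import re

# <ref>label</ref><box>(x1,y1),(x2,y2)</box>, with optional whitespace around numbers.
BOX_PATTERN = re.compile(
    r"<ref>(.*?)</ref>\s*<box>\(\s*(\d+)\s*,\s*(\d+)\s*\)\s*,"
    r"\s*\(\s*(\d+)\s*,\s*(\d+)\s*\)</box>"
)

def parse_grounding(text: str, img_w: int, img_h: int):
    """Turn normalized (0-1000) grounding boxes into pixel boxes for a given image size."""
    results = []
    for label, x1, y1, x2, y2 in BOX_PATTERN.findall(text):
        results.append((
            label,
            round(int(x1) * img_w / 1000),  # left
            round(int(y1) * img_h / 1000),  # top
            round(int(x2) * img_w / 1000),  # right
            round(int(y2) * img_h / 1000),  # bottom
        ))
    return results

sample = "<ref>the dog</ref><box>(100,200),(500,900)</box>"
print(parse_grounding(sample, 640, 480))  # [('the dog', 64, 96, 320, 432)]
```

Normalizing to a fixed 0-1000 grid keeps coordinates independent of input resolution, so the same model output can be mapped onto the original image afterwards.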

[00:28:40] Piotr Skalski: Yeah. Which is awesome. And that's something none of the other popular models can do to that extent, at least on the things that I tested. My question is,

[00:28:55] Piotr Skalski: do you plan to open source it? It's awesome that you can try it out through the API, and I really appreciate that you created the HF Space where people can go and try it.

[00:29:07] Piotr Skalski: But is there a chance that you will open source it, even with a restrictive license, or not necessarily?

[00:29:16] Junyang Lin: Yeah, personally, I would like to open source some of it, but I cannot decide these things. I think there's a chance; I'm still promoting this inside the company, but I cannot say too much about it. We will try, because we have found that we ourselves can also build very good LMMs.

[00:29:37] Junyang Lin: I think the gap between us and the big corps in LMMs is very small. And we have found that our techniques and our training are quite effective. So maybe one day we'll share them with the community, but for now it is still APIs and demos, and I will keep thinking about these things.

[00:29:59] Junyang Lin: And about the comparison between us and LLaVA: I have tried LLaVA 1.6, though not very often. I think it's a very good model, and it has very good benchmark results, but I think the limitation of these other open-source models may be that they still lack sufficient pre-training. As Skalski just said, Qwen can do OCR, and you can see that Qwen's reasoning capability is quite strong, because we have done a lot of pre-training work on it.

[00:30:39] Junyang Lin: We have done a lot of data engineering for pre-training. Because we can handle different resolutions and aspect ratios, we can take curated OCR data and put it into pre-training. And when the vision-language model can understand a lot of the textual, linguistic information inside images, it can do things like the reasoning we mentioned, and you will find that really powerful and very impressive.

[00:31:13] Junyang Lin: Yeah, I think the gap between other models and us, or between us and Gemini Ultra and GPT-4V, may still be the lack of large-scale training data. Yeah, this is my opinion.

[00:31:27] Alex Volkov: We're waiting for more data, but we're also waiting for you guys. I just want to thank you for being the champion of open source within your organization, and I really appreciate all your releases. I think Piotr and Nisten, like everybody here on stage, definitely feel that. Thank you for coming and talking about this.

[00:31:45] Alex Volkov: Justin, feel free to stick around, because the next thing we're going to talk about, you already mentioned: Smaug 72B, which is the top of the leaderboard. I just read through the thread from Bindu Reddy from Abacus AI, and it looks like they didn't even use 1.5. I think they used the previous Qwen 72B.

[00:32:02] Junyang Lin: Yeah, they used the previous Qwen 72B. If they really built on the base language model, there might not be much difference, because the 1.5 72B base model is only slightly better than the original 72B base model. Yeah.

[00:32:22] Alex Volkov: for the base ones. And very interesting what they

[00:32:24] Junyang Lin: the base one.

[00:32:25] Alex Volkov: So they don't share any techniques yet, but they promised to open source them. They're saying their next goal is to publish these techniques as a research paper and apply them to some of the best Mistral models, including Miqu.

[00:32:37] Alex Volkov: So I got confused. I thought they had already fine-tuned Miqu, but no, they fine-tuned on top of Qwen. And now the top model on the Hugging Face leaderboard is a fine-tune of Qwen, which is also super cool.

[00:32:50] Junyang Lin: Yeah, I'm very proud of it.

[00:32:52] Alex Volkov: Yeah, congrats.

[00:32:53] Junyang Lin: They are using our model to get to the top. I'm also really looking forward to their technical report to see how they reached the top of the benchmark. But I think it is not that difficult, because there are a lot of ways to improve benchmark performance, so we'll still have to see how it performs in real scenarios, especially their chat models, yeah.

[00:33:18] Alex Volkov: Yeah, that's true, that's often the case. But I just want to point out that the world is changing super fast. We're definitely watching and monitoring the Hugging Face leaderboard, and performing better than Mistral Medium is impressive. On MMLU this one scores 77, and I think they said it's the first model to break an average score of 80 on the Hugging Face open-source leaderboard, which is super cool, and it's based on Qwen as well.

[00:33:46] Alex Volkov: I'm going to add this link to the show notes, and hopefully we'll find a way to connect you guys with Bindu's team at Abacus to see how else this can be improved, and whether these techniques can be applied to smaller models as well. I think in open source, the last thing...

[00:34:00] Junyang Lin: Yeah, I'm really looking forward to chatting with them. Yeah, continue.

[00:34:05] Alex Volkov: So I'm definitely hoping some of our friends can connect these awesome teams so they can learn from each other, which I think is the benefit of speaking in public and putting things in open source. Now, moving on, the last thing, which you already mentioned, is the update from LMSYS: quite a few friends of the pod are now also part of the Chatbot Arena.

[00:34:24] Alex Volkov: They announced this yesterday. They've added three of your versions, right? They added Qwen 1.5 72B, 1.5 7B, and 1.5 4B, and they also added OpenChat. So shout-out to the folks from OpenChat and the Alignment Lab, and some other friends of ours who put out OpenChat's latest release. They also added the Nous Hermes fine-tune.

[00:34:47] Alex Volkov: If you guys remember, we've talked about Nous fine-tuning Mixtral, which improved a little on Mistral's mixture-of-experts model using DPO datasets. That's now also in the LMSYS arena, and the arena is now powered by Together Compute, which I have no affiliation with besides the fact that they're awesome.

[00:35:04] Alex Volkov: They're sponsoring a bunch of stuff, and we did a hackathon together. Together is great: you can easily fine-tune stuff on their platform, and now they're also sponsoring the arena, at least to some extent, which is great because we get more models and the arena keeps going. And if you guys remember, or you probably use it, the LMSYS arena is another great way for us to get a feel for human preference between models.

[00:35:27] Alex Volkov: And for many of these models, that matters more than performance on evaluations, leaderboards, et cetera. So definitely a great update from LMSYS as well. I'm going to ask my folks here on stage, Nisten, Far El, if there's anything else in open source that's super interesting this week. I think that's mostly it.

[00:35:44] Alex Volkov: We can talk about Gemini.

[00:35:48] Nisten Tahiraj: There was a dataset released, which I think is pretty huge, of HackerNoon articles. And oh, there was one more thing: Hugging Face made a GPT store.

[00:35:58] Alex Volkov: Oh,

[00:35:59] Nisten Tahiraj: They made their own GPT store. Yes. I think that's a big...

[00:36:03] Alex Volkov: I want to hear about this, for sure. I haven't used it yet, so I invite the Hugging Face folks who are listening to come and tell us about it, because I don't actually have many opinions. But yeah, they released their own open-source GPT store, which is super cool, and we'll add it to the show notes, but I don't have a lot to say about it.

[00:36:24] Alex Volkov: And I think, in the spirit of Yeah, go ahead.

[00:36:27] Nisten Tahiraj: Oh, sorry. I'll quickly say that HackerNoon has had a lot of guest developers over the years, and I remember they had the best ones. That dataset of tech articles is extremely good for any kind of coding or website work, or whatever you're doing.

[00:36:50] Nisten Tahiraj: That's because it's step-by-step instructions on how to build something, with all the code for it. It's pretty awesome, and it's at the very top of the Jumbotron if you guys see it, from Daniel van Strien. And it's MIT licensed, it's 6.9 million articles, and you can do whatever you want with it.

[00:37:07] Nisten Tahiraj: So shout-out to them.

[00:37:09] Alex Volkov: We'll add this to the show notes too. And since you mentioned articles and code, I remembered another thing that's definitely worth mentioning: Jina Embeddings. If you guys remember, we had a deep dive into embeddings with Bo Wang from Jina a while ago, and Jina has released an embeddings fine-tune for code.

[00:37:25] Alex Volkov: So just a quick shout-out: embedding models can be fine-tuned for specific purposes, including code. Those of you who follow from week to week know we talk about embeddings a lot. Last week we talked about Nomic Embed, which is fully open source, including the training datasets.

[00:37:42] Alex Volkov: We've talked about OpenAI changing embeddings and giving us new, cheaper ones. And with Jina we had a deep dive, and I definitely invite you to check out that special episode with Bo Wang. They trained their own BERT model as the backbone that produces the embeddings, and they just released an update to their embeddings fine-tuned specifically for code retrieval.

[00:38:03] Alex Volkov: And I think many folks building RAG systems should be aware that embedding models can also be fine-tuned for specific purposes, like Q&A, and obviously code as well. So if you haven't tried that yet and you're doing a bunch of work on top of code, for example with some of the datasets Nisten just mentioned, which probably contain code, definitely check this out.
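In a RAG pipeline, the retrieval step itself stays the same when you swap in a code-tuned embedding model; only the vectors change. A minimal sketch of cosine-similarity retrieval over pre-computed embeddings, where the snippet names and three-dimensional vectors are made up for illustration (real embedding models produce hundreds of dimensions, and this is not the Jina API):

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query_vec, corpus, k=1):
    """Return the names of the k corpus entries most similar to the query."""
    ranked = sorted(corpus.items(), key=lambda kv: cosine(query_vec, kv[1]), reverse=True)
    return [name for name, _ in ranked[:k]]

# Hypothetical embeddings; in practice these come from the embedding model.
CORPUS = {
    "parse_json": [0.9, 0.1, 0.0],
    "readme_install_steps": [0.1, 0.9, 0.1],
    "http_client": [0.7, 0.2, 0.3],
}
QUERY = [0.85, 0.15, 0.05]  # e.g. the embedding of "how do I parse JSON?"

print(top_k(QUERY, CORPUS, k=2))  # ['parse_json', 'http_client']
```

A model fine-tuned for code aims to place code snippets and the natural-language questions about them closer together in this vector space, so the same ranking code returns better matches.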

[00:38:25] Alex Volkov: I think we're moving on to the big company things, and I don't have a big company transition, but I do have this one.

[00:38:43] Google finally launches Gemini Ultra

[00:38:43] Alex Volkov: Just in, maybe an hour before we started the space, our friends from the big G, Google, finally answered the question we've been asking for 10 months and three weeks: where is Google? GPT-4 was released to us after ChatGPT came out at the end of November 2022.

[00:39:06] Alex Volkov: Then GPT-4 was released in March of 2023. And throughout this time, there was that famous video of Satya Nadella asking where Google is, calling it the 800-pound gorilla of search, and saying "we're going to make them dance." And they definitely made them dance. And we've been waiting.

[00:39:25] Alex Volkov: Where's Google? Where's Google? And Google has released quite a lot for us since then. Just for context, and I think everybody knows this already, Google is the birthplace of the Transformer paper. So most of the recent gen AI explosion can be attributed to the Transformer architecture that came out of Google.

[00:39:43] Alex Volkov: Google has trained multiple models, including PaLM; we've talked about PaLM and PaLM 2, and I don't even remember the names of all the models they've released over the years. At some point Google also gave us Bard, the chat interface people used to play with their models, and I think some of it was powered by

[00:40:04] Alex Volkov: PaLM and something else as well. And recently, around December, they said, "Hey, you know what? We're here, and we have this thing called Gemini," after the unification of Google Brain and DeepMind under one org. "We're going to give you Gemini Pro right now, and we'll tell you about Gemini Ultra." That was back in December.

[00:40:23] Alex Volkov: In December they told us Gemini Ultra was coming, that it would be better than GPT-4, and that we'd get it soon. And we've been asking, when? Today is the answer to that question. So today we're celebrating: congrats to the folks at Google, who finally released an upgrade to their LLM capabilities.

[00:40:41] Alex Volkov: Not just an upgrade: so much of an upgrade that they've killed the Bard brand completely. No more Bard. That's what I'm understanding. No more Bard, even though that's very confusing. If you guys remember, a few weeks ago we talked about how, on LMSYS, Bard with Gemini, or something confusing like that, shot up to the top of the charts and was just trailing GPT-4.

[00:41:05] Alex Volkov: So the second-best model in the LMSYS arena was Bard with GPT-4, or sorry, Bard with Gemini. See how confusing this is? And now there's no more Bard, but it's still there on LMSYS. Anyway, the whole naming thing is confusing, but Google, including with a blog post from Sundar and everything, comes out with an update and says, "Hey, Bard is no more."

[00:41:25] Alex Volkov: It's now Gemini, and the models are also Gemini. So that's confusing. And the top model is Gemini Ultra. We finally get access to Google's answer to GPT-4 today, which is incredible. That answer is Ultra 1.0, and we can get it as part of a paid premium tier called Gemini Advanced.

[00:41:46] Alex Volkov: So you can go right now and sign up. It's 20 bucks a month, or is it 30? I think it's 20.

[00:41:52] Nisten Tahiraj: It's two months free.

[00:41:54] Alex Volkov: Yeah, you get a two-month trial, because they have to prove themselves to you; many people will be deciding whether to go with Google or with ChatGPT.

[00:42:03] Alex Volkov: And we're going to talk about which one folks will prefer. I haven't tried it yet; literally as I woke up, I had to prepare my notes for the space. I just want to say: Google, welcome to the party, we've been waiting for you. I counted, and it's been exactly 10 months, 3 weeks, and 4 days since GPT-4 was released for you to come out with the same level, at least based on benchmarks.

[00:42:24] Alex Volkov: And now we're going to talk with some folks who actually tried it. Nisten, you tried it, and I think Ray, you did too. Let's talk about your first impressions of Bard, oh, sorry, Gemini.

[00:42:35] Nisten Tahiraj: One, it's heavily moderated. No one's surprised by that. It does answer and reason nicely, or at least it communicates a lot more eloquently, I would say. It feels nicer in the way it reasons things out. However, compared to Mistral Medium or Mixtral, it doesn't quite obey you. I tried my standard question, which is just "Plan out a schedule for building a city on Mars and write the code in C and JavaScript."

[00:43:10] Nisten Tahiraj: That's a pretty complex question that only the best models get. I needed to re-prompt it to get an answer, and even then it only wrote some JavaScript. But it was really good JavaScript. However, it didn't do the rest of the task. Okay, it's not bad. It is worth using. Again, very heavily moderated.

[00:43:33] Nisten Tahiraj: As for the vision side, it's extremely heavily moderated. I had an old gaming PC on the floor with two GPUs beside it, and I told it to make me a JSON of all the parts it sees in the picture. It won't answer questions about images that have humans in them, even if they're Star Wars characters or whatever.

[00:43:58] Nisten Tahiraj: But this, I thought, would be something pretty simple, and it refused to answer even this one. It's good, I think, but as far as the vision side goes, the open-source models might already have it beat, or will soon.

[00:44:19] Ray Fernando: Yeah, I wanted to add that Ankesh from Google DeepMind actually wrote, because I've been posting some of this stuff: "To preempt any confusion, multimodal queries don't go through Pro/Ultra yet, but that is coming soon too."

[00:44:33] Ray Fernando: That partly explains why you're seeing some of that. I've seen similar things when doing image analysis, or even when trying to generate images that have people in them. One of the examples I've been posting on my Twitter feed is having it analyze a meme.

[00:44:48] Ray Fernando: It's the Hot Ones meme, and I asked, "Hey, this is very popular. Can you tell me what this meme is?" And it said, "I'm sorry, I can't, because there are images of people." Then I tried some other meme analysis with Elon Musk, and got the same kind of response. But to add to what Nisten was saying, I've been doing a lot of creative writing tasks, and the writing output has actually been really nice.

[00:45:10] Ray Fernando: And it doesn't have all the extra fluff you normally get from ChatGPT-4. With OpenAI's ChatGPT-4, I frequently have to say, "Hey, don't use purple prose," which is all that extra fluffy stuff that's meant to make people sound smart. I just want a regular-sounding piece.

[00:45:27] Ray Fernando: Usually ChatGPT would do that and then revert to its normal state, but I find that Gemini Advanced just keeps going and sticks with it through the writing. And for coding stuff, it's really strange: you actually cannot upload any CSV or text files.

[00:45:43] Ray Fernando: They only let you upload images right now. So you only get a microphone icon and a little icon to upload an image. I wanted to do a simple analysis of my tweets with a CSV file, and there's no place I can see to actually upload it. I could probably paste in some lines, but there's also a character cutoff that doesn't let me paste a lot of code for a code base.

[00:46:04] Alex Volkov: I was about to ask that next. Do we know the context length? Does anybody have an idea where Gemini Ultra is at? Because we know GPT-4 is 128K, and I think they recently opened this up in the UI as well. I've been noticing fewer restrictions; I've been able to paste a lot more code.

[00:46:21] Alex Volkov: My test, as you guys know, is pasting in the transcript of the ThursdAI conversation, and Claude, with its 100K context, definitely takes all of it. GPT-4 in ChatGPT used to refuse, and recently it's okay: "Yeah, let me summarize this for you." Have you guys been able to gauge the context length of Gemini Ultra?

[00:46:41] Alex Volkov: Is it anywhere close? Actually, go ahead. Welcome to the stage, buddy.

[00:46:46] Akshay Gautam: Hello, I just wanted to bring up that their official documentation mentions a 32K context length.

[00:46:53] Alex Volkov: Actually, we don't get greetings of the day

[00:46:57] Akshay Gautam: I see. Yeah. Greetings of the day, everybody. My name is Akshay Kumar Gautam, and I'm an applied AI engineer. I was a data scientist before, but now I work on modeling and stuff. I was literally waiting for it; when it came out, I paid for it, because why not? And I tried a lot of stuff.

[00:47:14] Akshay Gautam: First of all, it's really good at coding. By the way, the context length is 32K, at least that's what they say. Yeah, 32K. And the model is not good at keeping context; that's what I came up here to talk about. It loses track: for example, if you ask it to do multiple things in a single prompt, it will not do them all,

[00:47:33] Akshay Gautam: unlike ChatGPT. But for coding, it's better than ChatGPT, in my humble opinion.

[00:47:41] Alex Volkov: So I want to talk about some advantages that Google, the big dog, definitely has. ChatGPT also has iOS and Android apps, but Google released apps too, and on Android Gemini also integrates with Google Assistant, which ChatGPT doesn't have, right?

[00:47:56] Alex Volkov: So you can now join this Advanced, or Ultra, tier and use it from your Android device. Now, I'm not an Android user, but I definitely understand that the ecosystem is vast and many people just use the assistant, and we're waiting for Apple. We don't have anything to say about Apple specifically today, besides the fact that they released maybe the next era of computing.

[00:48:16] Alex Volkov: But there's nothing AI in Siri; it's still the same Siri from like 2019. Google, on the other hand, has now moved everybody who pays the 20 bucks a month and has an Android device to this level of intelligence, basically GPT-4-level intelligence. And I saw that Marques Brownlee, MKBHD on YouTube, one of the best tech reviewers out there,

[00:48:38] Alex Volkov: has been playing with the Android version, and he said the Google Assistant integration even works with your smart home stuff. So you can ask this level of intelligence to turn on some lights or whatever, probably with better context. Akshay, do you have any comments on this? Have you played with the Assistant version?

[00:48:54] Akshay Gautam: Two things. First of all, Bing Chat, now called Copilot, was already available on Android devices, right? Copilot uses GPT-4, so it's already really good, and you can use a lot of voice features with Copilot as well, which was surprising. As for Google Assistant, to be honest, among Siri and Bixby (I have a Samsung device, so it has Bixby), among all the AI assistants, Google Assistant was by far the best in terms of how much you can use it. I'm hoping to get access, because I have paid for Ultra, but I still don't have access to everything.

[00:49:29] Akshay Gautam: Also, there's no API for Ultra, so you can't actually test anything programmatically either.

[00:49:34] Alex Volkov: We haven't gotten an API. For developers, Sundar Pichai said the developer announcements are coming next week. iOS hasn't updated yet. Yeah, go ahead, Nisten.

[00:49:44] Nisten Tahiraj: I just very quickly tested it with the entire llama.cpp file. I cut it down to 15,000 tokens and it's still too long, so we know you can paste in fewer than 16,000 tokens. I'll know exactly in a few minutes.

[00:50:03] Alex Volkov: So not super impressive in terms of long context. I will also...

[00:50:06] Nisten Tahiraj: At least not in the UI.

[00:50:08] Alex Volkov: In the UI. Usually, for some reason, they restrict the UI or forget to update it, and the model itself supports a much longer context, but for now it's not very impressive by comparison.

[00:50:18] Alex Volkov: And again, we're comparing the two main flagship models, OpenAI's GPT-4 and now Google's Gemini Ultra. I also want to say that Gemini seems to be optimized mainly for English: it will answer most questions in other languages, but it looks like the optimization focused on English,

[00:50:36] Alex Volkov: including in some of the apps, which is understandable, but if we're comparing apples to apples, GPT-4 is incredibly versatile in multilingual use. LDJ, you have some comments? Welcome to the stage, buddy. Have you played with Ultra so far?

[00:50:55] LDJ: Yes. I was actually wondering, does anybody know of plans to integrate this with Google Home? Because I just asked my Google Home, "Are you Gemini?" and it said, "I'm a Virgo." Then I asked it, "What AI model are you running right now?" and it said, "Sorry, I don't understand." So I don't think mine, at least, is running Gemini right now.

[00:51:16] LDJ: But

[00:51:17] Alex Volkov: No, so I think the announcement was

[00:51:18] Junyang Lin: to put it.

[00:51:19] Alex Volkov: The integration into Google Home will come through Google Assistant. So if you have an Android device, you'll have Google Assistant there, you can switch it to the smarter brain, and it can integrate with your home through the device. So you can ask it to do stuff in your home.

[00:51:34] Alex Volkov: As for the Google Home devices themselves, they haven't talked about upgrading them, but maybe at some point, because why not? I haven't seen anything on this yet. Anything else here?

[00:51:46] Junyang Lin: I think that'd be perfect. Sorry. Yeah, go on.

[00:51:48] Alex Volkov: Yeah, no, that would be great. I agree with you. Being able to walk around your house and just talk to GPT-4-level intelligence to get things done, I definitely agree.

[00:51:55] Alex Volkov: That would be great. Anything else on Ultra? We've talked about its code performance. We've talked about its refusal to talk about people. Anything else interesting we want to cover so far? And again, folks, it's been out for two hours and we're already giving you a bunch of info, but we'll keep playing with it going forward.

[00:52:12] Nisten Tahiraj: The context length you can paste is about 8,000.

[00:52:14] Alex Volkov: Are you serious? Wow, that's not a lot at all.

[00:52:17] Nisten Tahiraj: That's as much as I was able to paste, about 7,500.
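Nisten's approach of trimming the llama.cpp file until the UI accepts it is a search for the largest accepted input, and bisection finds it in a handful of tries. A minimal sketch, assuming a hypothetical `accepts(n)` check that returns True for every length up to some cutoff and False beyond it; here the UI is simulated with a 7,500-token cutoff, the figure Nisten reported, rather than calling any real Gemini interface.

```python
from typing import Callable

def max_accepted_length(accepts: Callable[[int], bool], lo: int, hi: int) -> int:
    """Largest n in [lo, hi] with accepts(n) True.

    Assumes accepts is monotone (True up to a cutoff, False after) and accepts(lo) is True.
    """
    while lo < hi:
        mid = (lo + hi + 1) // 2  # round up so the loop always makes progress
        if accepts(mid):
            lo = mid              # mid fits, so the answer is mid or larger
        else:
            hi = mid - 1          # mid is too long, so the answer is below mid
    return lo

# Simulated paste box with a hypothetical 7,500-token limit.
print(max_accepted_length(lambda n: n <= 7_500, 0, 16_000))  # 7500
```

Searching the range 0 to 16,000 this way takes about 14 checks, versus repeatedly shaving the input down by hand.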

[00:52:20] Alex Volkov: So yeah, folks, you heard it here first. You'll probably get more context than you used to, but it's not a lot comparatively. It's probably a compute consideration for Google, right? How much context to give you; the model itself probably supports more. And it's also a vision-enabled model.

[00:52:36] Alex Volkov: But I think we've covered this enough. Gemini Ultra is here, and it's very impressive from Google, and yet, personally, maybe a little bit underwhelming, because they need to convince us to switch, and it's the same price. I don't know. Let me just ask this before we move on.

[00:52:55] Alex Volkov: Does anybody here on stage who has access to both plan to pay for this instead of GPT-4?

[00:53:03] Nisten Tahiraj: I haven't paid for anything since September, but I'm

[00:53:08] Junyang Lin: not the right person for this question. My company pays for my subscription, so I might keep both.

[00:53:15] Alex Volkov: Interesting.

[00:53:16] Junyang Lin: I'm paying for mine out of pocket. I'm just going to keep both. I like the OpenAI app because of the multimodal picture feature on my phone

[00:53:23] Junyang Lin: when I'm on the go. For Google, I'm just curious, because it's two months free. That just means they have me hooked. We'll see.

[00:53:30] Alex Volkov: Yeah, it's two months free. Let's check back in two months and see how many of us kept paying. All right. So Google also released a llama.cpp wrapper called Local LLM. I don't know if you guys saw this. It's pretty cool: it's an open-source tool from Google that helps you run LLMs locally on CPUs, and also on Google Cloud with a super easy integration.

[00:53:51] Alex Volkov: Very interesting choice. They also call out TheBloke: you can download models from TheBloke with their tool. And I think it's very funny that if you go to the blog post describing Local LLM, in the code snippets they tell you: hey, install OpenAI.

[00:54:10] Alex Volkov: So I found it really funny. But yeah, they have a wrapper there that integrates with Google Cloud as well.

[00:54:15] OpenAI adds DALL-E watermarking and per API key restrictions

[00:54:15] Alex Volkov: Running through the big company updates super quick. OpenAI added watermarks to DALL-E images. They use this new metadata standard called C2PA, and it's embedded in the image metadata.

[00:54:27] Alex Volkov: So basically, what this means for us is not that much. But when you download DALL-E-generated images (and I assume the same will come to Microsoft Copilot), the metadata, where things like the location already live, will now also record that they have been generated with DALL-E.

[00:54:43] Alex Volkov: This information will sit in the metadata. For now it's only images, not text or voice or anything else from OpenAI. This happens over the API and in the ChatGPT interface as well. It increases the file size a little bit, but it's not super interesting.

[00:55:00] Alex Volkov: This can be stripped. So the absence of this metadata does not mean an image was not generated with DALL-E; it just means that if it is present, the image was definitely generated with DALL-E. And so this is an interesting attempt from OpenAI to say, hey, we're doing as much as we can.

[00:55:15] Alex Volkov: It's not foolproof, but it's an interesting attempt. I also want to mention, for those of us who develop with OpenAI, that they keep upgrading the developer experience around API keys. Now you can restrict usage per API key, which many people have been waiting for for a long time.

[00:55:33] Alex Volkov: Many people have really been wanting this. You can create an OpenAI API key for a specific purpose and restrict it to only DALL-E, for example. I don't know if you can restrict based on credits, I don't think so, but you can definitely restrict the usage-related stuff.

[00:55:49] Alex Volkov: That's, I think, all the updates from the big companies and the LLMs and APIs.

[00:55:53] Alex Volkov: This week's buzz is the corner... and I stopped the music prematurely. This week's buzz is the corner where I talk about the stuff that I learned at Weights & Biases this week. I don't know how many of you had a chance to join our live segments, but we had a build week. I think I mentioned this before, but we had a live show on Monday.

[00:56:19] Alex Volkov: We're going to have another one tomorrow, I think at noon Pacific, where I interview my team, the GrowthML team at Weights & Biases, about the build week projects we built last December to try and see what's the latest and greatest in this world. As we build tools for you in this world, we also want to build internal tools to try out the latest techniques, like the stuff we just talked about.

[00:56:46] Alex Volkov: It gives us a chance to play around with them. It's like an internal hackathon. What happened was, we built those tools, we presented them to the company, and that was basically it. And I said, hey, hold on a second. I learn best publicly, the way I just learned from Connor and Benjamin.

[00:57:02] Alex Volkov: I learn from Nisten and Far El and all the folks in the audience. Luigi and I had a whole segment where he taught me Weights & Biases. I learn best by being public and talking about what I'm learning as I'm learning it. And so I did the same thing with our folks from the GrowthML team.

[00:57:15] Alex Volkov: Folks literally came up on stage and I asked them what they built and what they learned, and we're going to summarize those learnings in the live show. That live show, if you're interested, is all over our socials: the Weights & Biases YouTube and LinkedIn. Yes, LinkedIn, I now need to participate in that whole thing too.

[00:57:33] Alex Volkov: So if you have tips about LinkedIn, let me know. But it's live on LinkedIn and live on YouTube. I think we did X as well and nobody came, so we'll probably try to send you to the YouTube live stream. Basically, the second part of this is coming up tomorrow. We're interviewing three more folks, and you get to meet the incredible team that I'm part of.

[00:57:53] Alex Volkov: Very smart folks, like Kaggle Masters, and some of them have been on Connor's show as well, which is super cool. I found the first conversation super interesting and insightful. Definitely recommend it if you're into understanding how to build projects that actually work within companies, and what the process is.

[00:58:11] Alex Volkov: We have folks who built something from scratch, and we have somebody who runs an actual bot with retrieval, re-ranking, evaluations and all these things, and has been running it in production for basically a year. You can actually try our bot in Discord right now, and in Slack, and on GPTs.

[00:58:28] Alex Volkov: If you want to hear about the difference between a mature, RAG-based bot that's in production at a professional AI company and something that somebody can quickly build in a week, we talked about those differences as well. So that live show is definitely worth checking out.

[00:58:46] Alex Volkov: Moving on from this week's buzz, where I learned a lot. Okay, we're moving into vision.


[00:58:57] Alex Volkov: Super quick: Bria AI released a new background segmentation model, or background removal model, that's live on Hugging Face, called RMBG v1.4. I think the cool thing about this is that it now runs completely in the browser, thanks to the efforts of our friend Xenova from Hugging Face and Transformers.js, who I think is no longer in the audience. It's super cool.

[00:59:19] Alex Volkov: You can remove backgrounds without sending images anywhere, straight from your browser. That model is called, again, RMBG, and it's not licensed for commercial use, so you cannot use it for professional stuff, but it's open for you to try and play with. Moving on to the voice and audio category.

[00:59:39] Alex Volkov: We haven't had a lot of audio news lately. I want to say the main audio thing we've talked about was Suno, the latest and greatest, but we're still waiting for some cool music creation stuff from different labs, and I know some of it is coming. But in the voice category, you know what my position is on this, and Nisten and I share this position.

[01:00:01] Alex Volkov: Personally, I think the faster models that can clone your voice come out, and the faster they come out in open source, the better it is for society generally. I know it's a hot take, but I also know, and I cannot reveal the source, that voice cloning tech is going to be in open source super, super quick.

[01:00:21] Alex Volkov: I think it's one of those break-the-dam type things: the first major lab will release voice cloning, everybody will see that nothing happened to the world, everybody else will release theirs, and we know everybody has one. We've known for a long time that Microsoft has, I want to say VALL-E, was it VALL-E?

[01:00:38] Alex Volkov: That clones your voice from about three seconds of audio. There are papers on this from every company in the world. We know that OpenAI has one. They collaborated with Spotify and cloned Lex Fridman's voice, and it sounds exactly like Lex Fridman. We know that companies like HeyGen have it too; I think they use ElevenLabs.

[01:00:54] Alex Volkov: ElevenLabs has voice cloning as well. None of this is open source; everything is proprietary. So we're still waiting for open-source voice cloning from a big company. But for now, we got something called MetaVoice from a smaller company. It's not from Meta, it's just called MetaVoice, which is confusing.

[01:01:08] Alex Volkov: It's a tiny model, 1.2 billion parameters, trained on 100k hours of data, which is quite significant, but not millions of hours. And it supports zero-shot voice cloning: basically from a short sample of your voice, you get a clone of your voice, or somebody else's, which is what scares many people in this area.

[01:01:30] Alex Volkov: It has long-form synthesis as well, which is super cool, and it has emotional speech. If you guys remember, we've talked about how important emotion is in voice cloning. For those of you who have followed ThursdAI for a while, you may remember my voice cloned in Russian: I speak with a lot of excitement, while the regular voice clone of Alex speaks in a monotone voice, and that's very clearly not the same kind of person.

[01:01:56] Alex Volkov: So emotional speech is very important. Some of this comes from prompt engineering and some of it happens in voice casting or voice acting. And the best part about this MetaVoice thing is that it's Apache 2.0 licensed and it sounds pretty good. We've talked about multiple TTS models, and now this one is definitely out there.

[01:02:14] Alex Volkov: So if you're building anything and you want a TTS model with voice cloning, I think this is now the best shot you have. It's called MetaVoice, and I'm going to add it to the show notes as well. And I think we have breaking news from a friend, VB, about another model called NeMo.

[01:02:30] Alex Volkov: So let's take a look. Yeah, definitely a new model from NVIDIA, called NeMo. Let me actually use this; I want to use the sound as much as possible.

[01:02:50] Alex Volkov: I'm going to try and find this tweet for you, but basically we have breaking news: VB (reach_vb), a friend of the pod who's in charge of all the cool voice-related and TTS-related tech at Hugging Face, mentioned that NVIDIA AI released NeMo Canary.

[01:03:07] Alex Volkov: NeMo Canary is at the top of the Open ASR Leaderboard. VB is also one of the folks running the leaderboard for us. ASR stands for automatic speech recognition. No, am I confusing this? Yes, automatic speech recognition. Cool. Thank you, Nisten. So basically, if you guys remember Whisper, we talked about Whisper a lot.

[01:03:25] Alex Volkov: This is the leaderboard, and Whisper has been on top of it for a while. Recently, NVIDIA has done some stuff with models like Parakeet. And now we have a new contender on the ASR leaderboard called NeMo Canary 1B. 1B is not that much: the largest Whisper, Whisper large, is around 1.5B.

[01:03:44] Alex Volkov: This is now at the top of the ASR leaderboard. It beats Whisper, and it beats Seamless from Meta as well. I don't know about the license here. It supports four languages, while Whisper supports around a hundred, and we know Whisper is the best for many low-resource languages as well. Canary was trained on not that many hours of annotated audio, only 85,000 hours or so, and it's super fast as well.

[01:04:10] Alex Volkov: It's very interesting that NVIDIA is doing multiple things in this area. We had Parakeet, and now we have Canary as well. What else should we look at? I think it beats Whisper by a considerable margin, again, on these specific languages. Folks, I think we've been on this trend for a while, and it's clear.

[01:04:28] Alex Volkov: Incredible automatic speech recognition is coming on-device very soon. This trend is very obvious and clear. I'll add my thoughts on this as somebody who used Whisper in production for a while: the faster it comes on-device, the better. And specifically, I think this will help me talk about the next topic.

[01:04:47] Alex Volkov: Let's see what else I have to cover. Yeah, I think it's pretty much it. The next topic

[01:04:51] Nisten Tahiraj: I'm trying it right now, by the way. And it's pretty good.

[01:04:55] Alex Volkov: Are you transcribing me in real time or what are you doing?

[01:04:58] Nisten Tahiraj: Yeah, I was transcribing your voice through the phone to my laptop, but weirdly enough it doesn't output numbers, it only outputs words. However,

[01:05:06] Nisten Tahiraj: It seems pretty good, huh? I don't know, it seems good to

[01:05:09] Nisten Tahiraj: me. LGTM, looks good to me.

[01:05:11] Alex Volkov: Yeah, it looks good to me. Absolutely. The word error rate for Whisper is around 8%, I think, on average for these languages, and for Canary it's less, around 5%. If I remember correctly, VB told us that word error rate is roughly how many mistakes the model makes per 100 words, so this makes five mistakes versus eight, I think, on the general datasets.
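"Mistakes per 100 words" is a simplification of the usual definition of word error rate: WER = (S + D + I) / N, where S, D and I are the word substitutions, deletions and insertions in a word-level edit distance against a reference transcript of N words. The Python sketch below illustrates that textbook definition under stated assumptions; `word_error_rate` is a hypothetical helper, not the Open ASR Leaderboard's evaluation code, and leaderboards typically normalize text (casing, punctuation, numbers) before scoring, which this sketch does not do.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Hypothetical helper: WER = (substitutions + deletions + insertions) / reference word count.

    Assumes plain whitespace tokenization and no text normalization.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (one row of the Levenshtein table).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from the hypothesis
                curr[j - 1] + 1,     # insertion: extra word in the hypothesis
                prev[j - 1] + cost,  # match (cost 0) or substitution (cost 1)
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


reference = "the quick brown fox jumps over the lazy dog"
hypothesis = "the quick brown fox jumped over lazy dog"
# One substitution ("jumps" -> "jumped") plus one deletion ("the") over 9 reference words.
print(f"WER: {word_error_rate(reference, hypothesis):.3f}")  # → WER: 0.222
```

Because insertions count as errors, WER can exceed 1.0 when a model hallucinates extra words, so it is not strictly a percentage of words gotten wrong. Under this definition, a WER of 0.05 versus 0.08 corresponds to the "five mistakes versus eight per 100 words" comparison.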

[01:05:36] Alex Volkov: Quite incredible. This is coming, and I think I'll use this to jump to the next thing.

[01:05:39] Alex finds a way to plug Vision Pro in spaces about AI

[01:05:39] Alex Volkov: The next thing, which we'll cover briefly: I haven't used it for the show, but since last Friday, basically, I've been existing both in reality and in Apple's augmented, spatial virtual reality.